Code

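import numpy as np

# Activation functions and their derivatives.
# Each d* function takes the activation OUTPUT y = f(x), not the input x.

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def dsigmoid(y):
    return y * (1 - y)

def tanh(x):
    return np.tanh(x)

def dtanh(y):
    return 1.0 - y ** 2

def relu(x):
    tmp = x.copy()
    tmp[tmp < 0] = 0
    return tmp

def drelu(y):
    # y is the ReLU output, so a unit is active only where y > 0
    tmp = y.copy()
    tmp[tmp > 0] = 1
    tmp[tmp <= 0] = 0
    return tmp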

class MLPClassifier(object):
    """Multi-layer perceptron trained with the backpropagation (BP) algorithm."""

    def __init__(self,
                 layers,
                 activation='tanh',
                 epochs=20, batch_size=1, learning_rate=0.01):
        """
        :param layers: number of units in each layer, e.g. (2, 3, 1)
        :param activation: activation function: 'tanh', 'sigmoid' or 'relu'
        :param epochs: number of training epochs
        :param batch_size: number of samples drawn per update
        :param learning_rate: learning rate
        """
        self.epochs = epochs
        self.learning_rate = learning_rate
        self.layers = []
        self.weights = []
        self.batch_size = batch_size

        # One weight matrix per pair of adjacent layers
        for i in range(0, len(layers) - 1):
            weight = np.random.random((layers[i], layers[i + 1]))
            layer = np.ones(layers[i])
            self.layers.append(layer)
            self.weights.append(weight)
        self.layers.append(np.ones(layers[-1]))

        # One threshold (negative bias) vector per non-input layer
        self.thresholds = []
        for i in range(1, len(layers)):
            threshold = np.random.random(layers[i])
            self.thresholds.append(threshold)

        if activation == 'tanh':
            self.activation = tanh
            self.dactivation = dtanh
        elif activation == 'sigmoid':
            self.activation = sigmoid
            self.dactivation = dsigmoid
        elif activation == 'relu':
            self.activation = relu
            self.dactivation = drelu
        else:
            raise ValueError("unknown activation: %r" % (activation,))

    def fit(self, X, y):
        """
        :param X: shape = [n_samples, n_features]
        :param y: shape = [n_samples, n_outputs]
        :return: self
        """
        for _ in range(self.epochs * (X.shape[0] // self.batch_size)):
            # Draw a random mini-batch of indices (sampled with replacement)
            i = np.random.choice(X.shape[0], self.batch_size)
            self.update(X[i])
            self.back_propagate(y[i])
        return self

    def predict(self, X):
        """
        :param X: shape = [n_samples, n_features]
        :return: raw network outputs, shape = [n_samples, n_outputs]
        """
        self.update(X)
        return self.layers[-1].copy()

    def update(self, inputs):
        # Forward pass: store the activations of every layer
        self.layers[0] = inputs
        for i in range(len(self.weights)):
            next_layer_in = self.layers[i] @ self.weights[i] - self.thresholds[i]
            self.layers[i + 1] = self.activation(next_layer_in)

    def back_propagate(self, y):
        # Output-layer deltas, one row per sample: shape (batch, n_outputs)
        errors = y - self.layers[-1]
        deltas = [self.dactivation(self.layers[-1]) * errors]
        # Propagate the deltas back through the hidden layers
        for i in range(len(self.weights) - 1, 0, -1):
            tmp = deltas[-1] @ self.weights[i].T * self.dactivation(self.layers[i])
            deltas.append(tmp)
        deltas.reverse()
        # Average over the batch only at the end, so batch_size > 1 yields the
        # mean gradient; with batch_size = 1 this matches a per-sample update
        n = y.shape[0]
        for i in range(len(self.weights)):
            self.weights[i] += self.learning_rate * self.layers[i].T @ deltas[i] / n
            self.thresholds[i] -= self.learning_rate * deltas[i].sum(axis=0) / n
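The derivative helpers in the listing above are written in terms of the activation output `y = f(x)` rather than the input `x`, which is why `back_propagate` can pass `self.layers[i]` straight to `self.dactivation`. The following is a minimal sketch that checks this convention against a finite-difference estimate. The functions are copied from the listing so the snippet runs on its own; `numeric_derivative` is a hypothetical helper introduced here for illustration and is not part of the original code.

```python
import numpy as np

def sigmoid(x):
    return 1 / (1 + np.exp(-x))

def dsigmoid(y):
    return y * (1 - y)

def dtanh(y):
    return 1.0 - y ** 2

def numeric_derivative(f, x, eps=1e-6):
    """Central finite difference of f at x (hypothetical helper)."""
    return (f(x + eps) - f(x - eps)) / (2 * eps)

x = np.linspace(-3, 3, 13)

# The analytic derivatives are evaluated at the OUTPUT y = f(x)
sigmoid_ok = np.allclose(dsigmoid(sigmoid(x)),
                         numeric_derivative(sigmoid, x), atol=1e-6)
tanh_ok = np.allclose(dtanh(np.tanh(x)),
                      numeric_derivative(np.tanh, x), atol=1e-6)
print(sigmoid_ok, tanh_ok)
```

Passing the raw input `x` to `dsigmoid` or `dtanh` instead of the output would fail this check, which is a common source of silent training errors.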

Test code

import sklearn.datasets
import numpy as np
import matplotlib.pyplot as plt

def plot_decision_boundary(pred_func, X, y, title=None):
    """Plot the sample points and the decision boundary of a classifier.

    :param pred_func: prediction function
    :param X: training inputs
    :param y: training labels
    :return: None
    """
    # Set min and max values and give it some padding
    x_min, x_max = X[:, 0].min() - .5, X[:, 0].max() + .5
    y_min, y_max = X[:, 1].min() - .5, X[:, 1].max() + .5
    h = 0.01
    # Generate a grid of points with distance h between them
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h))
    # Predict the function value for the whole grid
    Z = pred_func(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    # Plot the contour and training examples
    plt.contourf(xx, yy, Z, cmap=plt.cm.Spectral)
    plt.scatter(X[:, 0], X[:, 1], s=40, c=np.ravel(y), cmap=plt.cm.Spectral)
    if title:
        plt.title(title)
    plt.show()

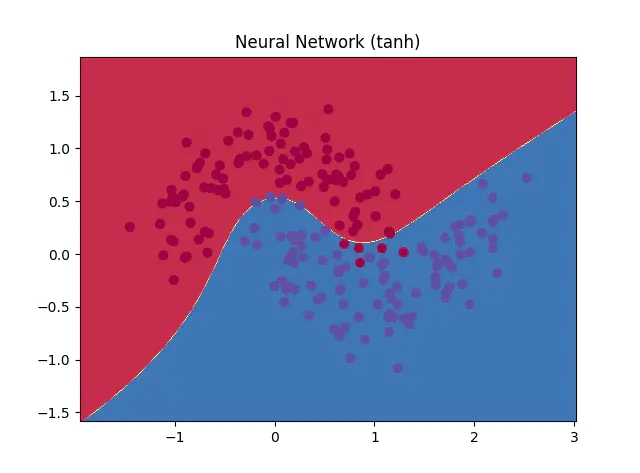

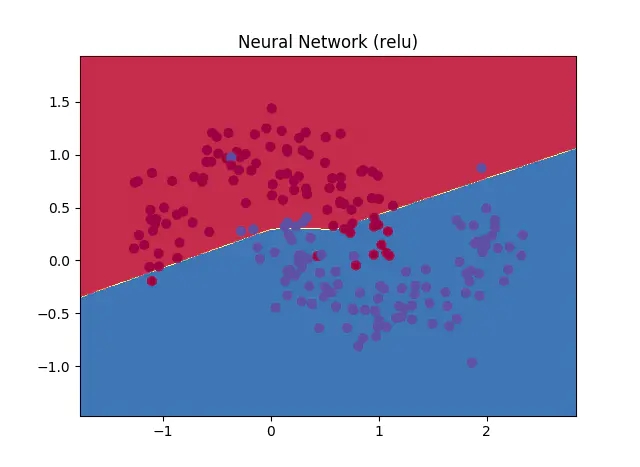

def test_mlp():

X, y = sklearn.datasets.make_moons(200, noise=0.20)

y = y.reshape((-1, 1))

n = MLPClassifier((2, 3, 1), activation='tanh', epochs=300, learning_rate=0.01)

n.fit(X, y)

def tmp(X):

sign = np.vectorize(lambda x: 1 if x >= 0.5 else 0)

ans = sign(n.predict(X))

return ans

plot_decision_boundary(tmp, X, y, 'Neural Network')

效果
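The decision-boundary plot is a visual check only. As a numeric complement, a small helper could report the fraction of correctly classified samples. The sketch below assumes a prediction function that returns 0/1 labels, like the `tmp` wrapper in `test_mlp`; `accuracy`, `toy_predict`, and the demo arrays are hypothetical names and data introduced here for illustration, not part of the original code.

```python
import numpy as np

def accuracy(pred_func, X, y):
    """Fraction of samples whose predicted label equals the true label.

    pred_func is assumed to return 0/1 labels for the rows of X.
    """
    y_pred = np.asarray(pred_func(X)).ravel()
    y_true = np.asarray(y).ravel()
    return float(np.mean(y_pred == y_true))

# Stand-in predictor for illustration only:
# label 1 when the first feature is positive
def toy_predict(X):
    return (X[:, 0] > 0).astype(int)

X_demo = np.array([[-1.0, 0.0], [2.0, 1.0], [0.5, -1.0], [-0.2, 3.0]])
y_demo = np.array([[0], [1], [0], [0]])
print(accuracy(toy_predict, X_demo, y_demo))  # 3 of 4 correct -> 0.75
```

Inside `test_mlp`, the same helper could be called as `accuracy(tmp, X, y)` after training. Note that this would measure accuracy on the training set, not generalization.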

For more machine learning code, see https://github.com/WiseDoge/plume

That covers how to implement a fully connected neural network (multi-layer perceptron) in Python.