
This article shares a complete C++ implementation of a BP (backpropagation) neural network for reference. The details are as follows.

BP.h

#pragma once

#include<vector>

#include<stdlib.h>

#include<time.h>

#include<cmath>

#include<iostream>

using std::vector;

using std::exp;

using std::cout;

using std::endl;

class BP

{

private:

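int studyNum;//maximum number of training iterations

double h;//learning rate

double allowError;//error tolerance at which training stops

vector<int> layerNum;//number of nodes in each layer, excluding the constant bias node

vector<vector<vector<double>>> w;//weights: w[l][j][k] connects node k of layer l to node j of layer l+1

vector<vector<vector<double>>> dw;//accumulated weight increments (gradients)

vector<vector<double>> b;//biases

vector<vector<double>> db;//accumulated bias increments (gradients)

vector<vector<vector<double>>> a;//node values: a[l][i][j] is node i of layer l for sample j

vector<vector<double>> x;//inputs

vector<vector<double>> y;//expected outputs

void iniwb();//initialize w and b

void inidwdb();//initialize dw and db

double sigmoid(double z);//activation function

void forward();//forward propagation

void backward();//backward propagation

double Error();//compute the error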

public:

BP(vector<int>const& layer_num, vector<vector<double>>const & input_a0,

vector<vector<double>> const & output_y, double hh = 0.5, double allerror = 0.001, int studynum = 1000);

BP();

void setLayerNumInput(vector<int>const& layer_num, vector<vector<double>> const & input);

void setOutputy(vector<vector<double>> const & output_y);

void setHErrorStudyNum(double hh, double allerror,int studynum);

void run();//train the BP neural network

vector<double> predict(vector<double>& input);//predict with the trained network

~BP();

};
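The constructor and `setLayerNumInput` take the training data with one row per node and one column per sample, so `input_a0[i][j]` is the value of input node `i` in sample `j`. If the data is stored the other way round (one row per sample), it has to be transposed first. The helper below is only a minimal sketch: `toNodeMajor` is a hypothetical name that is not part of the original class, and it assumes every sample has the same number of values.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of the original BP class).
// Converts sample-major data (one row per sample) into the node-major
// layout (one row per node, one column per sample).
// Assumption: all rows of `samples` have the same length as the first row.
std::vector<std::vector<double>> toNodeMajor(const std::vector<std::vector<double>>& samples)
{
    if (samples.empty())
        return {};
    const std::size_t nodeCount = samples[0].size();
    std::vector<std::vector<double>> out(nodeCount, std::vector<double>(samples.size()));
    for (std::size_t s = 0; s < samples.size(); ++s)
        for (std::size_t n = 0; n < nodeCount; ++n)
            out[n][s] = samples[s][n];
    return out;
}
```

Under these assumptions, the result of `toNodeMajor(samples)` could be passed as `input_a0`, and the same conversion would apply to `output_y`.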

BP.cpp

#include "BP.h"

BP::BP(vector<int>const& layer_num, vector<vector<double>>const & input,

vector<vector<double>> const & output_y, double hh, double allerror,int studynum)

{

layerNum = layer_num;

x = input;//one row per input node, one column per sample

y = output_y;

h = hh;

allowError = allerror;

a.resize(layerNum.size());//one entry per layer

for (int i = 0; i < layerNum.size(); i++)

{

a[i].resize(layerNum[i]);//one entry per node in this layer

for (int j = 0; j < layerNum[i]; j++)

a[i][j].resize(input[0].size());

}

a[0] = input;

studyNum = studynum;

}

BP::BP()

{

layerNum = {};

a = {};

y = {};

h = 0;

allowError = 0;

studyNum = 0;//do not leave the iteration limit uninitialized

}

BP::~BP()

{

}

void BP::setLayerNumInput(vector<int>const& layer_num, vector<vector<double>> const & input)

{

layerNum = layer_num;

x = input;

a.resize(layerNum.size());//one entry per layer

for (int i = 0; i < layerNum.size(); i++)

{

a[i].resize(layerNum[i]);//one entry per node in this layer

for (int j = 0; j < layerNum[i]; j++)

a[i][j].resize(input[0].size());

}

a[0] = input;

}

void BP::setOutputy(vector<vector<double>> const & output_y)

{

y = output_y;

}

void BP::setHErrorStudyNum(double hh, double allerror,int studynum)

{

h = hh;

allowError = allerror;

studyNum = studynum;

}

//Initialize the weights and biases

void BP::iniwb()

{

w.resize(layerNum.size() - 1);

b.resize(layerNum.size() - 1);

srand((unsigned)time(NULL));

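//Loop over the layers

for (int l = 0; l < layerNum.size() - 1; l++)

{

w[l].resize(layerNum[l + 1]);

b[l].resize(layerNum[l + 1]);

//Loop over the nodes of the next layer

for (int j = 0; j < layerNum[l + 1]; j++)

{

w[l][j].resize(layerNum[l]);

//Cast to double first: rand() / RAND_MAX is integer division and is almost always 0

b[l][j] = -1 + 2 * ((double)rand() / RAND_MAX);

//Loop over the nodes of the previous layer

for (int k = 0; k < layerNum[l]; k++)

w[l][j][k] = -1 + 2 * ((double)rand() / RAND_MAX);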

}

}

}

void BP::inidwdb()

{

dw.resize(layerNum.size() - 1);

db.resize(layerNum.size() - 1);

//Loop over the layers

for (int l = 0; l < layerNum.size() - 1; l++)

{

dw[l].resize(layerNum[l + 1]);

db[l].resize(layerNum[l + 1]);

//Loop over the nodes of the next layer

for (int j = 0; j < layerNum[l + 1]; j++)

{

dw[l][j].resize(layerNum[l]);

db[l][j] = 0;

//Loop over the nodes of the previous layer; zero dw here, not w

for (int k = 0; k < layerNum[l]; k++)

dw[l][j][k] = 0;

}

}

}

//Activation function

double BP::sigmoid(double z)

{

return 1.0 / (1 + exp(-z));

}

void BP::forward()

{

for (int l = 1; l < layerNum.size(); l++)

{

for (int i = 0; i < layerNum[l]; i++)

{

for (int j = 0; j < x[0].size(); j++)

{

a[l][i][j] = 0;//node i of layer l, sample j

//Weighted sum of the previous layer's node values

for (int k = 0; k < layerNum[l - 1]; k++)

a[l][i][j] += a[l - 1][k][j] * w[l - 1][i][k];

//Add the node bias

a[l][i][j] += b[l - 1][i];

a[l][i][j] = sigmoid(a[l][i][j]);

}

}

}

}

void BP::backward()

{

int xNum = x[0].size();//number of samples

//daP holds da for layer l, daB holds da for layer l+1

vector<double> daP, daB;

for (int j = 0; j < xNum; j++)

{

//Handle dw for the last layer

daP.clear();

daP.resize(layerNum[layerNum.size() - 1]);

for (int i = 0, l = layerNum.size() - 1; i < layerNum[l]; i++)

{

daP[i] = a[l][i][j] - y[i][j];

for (int k = 0; k < layerNum[l - 1]; k++)

dw[l - 1][i][k] += daP[i] * a[l][i][j] * (1 - a[l][i][j])*a[l - 1][k][j];

db[l - 1][i] += daP[i] * a[l][i][j] * (1 - a[l][i][j]);

}

//Handle the weight increments dw of the remaining layers

for (int l = layerNum.size() - 2; l > 0; l--)

{

daB = daP;

daP.clear();

daP.resize(layerNum[l]);

for (int k = 0; k < layerNum[l]; k++)

{

daP[k] = 0;

for (int i = 0; i < layerNum[l + 1]; i++)

daP[k] += daB[i] * a[l + 1][i][j] * (1 - a[l + 1][i][j])*w[l][i][k];

//dw

for (int i = 0; i < layerNum[l - 1]; i++)

dw[l - 1][k][i] += daP[k] * a[l][k][j] * (1 - a[l][k][j])*a[l - 1][i][j];

//db

db[l-1][k] += daP[k] * a[l][k][j] * (1 - a[l][k][j]);

}

}

}

//Average dw and db over the samples

for (int l = 0; l < layerNum.size() - 1; l++)

{

//Loop over the nodes of the next layer

for (int j = 0; j < layerNum[l + 1]; j++)

{

db[l][j] = db[l][j] / xNum;

//Loop over the nodes of the previous layer; average dw, not w

for (int k = 0; k < layerNum[l]; k++)

dw[l][j][k] = dw[l][j][k] / xNum;

}

}

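//Update the parameters w and b, then clear dw and db so the next pass starts from zero

for (int l = 0; l < layerNum.size() - 1; l++)

{

for (int j = 0; j < layerNum[l + 1]; j++)

{

b[l][j] = b[l][j] - h * db[l][j];

db[l][j] = 0;

//Loop over the nodes of the previous layer

for (int k = 0; k < layerNum[l]; k++)

{

w[l][j][k] = w[l][j][k] - h * dw[l][j][k];

dw[l][j][k] = 0;

}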

}

}

}

double BP::Error()

{

int l = layerNum.size() - 1;

double temp = 0, error = 0;

for (int i = 0; i < layerNum[l]; i++)

for (int j = 0; j < x[0].size(); j++)

{

temp = a[l][i][j] - y[i][j];

error += temp * temp;

}

error = error / x[0].size();//average the error over all samples

error = error / 2;

cout << error << endl;

return error;

}

//Train the neural network

void BP::run()

{

iniwb();

inidwdb();

int i = 0;

for (; i < studyNum; i++)

{

forward();

if (Error() <= allowError)

{

cout << "Study Success!" << endl;

break;

}

backward();

}

if (i == studyNum)

cout << "Study Failed!" << endl;

}

vector<double> BP::predict(vector<double>& input)

{

vector<vector<double>> a1;

a1.resize(layerNum.size());

for (int l = 0; l < layerNum.size(); l++)

a1[l].resize(layerNum[l]);

a1[0] = input;

for (int l = 1; l < layerNum.size(); l++)

for (int i = 0; i < layerNum[l]; i++)

{

a1[l][i] = 0;//node i of layer l

//Weighted sum of the previous layer's node values

for (int k = 0; k < layerNum[l - 1]; k++)

a1[l][i] += a1[l - 1][k] * w[l - 1][i][k];

//Add the node bias

a1[l][i] += b[l - 1][i];

a1[l][i] = sigmoid(a1[l][i]);

}

return a1[layerNum.size() - 1];

}
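For reference, the quantities that `backward()` accumulates can be written out as follows. This is a sketch of the standard derivation for sigmoid units with a squared-error loss, using the code's indexing: $w^{l}_{ik}$ stands for `w[l][i][k]`, $a^{l}_{ij}$ for `a[l][i][j]`, $N$ for the number of samples and $L$ for the last layer. The symbol $\delta$ is introduced here for convenience and does not appear in the code.

$$
E = \frac{1}{2N}\sum_{j=1}^{N}\sum_{i}\left(a^{L}_{ij}-y_{ij}\right)^{2}
$$

$$
\delta^{L}_{ij} = \left(a^{L}_{ij}-y_{ij}\right)a^{L}_{ij}\left(1-a^{L}_{ij}\right),
\qquad
\delta^{l}_{kj} = \Big(\sum_{i}\delta^{l+1}_{ij}\,w^{l}_{ik}\Big)\,a^{l}_{kj}\left(1-a^{l}_{kj}\right)
$$

$$
\frac{\partial E}{\partial w^{l-1}_{ki}} = \frac{1}{N}\sum_{j=1}^{N}\delta^{l}_{kj}\,a^{l-1}_{ij},
\qquad
\frac{\partial E}{\partial b^{l-1}_{k}} = \frac{1}{N}\sum_{j=1}^{N}\delta^{l}_{kj}
$$

In the code, `daP` holds the factor in parentheses (the derivative with respect to the node value), and multiplying it by `a * (1 - a)` gives $\delta$. Each parameter is then moved against its gradient with step size `h`.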

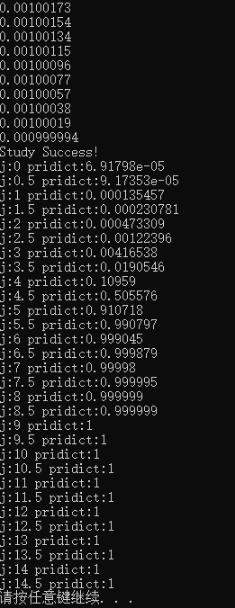
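`iniwb()` draws the initial weights and biases from the range [-1, 1] with `rand()`. When scaling `rand()` this way the division has to be done in floating point, because dividing two `int` values truncates the result. As an alternative, the C++11 `<random>` header can produce the same range directly. The function below is only a sketch of that idea: `randomWeights` and its `seed` parameter are hypothetical and are not part of the original class.

```cpp
#include <random>
#include <vector>

// Hypothetical helper (not part of the original BP class).
// Fills a rows x cols matrix with values drawn uniformly from [-1, 1).
// A fixed seed makes a training run reproducible.
std::vector<std::vector<double>> randomWeights(int rows, int cols, unsigned seed)
{
    std::mt19937 gen(seed);
    std::uniform_real_distribution<double> dist(-1.0, 1.0);
    std::vector<std::vector<double>> m(rows, std::vector<double>(cols));
    for (auto& row : m)
        for (auto& value : row)
            value = dist(gen);
    return m;
}
```

For example, `randomWeights(layerNum[l + 1], layerNum[l], seed)` would give a matrix with the same shape as `w[l]`.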

Test program:

#include"BP.h"

int main()

{

vector<int> layer_num = { 1, 10, 1 };

vector<vector<double>> input_a0 = { { 1,2,3,4,5,6,7,8,9,10 } };

vector<vector<double>> output_y = { {0,0,0,0,1,1,1,1,1,1} };

BP bp(layer_num, input_a0,output_y,0.6,0.001, 2000);

bp.run();

for (int j = 0; j < 30; j++)

{

vector<double> input = { 0.5*j };

vector<double> output = bp.predict(input);

for (auto i : output)

cout << "j:" << 0.5*j <<" predict:" << i << " ";

cout << endl;

}

system("pause");

return 0;

}

Output:

That is all for this article. I hope it helps with your studies, and I hope you will continue to support 自由互联.