I. Exploring the Request

Page analysis:

Pagination analysis:

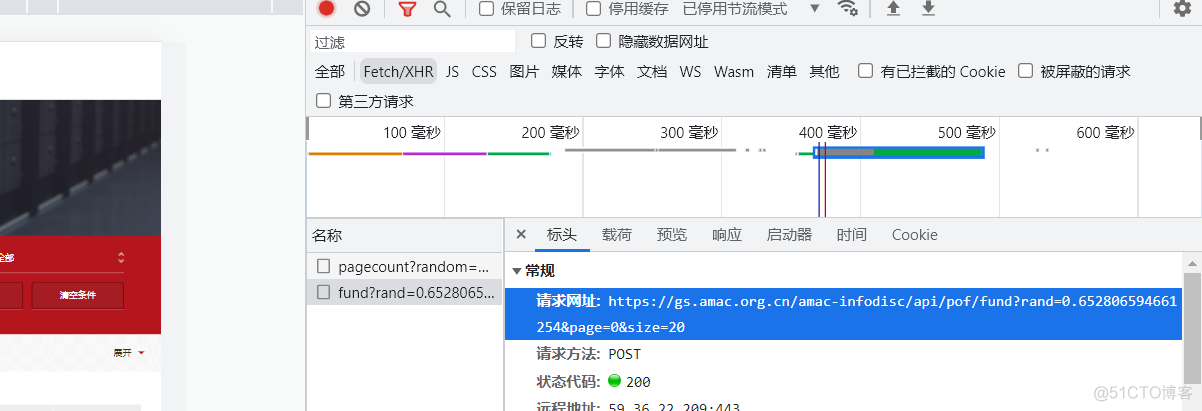

1. Page 1: https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand=0.652806594661254&page=0&size=20

2. Page 2: https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand=0.652806594661254&page=1&size=20

3. Page 3: https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand=0.652806594661254&page=2&size=20

Refresh the page and `rand` changes; it appears to be just a random number:

https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand=0.16767992415199218&page=0&size=20

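The pattern: `page` has to change from page to page (it is 0-based, so `page=0` is the first page), while `rand` is random. That gives the URL template: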

https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand={}&page={}&size=20
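Filling in that template for several pages could look like the sketch below. `build_page_urls` is a hypothetical helper name, and drawing a new `rand` for every URL mirrors what the page itself appears to do; it is not a confirmed server requirement.

```python
import random

# URL template from above: first slot is rand, second is the 0-based page index
TEMPLATE = "https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand={}&page={}&size=20"


def build_page_urls(n_pages):
    """Return list-API URLs for pages 0 .. n_pages-1, each with a fresh random rand."""
    return [TEMPLATE.format(random.random(), page) for page in range(n_pages)]


for u in build_page_urls(3):
    print(u)
```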

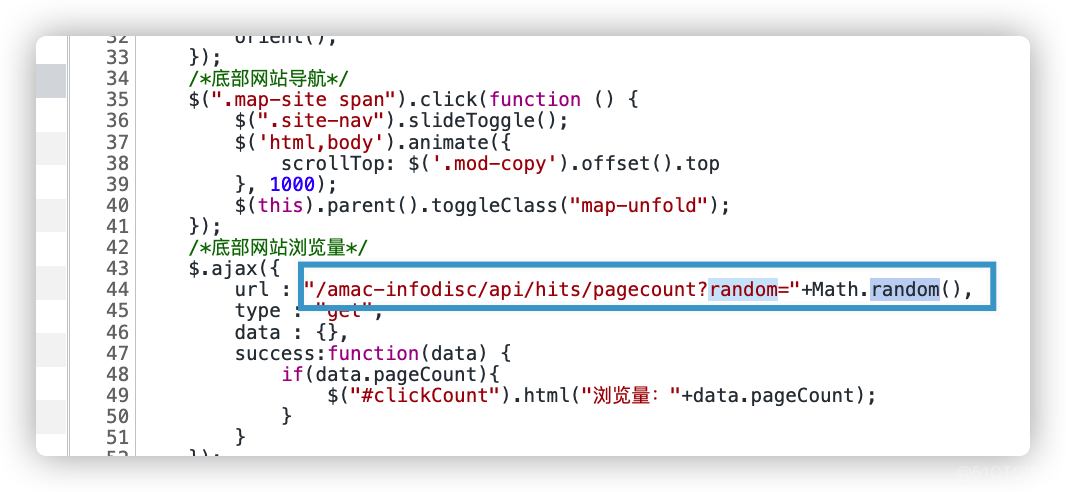

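You can also check the page's JavaScript to confirm that `rand` comes from a random-number function.

A snippet that generates such a random number in Python: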

```python
import random

r = random.random()  # float in [0.0, 1.0), same shape as the rand values above
print(str(r))
```

A complete request for one page looks like this:

```python
import json
import random

import requests

headers = {
    "Accept-Language": "zh-CN,zh;q=0.9",
    'Content-Type': 'application/json',
    'Origin': 'http://gs.amac.org.cn',
    'Referer': 'https://gs.amac.org.cn/amac-infodisc/res/pof/fund/index.html',
    'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/101.0.4951.54 Mobile Safari/537.36'
}

r = random.random()  # fresh random value for the rand parameter
num = 1  # page index; pages are 0-based, so 1 is the second page

url = "https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand=" + str(r) + "&page=" + str(num) + "&size=20"

data = json.dumps({})  # the list API is called with POST and an empty JSON body

response = requests.post(url=url, data=data, headers=headers)
print(response)  # e.g. <Response [200]>
```
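The response body is JSON. Judging from the full source in section III, the records sit under the `content` key, and each record has fields such as `id`, `fundName`, `managerName` and a relative `url` that is appended to `https://gs.amac.org.cn/amac-infodisc/res/pof/fund/` to form the detail-page address. A minimal offline sketch of that step follows; `parse_fund_list` is a hypothetical name and the sample JSON is made up for illustration.

```python
import json

DETAIL_BASE = "https://gs.amac.org.cn/amac-infodisc/res/pof/fund/"


def parse_fund_list(body):
    """Extract (id, fund name, manager name, detail-page URL) from one list-API response body."""
    records = json.loads(body)["content"]
    return [
        (rec["id"], rec["fundName"], rec["managerName"], DETAIL_BASE + rec["url"])
        for rec in records
    ]


# Made-up sample, shaped only like the fields the full script reads
sample = json.dumps({"content": [
    {"id": "example-id", "fundName": "示例基金", "managerName": "示例管理人",
     "url": "351000128956.html"},
]})
print(parse_fund_list(sample))
```

With a live response, the same function can be fed `response.text`.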

II. Analyzing the Detail Page

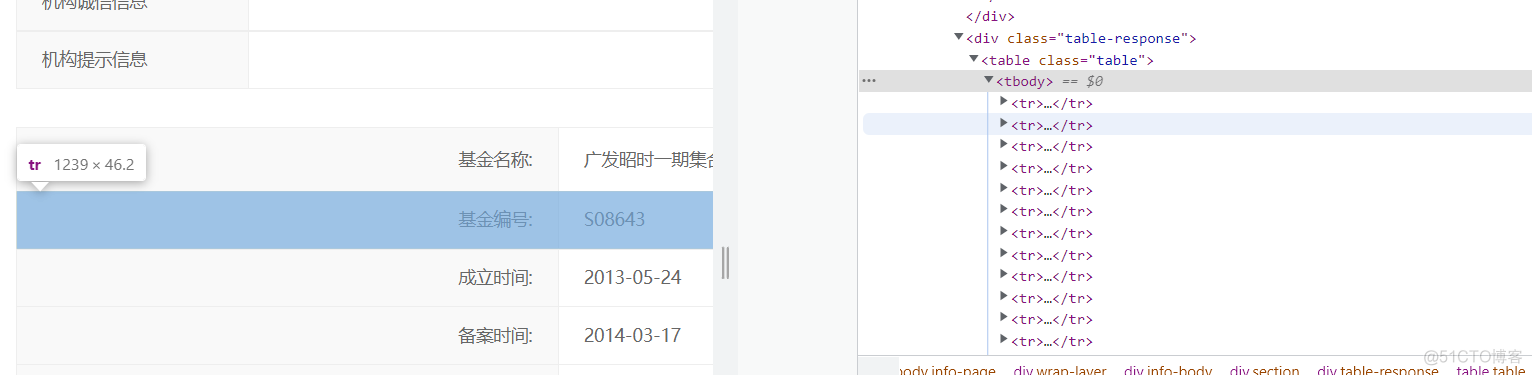

2.1 Basic parsing

Take this detail page as an example: https://gs.amac.org.cn/amac-infodisc/res/pof/fund/351000128956.html

We parse it with Beautiful Soup:

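```python
import requests
from bs4 import BeautifulSoup

url2 = 'https://gs.amac.org.cn/amac-infodisc/res/pof/fund/351000128956.html'
# Request the detail page (headers as defined above) and decode the raw bytes as UTF-8
html = requests.get(url=url2, headers=headers).content.decode('utf-8')
soup = BeautifulSoup(html, 'lxml')  # parse with the lxml backend
```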

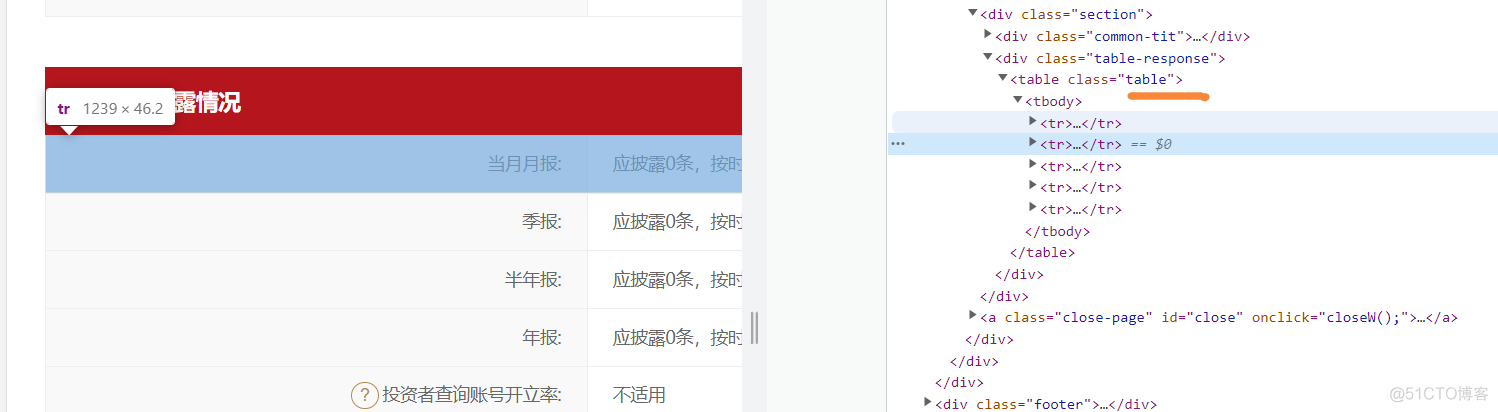

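Looking at the content: the upper table (fund name, number, dates and so on) sits entirely inside one `<table>`, while the lower table (information disclosure status) is in a separate `<table>` of its own.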
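With Beautiful Soup, the two tables can be told apart by position and read row by row as label/value pairs. This sketch assumes each row holds the label in its first `<td>` and the value in the second, as the `td[2]` XPath expressions in the full source suggest; `table_to_dict` is a hypothetical helper, the sample HTML is made up, and the stdlib `html.parser` backend is used only so the example runs without lxml.

```python
from bs4 import BeautifulSoup


def table_to_dict(table):
    """Map the text of each row's first cell to the text of its second cell."""
    result = {}
    for tr in table.find_all("tr"):
        cells = tr.find_all("td")
        if len(cells) >= 2:
            result[cells[0].get_text(strip=True)] = cells[1].get_text(strip=True)
    return result


# Made-up HTML with two tables: basic information on top, disclosure status below
html = """
<table><tr><td>基金名称</td><td> 示例基金 </td></tr>
<tr><td>基金编号</td><td>EXAMPLE01</td></tr></table>
<table><tr><td>当月月报</td><td>示例</td></tr></table>
"""
soup = BeautifulSoup(html, "html.parser")
basic_table, disclosure_table = soup.find_all("table")[:2]
print(table_to_dict(basic_table))
print(table_to_dict(disclosure_table))
```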

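2.2 Locating and extracting content

For example, to locate the fund name, the simplest approach is to copy its XPath straight from the browser's developer tools: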

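```python
# Fund name: first text node of row 1, column 2 in the upper table
num1 = (tree.xpath('/html/body/div[3]/div/div[2]/div[1]/div[2]/table/tbody/tr[1]/td[2]/text()')[0]
        .replace('\r\n', '').replace(" ", "").replace("\t", "")
        .encode('ISO-8859-1').decode('utf-8'))
```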
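Why the trailing `.encode('ISO-8859-1').decode('utf-8')`? A likely explanation: when a `text/*` response declares no charset in its `Content-Type` header, `requests` falls back to ISO-8859-1 for `response.text`, so UTF-8 Chinese text comes out garbled. ISO-8859-1 maps every byte to exactly one character, so encoding the garbled string back to ISO-8859-1 recovers the original bytes, which then decode correctly as UTF-8. The round trip can be checked offline; the sample string is hypothetical:

```python
# Hypothetical sample standing in for a fund name on the page
original = "示例基金"

# What .text yields if the UTF-8 bytes are decoded as ISO-8859-1 (mojibake)
garbled = original.encode("utf-8").decode("ISO-8859-1")

# The repair used in the script: back to the raw bytes, then decode them as UTF-8
repaired = garbled.encode("ISO-8859-1").decode("utf-8")

print(garbled == original)   # False
print(repaired == original)  # True
```

An alternative that should make the repair step unnecessary is to set `response.encoding = 'utf-8'` before reading `response.text`, or to decode `response.content` yourself as in section 2.1.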

III. Full Source Code

```python
import csv
import json
import random

import requests
from lxml import etree

headers = {
    "Accept-Language": "zh-CN,zh;q=0.9",
    'Content-Type': 'application/json',
    'Origin': 'http://gs.amac.org.cn',
    'Referer': 'https://gs.amac.org.cn/amac-infodisc/res/pof/fund/index.html',
    'User-Agent': 'Mozilla/5.0 (Linux; Android 6.0; Nexus 5 Build/MRA58N) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/101.0.4951.54 Mobile Safari/537.36'
}

# XPath prefixes of the two tables on the detail page
BASIC = '/html/body/div[3]/div/div[2]/div[1]/div[2]/table/tbody/'       # basic fund information
DISCLOSURE = '/html/body/div[3]/div/div[2]/div[2]/div[2]/table/tbody/'  # information disclosure

count = 0
rows = []

# page is 0-based (page=0 is the first page), so this requests the first 14 pages
for i in range(0, 14):
    r = random.random()  # fresh random value for rand
    url = "https://gs.amac.org.cn/amac-infodisc/api/pof/fund?rand=" + str(r) + "&page=" + str(i) + "&size=20"
    data = json.dumps({})  # empty JSON body
    response = requests.post(url=url, data=data, headers=headers)
    datas = json.loads(response.text)["content"]

    # Get the detail-page link of every fund on this page
    for data1 in datas:
        jjid = data1['id']  # fund ID
        managerurl = data1['managerUrl']  # manager page URL
        fundName = data1['fundName']  # fund name
        managename = data1['managerName']  # fund manager name
        url = data1['url']  # relative detail-page URL
        url2 = 'https://gs.amac.org.cn/amac-infodisc/res/pof/fund/' + url  # full detail-page URL

        count += 1
        print("Crawling record " + str(count))

        # Parse the detail page; Chinese text taken from it is repaired below
        # with .encode('ISO-8859-1').decode('utf-8')
        html = requests.get(url=url2, headers=headers).text.encode('utf-8')
        tree = etree.HTML(html)

        num1_0 = tree.xpath(BASIC + 'tr[1]/td[2]/text()')
        if len(num1_0) == 0:
            num1 = num1_0
        else:
            num1 = num1_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # fund name

        num2 = tree.xpath(BASIC + 'tr[2]/td[2]/text()')  # fund number

        num3_0 = tree.xpath(BASIC + 'tr[3]/td[2]/text()')
        if len(num3_0) == 0:
            num3 = num3_0
        else:
            num3 = num3_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "")  # establishment date

        num4_0 = tree.xpath(BASIC + 'tr[4]/td[2]/text()')
        if len(num4_0) == 0:
            num4 = num4_0
        else:
            num4 = num4_0[0].replace(" ", "").replace("\t", "")  # filing date

        num5_0 = tree.xpath(BASIC + 'tr[5]/td[2]/text()')
        if len(num5_0) == 0:
            num5 = num5_0
        else:
            num5 = num5_0[0].encode('ISO-8859-1').decode('utf-8')  # fund type

        num6_0 = tree.xpath(BASIC + 'tr[6]/td[2]/text()')
        if len(num6_0) == 0:
            num6 = num6_0
        else:
            num6 = num6_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # fund type

        num7_0 = tree.xpath(BASIC + 'tr[7]/td[2]/a/text()')
        if len(num7_0) == 0:
            num7 = num7_0
        else:
            num7 = num7_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # currency

        num8_0 = tree.xpath(BASIC + 'tr[8]/td[2]/text()')  # check row 8 itself, not row 7
        if len(num8_0) == 0:
            num8 = num8_0
        else:
            num8 = num8_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # fund manager name

        num9_0 = tree.xpath(BASIC + 'tr[9]/td[2]/text()')
        if len(num9_0) == 0:
            num9 = num9_0
        else:
            num9 = num9_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # management type

        num10_0 = tree.xpath(BASIC + 'tr[10]/td[2]/text()')
        if len(num10_0) == 0:
            num10 = num10_0
        else:
            num10 = num10_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # custodian name

        num11_0 = tree.xpath(BASIC + 'tr[11]/td[2]/text()')
        if len(num11_0) == 0:
            num11 = num11_0
        else:
            num11 = num11_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # operating status

        num12 = tree.xpath(BASIC + 'tr[12]/td[2]/text()')  # fund information last updated

        num13_0 = tree.xpath(BASIC + 'tr[13]/td[2]/text()')
        if len(num13_0) == 0:
            num13 = num13_0
        else:
            num13 = num13_0[0].replace('\r\n', '').replace(" ", "").replace("\t", "").encode(
                'ISO-8859-1').decode('utf-8')  # special notice from the fund industry association

        xian = tree.xpath(DISCLOSURE + 'tr[1]/td[2]/text()')[0].replace('\r\n', '').replace(
            " ", "").replace("\t", "").encode('ISO-8859-1').decode('utf-8')  # monthly report (current month)
        xian2 = tree.xpath(DISCLOSURE + 'tr[2]/td[2]/text()')[0].replace('\r\n', '').replace(
            " ", "").replace("\t", "").encode('ISO-8859-1').decode('utf-8')  # quarterly report
        xian3 = tree.xpath(DISCLOSURE + 'tr[3]/td[3]/text()')  # semi-annual report
        xian4 = tree.xpath(DISCLOSURE + 'tr[4]/td[2]/text()')[0].replace('\r\n', '').replace(
            " ", "").replace("\t", "").encode('ISO-8859-1').decode('utf-8')  # annual report
        xian5 = tree.xpath(DISCLOSURE + 'tr[5]/td[2]/text()')[0].replace('\r\n', '').replace(
            " ", "").replace("\t", "").encode('ISO-8859-1').decode('utf-8')  # account opening rate

        row = (num1, num2, num3, num4, num5, num6, num7, num8, num9, num10, num11, num12, num13,
               xian, xian2, xian3, xian4, xian5)
        rows.append(row)

# newline='' lets the csv module control line endings
with open('基金.csv', 'w', newline='', encoding='gbk', errors='ignore') as f:
    writer = csv.writer(f)
    # X1-X9 are placeholder headers for num5-num13
    writer.writerow(["Fund name", "Fund number", "Establishment date", "Filing date",
                     "X1", "X2", "X3", "X4", "X5", "X6", "X7", "X8", "X9",
                     "Monthly report", "Quarterly report", "Semi-annual report", "Annual report",
                     "Account opening rate"])
    writer.writerows(rows)
```
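The script writes the CSV with `encoding='gbk'` and `errors='ignore'`. That combination has a side effect worth knowing: any character GBK cannot represent is dropped silently instead of raising an error. A small check, using an emoji as a stand-in for such a character (the sample text is hypothetical):

```python
text = "示例基金\U0001F600"  # ends with an emoji (U+1F600), which GBK cannot encode

# errors='ignore' drops unencodable characters without any warning
encoded = text.encode("gbk", errors="ignore")
print(encoded.decode("gbk"))  # 示例基金

# the default errors='strict' raises instead
try:
    text.encode("gbk")
except UnicodeEncodeError as exc:
    print("strict mode fails:", exc.reason)
```

If silently losing characters is a concern, `encoding='utf-8-sig'` keeps every character; the BOM it writes is commonly used so that Excel recognizes the file as UTF-8.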

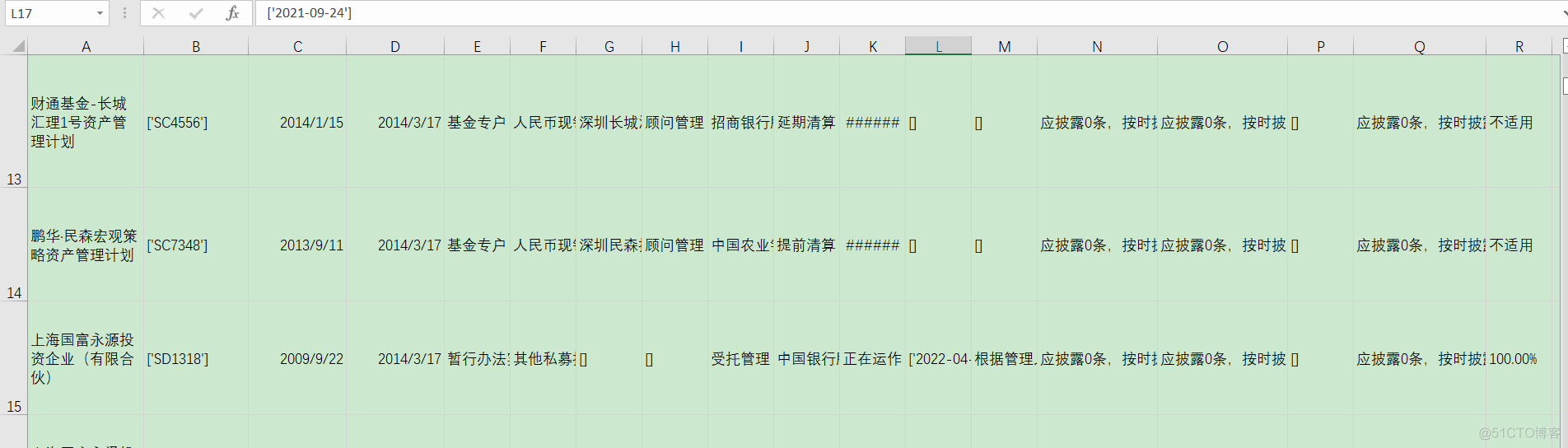

效果如下:

IV. Summary

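The design is not ideal and the code is somewhat redundant, but it already took a whole afternoon to write; interested readers are welcome to optimize it themselves.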
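One possible direction for that optimization, sketched under assumptions: collapse the repeated `if len(...) == 0 / else` blocks into a single helper that returns the cleaned first match, or an empty string when nothing matches (the original stores an empty list instead). `first_text` and `table_values` are hypothetical names, and the sample HTML is made up; in the real script the table prefix would be the long `/html/body/div[3]/div/div[2]/div[1]/div[2]/table/tbody/` path.

```python
from lxml import etree


def first_text(tree, path, repair=False):
    """Cleaned first text node matched by `path`, or '' when nothing matches.

    repair=True applies the ISO-8859-1 -> UTF-8 fix used in the full script.
    """
    hits = tree.xpath(path)
    if not hits:
        return ''
    text = hits[0].replace('\r\n', '').replace(' ', '').replace('\t', '')
    if repair:
        text = text.encode('ISO-8859-1').decode('utf-8')
    return text


def table_values(tree, table_prefix, n_rows):
    """Second-column value of rows 1..n_rows under `table_prefix`."""
    return [first_text(tree, f'{table_prefix}tr[{i}]/td[2]/text()') for i in range(1, n_rows + 1)]


# Made-up page standing in for a real detail page
page = etree.HTML(
    '<html><body><table><tbody>'
    '<tr><td>名称</td><td> 示例\t基金 </td></tr>'
    '<tr><td>编号</td><td>EXAMPLE01</td></tr>'
    '<tr><td>空</td><td></td></tr>'
    '</tbody></table></body></html>'
)
print(table_values(page, '//table/tbody/', 3))  # ['示例基金', 'EXAMPLE01', '']
```

Each `numN` block in the full script would then shrink to one call, for example `num9 = first_text(tree, '/html/body/div[3]/div/div[2]/div[1]/div[2]/table/tbody/tr[9]/td[2]/text()', repair=True)`.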