This article describes how to adjust Ceph's tree-shaped CRUSH hierarchy, using a rack failure domain as the worked example.

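Before starting, note that the same adjustment can also be made by exporting, editing, and re-importing the crushmap. That route takes only a few commands, but editing the map by hand demands rigorous logic and a solid grounding in Ceph.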

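Modifying the crushmap on a cluster:

1. Download the cluster's current crushmap: `ceph osd getcrushmap -o crushmap`
2. Decompile the crushmap: `crushtool -d crushmap -o real_crushmap`
3. Edit the decompiled crushmap; this is where the tree hierarchy and the rules are adjusted.
4. Compile the edited real_crushmap: `crushtool -c real_crushmap -o new_crushmap` (`-c` compiles, `-o` names the output file)
5. Import the compiled new_crushmap into the cluster: `ceph osd setcrushmap -i new_crushmap` (`-i` names the input file)

The rack failure-domain adjustment itself starts here and uses `ceph osd crush` commands rather than a hand-edited map.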
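For reference, the export/edit/import cycle listed above could be wrapped in a small script. This is a minimal dry-run sketch, not a vetted tool: the function name `crushmap_cycle`, the `RUN` switch, and the working directory are illustrative assumptions, and the `crushtool --test` call is an extra sanity check that is not part of the original steps.

```shell
#!/bin/sh
# Dry-run sketch of the crushmap export/edit/import cycle.
# RUN defaults to "echo", so every command is printed instead of executed;
# run with RUN= (empty) to execute against a real cluster.
RUN=${RUN-echo}

# $1 = working directory, $2 = id of a rule to test, $3 = replica count.
# Any failing step aborts the function before the map is injected.
crushmap_cycle() {
    dir=$1 rule=$2 reps=$3
    # 1. export the compiled map, 2. decompile it into editable text
    $RUN ceph osd getcrushmap -o "$dir/crushmap" || return
    $RUN crushtool -d "$dir/crushmap" -o "$dir/real_crushmap" || return
    # 3. edit the text map by hand (skipped in a dry run)
    [ -n "$RUN" ] || "${EDITOR:-vi}" "$dir/real_crushmap" || return
    # 4. compile the edited map
    $RUN crushtool -c "$dir/real_crushmap" -o "$dir/new_crushmap" || return
    # Extra check (assumption, not in the steps above): print any inputs
    # that the rule cannot map to the requested number of replicas.
    $RUN crushtool -i "$dir/new_crushmap" --test --show-bad-mappings \
        --rule "$rule" --num-rep "$reps" || return
    # 5. inject the new map into the cluster
    $RUN ceph osd setcrushmap -i "$dir/new_crushmap"
}

crushmap_cycle /tmp/crush-edit 0 3
```

Run as-is, the script only prints the five commands, which makes it easy to review them before executing anything.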

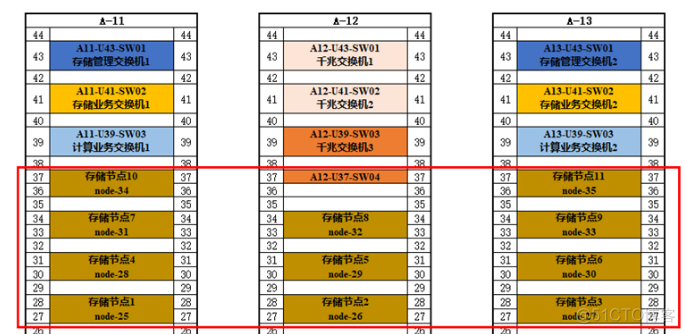

Rack-to-node mapping:
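The layout can be kept as data and expanded into the `ceph osd crush` commands that the procedure below types by hand. The following is a sketch under stated assumptions: the node lists are read off the `ceph osd crush move` commands used later in this article, and the helper names `layout` and `gen_rack_commands` are hypothetical. The script only prints commands; it does not run them.

```shell
#!/bin/sh
# Rack-to-node layout, one "rack node node ..." record per line.
# The node lists are taken from the move commands used in this article.
layout() {
    cat <<'EOF'
rackA-11 node-25 node-28 node-31 node-34
rackA-12 node-26 node-29 node-32
rackA-13 node-27 node-30 node-33 node-35
EOF
}

# Print (do not run) the add-bucket and move commands for one CRUSH root.
# $1 = root name, $2 = suffix for rack names, $3 = suffix for host names.
gen_rack_commands() {
    root=$1 rack_suffix=$2 host_suffix=$3
    layout | while read -r rack nodes; do
        echo "ceph osd crush add-bucket ${rack}${rack_suffix} rack"
        echo "ceph osd crush move ${rack}${rack_suffix} root=${root}"
        for node in $nodes; do
            echo "ceph osd crush move ${node}${host_suffix} rack=${rack}${rack_suffix}"
        done
    done
}

gen_rack_commands default "" ""
gen_rack_commands ssdpool -ssdpool _ssdpool
```

Keeping the layout in one place means a node added to a rack changes a single line, and the printed commands can be reviewed before they are piped to a shell.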

Procedure

Step 1. Run `ceph -s` and confirm that the storage cluster is healthy.

    ceph -s
      cluster:
        id:     991ffe01-8ef5-42dd-bf2e-ae885fc77555
        health: HEALTH_OK
      services:
        mon:        3 daemons, quorum node-1,node-2,node-3
        mgr:        node-1(active), standbys: node-2, node-3
        osd:        99 osds: 99 up, 99 in
                    flags nodeep-scrub
        rbd-mirror: 1 daemon active
        rgw:        3 daemons active
      data:
        pools:   12 pools, 3904 pgs
        objects: 87.52k objects, 320GiB
        usage:   1.04TiB used, 643TiB / 644TiB avail
        pgs:     3904 active+clean
      io:
        client:   37.2KiB/s rd, 1.30MiB/s wr, 27op/s rd, 120op/s wr

Step 2. Run `ceph osd tree` and record the hierarchy before the change, along with the storage pools and related information.

    ceph osd tree
    ID  CLASS WEIGHT    TYPE NAME                STATUS REWEIGHT PRI-AFF
     -4         3.39185 root ssdpool
    -67         0.30835     host node-25_ssdpool
     98 hdd     0.30835         osd.98           up     1.00000  1.00000
    -46         0.30835     host node-26_ssdpool
      8 hdd     0.30835         osd.8            up     1.00000  1.00000
    -37         0.30835     host node-27_ssdpool
     17 hdd     0.30835         osd.17           up     1.00000  1.00000
    -58         0.30835     host node-28_ssdpool
     26 hdd     0.30835         osd.26           up     1.00000  1.00000
    -55         0.30835     host node-29_ssdpool
     35 hdd     0.30835         osd.35           up     1.00000  1.00000
    -61         0.30835     host node-30_ssdpool
     44 hdd     0.30835         osd.44           up     1.00000  1.00000
    -41         0.30835     host node-31_ssdpool
     53 hdd     0.30835         osd.53           up     1.00000  1.00000
    -50         0.30835     host node-32_ssdpool
     62 hdd     0.30835         osd.62           up     1.00000  1.00000
     -6         0.30835     host node-33_ssdpool
     71 hdd     0.30835         osd.71           up     1.00000  1.00000
    -49         0.30835     host node-34_ssdpool
     80 hdd     0.30835         osd.80           up     1.00000  1.00000
    -64         0.30835     host node-35_ssdpool
     89 hdd     0.30835         osd.89           up     1.00000  1.00000
     -1       642.12769 root default
    -70        58.37524     host node-25
     90 ssd     7.29691         osd.90           up     1.00000  1.00000
     91 ssd     7.29691         osd.91           up     1.00000  1.00000
     92 ssd     7.29691         osd.92           up     1.00000  1.00000
     93 ssd     7.29691         osd.93           up     1.00000  1.00000
     94 ssd     7.29691         osd.94           up     1.00000  1.00000
     95 ssd     7.29691         osd.95           up     1.00000  1.00000
     96 ssd     7.29691         osd.96           up     1.00000  1.00000
     97 ssd     7.29691         osd.97           up     1.00000  1.00000
    -12        58.37524     host node-26
      0 ssd     7.29691         osd.0            up     1.00000  1.00000
      1 ssd     7.29691         osd.1            up     1.00000  1.00000
      2 ssd     7.29691         osd.2            up     1.00000  1.00000
      3 ssd     7.29691         osd.3            up     1.00000  1.00000
      4 ssd     7.29691         osd.4            up     1.00000  1.00000
      5 ssd     7.29691         osd.5            up     1.00000  1.00000
      6 ssd     7.29691         osd.6            up     1.00000  1.00000
      7 ssd     7.29691         osd.7            up     1.00000  1.00000
     -5        58.37524     host node-27
      9 ssd     7.29691         osd.9            up     1.00000  1.00000
     10 ssd     7.29691         osd.10           up     1.00000  1.00000
     11 ssd     7.29691         osd.11           up     1.00000  1.00000
     12 ssd     7.29691         osd.12           up     1.00000  1.00000
     13 ssd     7.29691         osd.13           up     1.00000  1.00000
     14 ssd     7.29691         osd.14           up     1.00000  1.00000
     15 ssd     7.29691         osd.15           up     1.00000  1.00000
     16 ssd     7.29691         osd.16           up     1.00000  1.00000
     -3        58.37524     host node-28
     18 ssd     7.29691         osd.18           up     1.00000  1.00000
     19 ssd     7.29691         osd.19           up     1.00000  1.00000
     20 ssd     7.29691         osd.20           up     1.00000  1.00000
     21 ssd     7.29691         osd.21           up     1.00000  1.00000
     22 ssd     7.29691         osd.22           up     1.00000  1.00000
     23 ssd     7.29691         osd.23           up     1.00000  1.00000
     24 ssd     7.29691         osd.24           up     1.00000  1.00000
     25 ssd     7.29691         osd.25           up     1.00000  1.00000
    -11        58.37524     host node-29
     27 ssd     7.29691         osd.27           up     1.00000  1.00000
     28 ssd     7.29691         osd.28           up     1.00000  1.00000
     29 ssd     7.29691         osd.29           up     1.00000  1.00000
     30 ssd     7.29691         osd.30           up     1.00000  1.00000
     31 ssd     7.29691         osd.31           up     1.00000  1.00000
     32 ssd     7.29691         osd.32           up     1.00000  1.00000
     33 ssd     7.29691         osd.33           up     1.00000  1.00000
     34 ssd     7.29691         osd.34           up     1.00000  1.00000
    -10        58.37524     host node-30
     36 ssd     7.29691         osd.36           up     1.00000  1.00000
     37 ssd     7.29691         osd.37           up     1.00000  1.00000
     38 ssd     7.29691         osd.38           up     1.00000  1.00000
     39 ssd     7.29691         osd.39           up     1.00000  1.00000
     40 ssd     7.29691         osd.40           up     1.00000  1.00000
     41 ssd     7.29691         osd.41           up     1.00000  1.00000
     42 ssd     7.29691         osd.42           up     1.00000  1.00000
     43 ssd     7.29691         osd.43           up     1.00000  1.00000
     -9        58.37524     host node-31
     45 ssd     7.29691         osd.45           up     1.00000  1.00000
     46 ssd     7.29691         osd.46           up     1.00000  1.00000
     47 ssd     7.29691         osd.47           up     1.00000  1.00000
     48 ssd     7.29691         osd.48           up     1.00000  1.00000
     49 ssd     7.29691         osd.49           up     1.00000  1.00000
     50 ssd     7.29691         osd.50           up     1.00000  1.00000
     51 ssd     7.29691         osd.51           up     1.00000  1.00000
     52 ssd     7.29691         osd.52           up     1.00000  1.00000
     -8        58.37524     host node-32
     54 ssd     7.29691         osd.54           up     1.00000  1.00000
     55 ssd     7.29691         osd.55           up     1.00000  1.00000
     56 ssd     7.29691         osd.56           up     1.00000  1.00000
     57 ssd     7.29691         osd.57           up     1.00000  1.00000
     58 ssd     7.29691         osd.58           up     1.00000  1.00000
     59 ssd     7.29691         osd.59           up     1.00000  1.00000
     60 ssd     7.29691         osd.60           up     1.00000  1.00000
     61 ssd     7.29691         osd.61           up     1.00000  1.00000
    -40        58.37524     host node-33
     63 ssd     7.29691         osd.63           up     1.00000  1.00000
     64 ssd     7.29691         osd.64           up     1.00000  1.00000
     65 ssd     7.29691         osd.65           up     1.00000  1.00000
     66 ssd     7.29691         osd.66           up     1.00000  1.00000
     67 ssd     7.29691         osd.67           up     1.00000  1.00000
     68 ssd     7.29691         osd.68           up     1.00000  1.00000
     69 ssd     7.29691         osd.69           up     1.00000  1.00000
     70 ssd     7.29691         osd.70           up     1.00000  1.00000
     -7        58.37524     host node-34
     72 ssd     7.29691         osd.72           up     1.00000  1.00000
     73 ssd     7.29691         osd.73           up     1.00000  1.00000
     74 ssd     7.29691         osd.74           up     1.00000  1.00000
     75 ssd     7.29691         osd.75           up     1.00000  1.00000
     76 ssd     7.29691         osd.76           up     1.00000  1.00000
     77 ssd     7.29691         osd.77           up     1.00000  1.00000
     78 ssd     7.29691         osd.78           up     1.00000  1.00000
     79 ssd     7.29691         osd.79           up     1.00000  1.00000
     -2        58.37524     host node-35
     81 ssd     7.29691         osd.81           up     1.00000  1.00000
     82 ssd     7.29691         osd.82           up     1.00000  1.00000
     83 ssd     7.29691         osd.83           up     1.00000  1.00000
     84 ssd     7.29691         osd.84           up     1.00000  1.00000
     85 ssd     7.29691         osd.85           up     1.00000  1.00000
     86 ssd     7.29691         osd.86           up     1.00000  1.00000
     87 ssd     7.29691         osd.87           up     1.00000  1.00000
     88 ssd     7.29691         osd.88           up     1.00000  1.00000

Then check the pools' rules and other details:

    ceph osd pool ls detail
    pool 1 '.rgw.root' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 826 lfor 0/825 flags hashpspool stripe_width 0 application rgw
    pool 2 'volumes' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 2048 pgp_num 2048 last_change 1347 lfor 0/326 flags hashpspool stripe_width 0 application cinder-volume
            removed_snaps [1~21]
    pool 3 'rbd' replicated size 3 min_size 1 crush_rule 0 object_hash rjenkins pg_num 64 pgp_num 64 last_change 726 flags hashpspool stripe_width 0
            removed_snaps [1~9]
    pool 4 'compute' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 512 pgp_num 512 last_change 1361 lfor 0/323 flags hashpspool stripe_width 0
            removed_snaps [1~4,6~e,15~1,1c~6]
    pool 5 'ssdpool' replicated size 3 min_size 1 crush_rule 1 object_hash rjenkins pg_num 256 pgp_num 256 last_change 45 flags hashpspool stripe_width 0
            removed_snaps [1~3]
    pool 6 'default.rgw.control' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 897 lfor 0/896 flags hashpspool stripe_width 0 application rgw
    pool 7 'default.rgw.meta' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 904 lfor 0/903 flags hashpspool stripe_width 0 application rgw
    pool 8 'default.rgw.log' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 923 lfor 0/922 flags hashpspool stripe_width 0 application rgw
    pool 9 'backups' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 930 lfor 0/929 flags hashpspool stripe_width 0 application cinder-backup
            removed_snaps [1~3]
    pool 10 'metrics' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 937 lfor 0/936 flags hashpspool stripe_width 0
    pool 11 'default.rgw.buckets.index' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 944 lfor 0/943 flags hashpspool stripe_width 0 application rgw
    pool 12 'default.rgw.buckets.data' replicated size 3 min_size 2 crush_rule 0 object_hash rjenkins pg_num 128 pgp_num 128 last_change 947 lfor 0/946 flags hashpspool stripe_width 0 application rgw

Step 3. Back up the cluster's crushmap file.

    ceph osd getcrushmap -o crushmap.bak

This file can be used to restore the original crushmap.

Step 4. Add the rack-type buckets.

    ceph osd crush add-bucket rackA-11 rack
    ceph osd crush add-bucket rackA-12 rack
    ceph osd crush add-bucket rackA-13 rack
    ceph osd crush add-bucket rackA-11-ssdpool rack
    ceph osd crush add-bucket rackA-12-ssdpool rack
    ceph osd crush add-bucket rackA-13-ssdpool rack

Step 5. Move the rack buckets under the default and ssdpool root buckets respectively.

    ceph osd crush move rackA-11 root=default
    ceph osd crush move rackA-12 root=default
    ceph osd crush move rackA-13 root=default
    ceph osd crush move rackA-11-ssdpool root=ssdpool
    ceph osd crush move rackA-12-ssdpool root=ssdpool
    ceph osd crush move rackA-13-ssdpool root=ssdpool

Step 6. Move each node into its rack, following the physical distribution of nodes across the racks:

    ceph osd crush move node-25 rack=rackA-11
    ceph osd crush move node-28 rack=rackA-11
    ceph osd crush move node-31 rack=rackA-11
    ceph osd crush move node-34 rack=rackA-11
    ceph osd crush move node-26 rack=rackA-12
    ceph osd crush move node-29 rack=rackA-12
    ceph osd crush move node-32 rack=rackA-12
    ceph osd crush move node-27 rack=rackA-13
    ceph osd crush move node-30 rack=rackA-13
    ceph osd crush move node-33 rack=rackA-13
    ceph osd crush move node-35 rack=rackA-13
    ceph osd crush move node-25_ssdpool rack=rackA-11-ssdpool
    ceph osd crush move node-28_ssdpool rack=rackA-11-ssdpool
    ceph osd crush move node-31_ssdpool rack=rackA-11-ssdpool
    ceph osd crush move node-34_ssdpool rack=rackA-11-ssdpool
    ceph osd crush move node-26_ssdpool rack=rackA-12-ssdpool
    ceph osd crush move node-29_ssdpool rack=rackA-12-ssdpool
    ceph osd crush move node-32_ssdpool rack=rackA-12-ssdpool
    ceph osd crush move node-27_ssdpool rack=rackA-13-ssdpool
    ceph osd crush move node-30_ssdpool rack=rackA-13-ssdpool
    ceph osd crush move node-33_ssdpool rack=rackA-13-ssdpool
    ceph osd crush move node-35_ssdpool rack=rackA-13-ssdpool

Run `ceph osd tree` and check that the cluster hierarchy matches expectations.

Step 7. Create the rules replicated_rule_rack and replicated_rule_rack_ssdpool.

replicated_rule_rack: the rule with rack as the failure domain, for the OSDs that have a cache.

replicated_rule_rack_ssdpool: the rule with rack as the failure domain, for the OSDs built on plain SSD partitions.

    ceph osd crush rule create-replicated {name} {root} {failure-domain-type}

Here {root} is the name of the node under which data should be placed, that is, the name of the root bucket, for example default.

    ceph osd crush rule create-replicated replicated_rule_rack default rack
    ceph osd crush rule create-replicated replicated_rule_rack_ssdpool ssdpool rack

Note: the following commands help verify that the new rules match expectations. `ceph osd crush rule ls` lists the rules in the cluster, and `ceph osd crush rule dump xxx` shows the details of the rule named xxx.

    [root@node-4 ~]# ceph osd crush rule ls
    replicated_rule
    replicated_rule_ssdpool
    replicated_rule_rack
    replicated_rule_rack_ssdpool
    [root@node-4 ~]# ceph osd crush rule dump replicated_rule_rack
    {
        "rule_id": 2,
        "rule_name": "replicated_rule_rack",
        "ruleset": 2,
        "type": 1,
        "min_size": 1,
        "max_size": 10,
        "steps": [
            {
                "op": "take",
                "item": -1,
                "item_name": "default"
            },
            {
                "op": "chooseleaf_firstn",
                "num": 0,
                "type": "rack"
            },
            {
                "op": "emit"
            }
        ]
    }
    [root@node-4 ~]# ceph osd crush rule dump replicated_rule_rack_ssdpool
    {
        "rule_id": 3,
        "rule_name": "replicated_rule_rack_ssdpool",
        "ruleset": 3,
        "type": 1,
        "min_size": 1,
        "max_size": 10,
        "steps": [
            {
                "op": "take",
                "item": -4,
                "item_name": "ssdpool"
            },
            {
                "op": "chooseleaf_firstn",
                "num": 0,
                "type": "rack"
            },
            {
                "op": "emit"
            }
        ]
    }

Step 8. Apply the rules to the storage pools. First apply the replicated_rule_rack rule created in the previous step to every pool except ssdpool:

    for i in $(rados lspools | grep -v ssdpool); do ceph osd pool set "$i" crush_rule replicated_rule_rack; done

Then apply the replicated_rule_rack_ssdpool rule created in the previous step to the ssdpool pool:

    ceph osd pool set ssdpool crush_rule replicated_rule_rack_ssdpool

Step 9. Verify the pool rules: check the crush_rule value (the rule id) of every pool and make sure it matches expectations.

    [root@node-4 ~]# ceph osd pool ls detail
    pool 1 '.rgw.root' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1479 lfor 0/825 flags hashpspool stripe_width 0 application rgw
    pool 2 'volumes' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 2048 pgp_num 2048 last_change 1480 lfor 0/326 flags hashpspool stripe_width 0 application cinder-volume
            removed_snaps [1~21]
    pool 3 'rbd' replicated size 3 min_size 1 crush_rule 2 object_hash rjenkins pg_num 64 pgp_num 64 last_change 1482 flags hashpspool stripe_width 0
            removed_snaps [1~9]
    pool 4 'compute' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 512 pgp_num 512 last_change 1483 lfor 0/323 flags hashpspool stripe_width 0
            removed_snaps [1~4,6~e,15~1,1a~1,1c~6,2f~1]
    pool 5 'ssdpool' replicated size 3 min_size 1 crush_rule 3 object_hash rjenkins pg_num 256 pgp_num 256 last_change 1492 flags hashpspool stripe_width 0
            removed_snaps [1~3]
    pool 6 'default.rgw.control' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1484 lfor 0/896 flags hashpspool stripe_width 0 application rgw
    pool 7 'default.rgw.meta' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1485 lfor 0/903 flags hashpspool stripe_width 0 application rgw
    pool 8 'default.rgw.log' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1486 lfor 0/922 flags hashpspool stripe_width 0 application rgw
    pool 9 'backups' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1487 lfor 0/929 flags hashpspool stripe_width 0 application cinder-backup
            removed_snaps [1~3]
    pool 10 'metrics' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1488 lfor 0/936 flags hashpspool stripe_width 0
    pool 11 'default.rgw.buckets.index' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1489 lfor 0/943 flags hashpspool stripe_width 0 application rgw
    pool 12 'default.rgw.buckets.data' replicated size 3 min_size 2 crush_rule 2 object_hash rjenkins pg_num 128 pgp_num 128 last_change 1490 lfor 0/946 flags hashpspool stripe_width 0 application rgw

Step 10. Run `ceph -s` and wait for the cluster to return to a healthy state, repeating the check from step 1, and confirm that the virtual machine workloads are running normally.

Step 11. If necessary, have the customer assist in powering off a rack to simulate a failure. Expected result: powering off more than two hosts within a single rack does not affect service.

Step 12. The change is complete.
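The checks in steps 9 and 10 lend themselves to scripting. The sketch below is one possible shape, assuming the rule ids shown in this article (2 for replicated_rule_rack, 3 for replicated_rule_rack_ssdpool); the helper names `pools_off_rule` and `wait_for_health_ok` are hypothetical and not part of Ceph.

```shell
#!/bin/sh
# Sketch only: the helper names below are hypothetical, not Ceph commands.

# Read `ceph osd pool ls detail` output on stdin and print the name of every
# pool whose crush_rule id differs from the expected id given as $1.
pools_off_rule() {
    awk -v want="$1" -v q="'" '
        $1 == "pool" {
            for (i = 1; i < NF; i++)
                if ($i == "crush_rule" && $(i + 1) != want) {
                    name = $3
                    gsub(q, "", name)   # drop the quotes around the pool name
                    print name
                }
        }'
}

# Poll `ceph health` until its output starts with HEALTH_OK.
# $1 is the delay between polls in seconds (default 30).
wait_for_health_ok() {
    until ceph health | grep -q '^HEALTH_OK'; do
        sleep "${1:-30}"
    done
}
```

On the cluster in this article, `ceph osd pool ls detail | pools_off_rule 2` should print only ssdpool, which is expected to use rule 3. `wait_for_health_ok` has no timeout, so a real change script would probably want one.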

Appendix: `ceph osd tree` output after the change:

    [root@node-4 ~]# ceph osd tree
    ID  CLASS WEIGHT    TYPE NAME                    STATUS REWEIGHT PRI-AFF
     -4         3.39185 root ssdpool
    -76         1.23340     rack rackA-11-ssdpool
    -67         0.30835         host node-25_ssdpool
     98 hdd     0.30835             osd.98           up     1.00000  1.00000
    -58         0.30835         host node-28_ssdpool
     26 hdd     0.30835             osd.26           up     1.00000  1.00000
    -41         0.30835         host node-31_ssdpool
     53 hdd     0.30835             osd.53           up     1.00000  1.00000
    -49         0.30835         host node-34_ssdpool
     80 hdd     0.30835             osd.80           up     1.00000  1.00000
    -77         0.92505     rack rackA-12-ssdpool
    -46         0.30835         host node-26_ssdpool
      8 hdd     0.30835             osd.8            up     1.00000  1.00000
    -55         0.30835         host node-29_ssdpool
     35 hdd     0.30835             osd.35           up     1.00000  1.00000
    -50         0.30835         host node-32_ssdpool
     62 hdd     0.30835             osd.62           up     1.00000  1.00000
    -78         1.23340     rack rackA-13-ssdpool
    -37         0.30835         host node-27_ssdpool
     17 hdd     0.30835             osd.17           up     1.00000  1.00000
    -61         0.30835         host node-30_ssdpool
     44 hdd     0.30835             osd.44           up     1.00000  1.00000
     -6         0.30835         host node-33_ssdpool
     71 hdd     0.30835             osd.71           up     1.00000  1.00000
    -64         0.30835         host node-35_ssdpool
     89 hdd     0.30835             osd.89           up     1.00000  1.00000
     -1       642.12769 root default
    -73       233.50098     rack rackA-11
    -70        58.37524         host node-25
     90 ssd     7.29691             osd.90           up     1.00000  1.00000
     91 ssd     7.29691             osd.91           up     1.00000  1.00000
     92 ssd     7.29691             osd.92           up     1.00000  1.00000
     93 ssd     7.29691             osd.93           up     1.00000  1.00000
     94 ssd     7.29691             osd.94           up     1.00000  1.00000
     95 ssd     7.29691             osd.95           up     1.00000  1.00000
     96 ssd     7.29691             osd.96           up     1.00000  1.00000
     97 ssd     7.29691             osd.97           up     1.00000  1.00000
     -3        58.37524         host node-28
     18 ssd     7.29691             osd.18           up     1.00000  1.00000
     19 ssd     7.29691             osd.19           up     1.00000  1.00000
     20 ssd     7.29691             osd.20           up     1.00000  1.00000
     21 ssd     7.29691             osd.21           up     1.00000  1.00000
     22 ssd     7.29691             osd.22           up     1.00000  1.00000
     23 ssd     7.29691             osd.23           up     1.00000  1.00000
     24 ssd     7.29691             osd.24           up     1.00000  1.00000
     25 ssd     7.29691             osd.25           up     1.00000  1.00000
     -9        58.37524         host node-31
     45 ssd     7.29691             osd.45           up     1.00000  1.00000
     46 ssd     7.29691             osd.46           up     1.00000  1.00000
     47 ssd     7.29691             osd.47           up     1.00000  1.00000
     48 ssd     7.29691             osd.48           up     1.00000  1.00000
     49 ssd     7.29691             osd.49           up     1.00000  1.00000
     50 ssd     7.29691             osd.50           up     1.00000  1.00000
     51 ssd     7.29691             osd.51           up     1.00000  1.00000
     52 ssd     7.29691             osd.52           up     1.00000  1.00000
     -7        58.37524         host node-34
     72 ssd     7.29691             osd.72           up     1.00000  1.00000
     73 ssd     7.29691             osd.73           up     1.00000  1.00000
     74 ssd     7.29691             osd.74           up     1.00000  1.00000
     75 ssd     7.29691             osd.75           up     1.00000  1.00000
     76 ssd     7.29691             osd.76           up     1.00000  1.00000
     77 ssd     7.29691             osd.77           up     1.00000  1.00000
     78 ssd     7.29691             osd.78           up     1.00000  1.00000
     79 ssd     7.29691             osd.79           up     1.00000  1.00000
    -74       175.12573     rack rackA-12
    -12        58.37524         host node-26
      0 ssd     7.29691             osd.0            up     1.00000  1.00000
      1 ssd     7.29691             osd.1            up     1.00000  1.00000
      2 ssd     7.29691             osd.2            up     1.00000  1.00000
      3 ssd     7.29691             osd.3            up     1.00000  1.00000
      4 ssd     7.29691             osd.4            up     1.00000  1.00000
      5 ssd     7.29691             osd.5            up     1.00000  1.00000
      6 ssd     7.29691             osd.6            up     1.00000  1.00000
      7 ssd     7.29691             osd.7            up     1.00000  1.00000
    -11        58.37524         host node-29
     27 ssd     7.29691             osd.27           up     1.00000  1.00000
     28 ssd     7.29691             osd.28           up     1.00000  1.00000
     29 ssd     7.29691             osd.29           up     1.00000  1.00000
     30 ssd     7.29691             osd.30           up     1.00000  1.00000
     31 ssd     7.29691             osd.31           up     1.00000  1.00000
     32 ssd     7.29691             osd.32           up     1.00000  1.00000
     33 ssd     7.29691             osd.33           up     1.00000  1.00000
     34 ssd     7.29691             osd.34           up     1.00000  1.00000
     -8        58.37524         host node-32
     54 ssd     7.29691             osd.54           up     1.00000  1.00000
     55 ssd     7.29691             osd.55           up     1.00000  1.00000
     56 ssd     7.29691             osd.56           up     1.00000  1.00000
     57 ssd     7.29691             osd.57           up     1.00000  1.00000
     58 ssd     7.29691             osd.58           up     1.00000  1.00000
     59 ssd     7.29691             osd.59           up     1.00000  1.00000
     60 ssd     7.29691             osd.60           up     1.00000  1.00000
     61 ssd     7.29691             osd.61           up     1.00000  1.00000
    -75       233.50098     rack rackA-13
     -5        58.37524         host node-27
      9 ssd     7.29691             osd.9            up     1.00000  1.00000
     10 ssd     7.29691             osd.10           up     1.00000  1.00000
     11 ssd     7.29691             osd.11           up     1.00000  1.00000
     12 ssd     7.29691             osd.12           up     1.00000  1.00000
     13 ssd     7.29691             osd.13           up     1.00000  1.00000
     14 ssd     7.29691             osd.14           up     1.00000  1.00000
     15 ssd     7.29691             osd.15           up     1.00000  1.00000
     16 ssd     7.29691             osd.16           up     1.00000  1.00000
    -10        58.37524         host node-30
     36 ssd     7.29691             osd.36           up     1.00000  1.00000
     37 ssd     7.29691             osd.37           up     1.00000  1.00000
     38 ssd     7.29691             osd.38           up     1.00000  1.00000
     39 ssd     7.29691             osd.39           up     1.00000  1.00000
     40 ssd     7.29691             osd.40           up     1.00000  1.00000
     41 ssd     7.29691             osd.41           up     1.00000  1.00000
     42 ssd     7.29691             osd.42           up     1.00000  1.00000
     43 ssd     7.29691             osd.43           up     1.00000  1.00000
    -40        58.37524         host node-33
     63 ssd     7.29691             osd.63           up     1.00000  1.00000
     64 ssd     7.29691             osd.64           up     1.00000  1.00000
     65 ssd     7.29691             osd.65           up     1.00000  1.00000
     66 ssd     7.29691             osd.66           up     1.00000  1.00000
     67 ssd     7.29691             osd.67           up     1.00000  1.00000
     68 ssd     7.29691             osd.68           up     1.00000  1.00000
     69 ssd     7.29691             osd.69           up     1.00000  1.00000
     70 ssd     7.29691             osd.70           up     1.00000  1.00000
     -2        58.37524         host node-35
     81 ssd     7.29691             osd.81           up     1.00000  1.00000
     82 ssd     7.29691             osd.82           up     1.00000  1.00000
     83 ssd     7.29691             osd.83           up     1.00000  1.00000
     84 ssd     7.29691             osd.84           up     1.00000  1.00000
     85 ssd     7.29691             osd.85           up     1.00000  1.00000
     86 ssd     7.29691             osd.86           up     1.00000  1.00000
     87 ssd     7.29691             osd.87           up     1.00000  1.00000
     88 ssd     7.29691             osd.88           up     1.00000  1.00000
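A tree of this size is tedious to check line by line. As a rough aid, the sketch below condenses `ceph osd tree` output into one line per rack, with host and OSD counts. It assumes the plain-text column layout shown in this appendix (bucket rows carry the type in the third field, OSD rows carry `osd.N` in the fourth); the function name `rack_summary` is hypothetical.

```shell
#!/bin/sh
# Summarise `ceph osd tree` text output read from stdin:
# one line per rack with the number of hosts and OSDs beneath it.
rack_summary() {
    awk '
        $3 == "rack" { rack = $4; order[++n] = rack }   # entering a rack
        $3 == "root" { rack = "" }                      # a new root resets it
        $3 == "host" && rack != "" { hosts[rack]++ }
        $4 ~ /^osd\./ && rack != "" { osds[rack]++ }
        END {
            for (i = 1; i <= n; i++)
                printf "%s hosts=%d osds=%d\n", order[i], hosts[order[i]], osds[order[i]]
        }'
}
```

A typical call would be `ceph osd tree | rack_summary`. For the tree above, the expected result is 4, 3, and 4 hosts for rackA-11, rackA-12, and rackA-13 under each root, which matches the node moves in step 6.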