前言 我们在学习机器学习相关内容时,一般是不需要我们自己去爬取数据的,因为很多的算法学习很友好的帮助我们打包好了相关数据,但是这并不代表我们不需要进行学习和了解相关

我们在学习机器学习相关内容时,一般是不需要我们自己去爬取数据的,因为很多的算法学习很友好的帮助我们打包好了相关数据,但是这并不代表我们不需要进行学习和了解相关知识。在这里我们了解三种数据的爬取:鲜花/明星图像的爬取、中国艺人图像的爬取、股票数据的爬取。分别对着三种爬虫进行学习和使用。

- 体会

个人感觉爬虫的难点就是URL的获取,URL的获取与自身的经验有关,这点我也很难把握,一般URL获取是通过访问该网站通过抓包进行分析获取的。一般也不一定需要抓包工具,通过浏览器的开发者工具(F12/Fn+F12)即可进行获取。

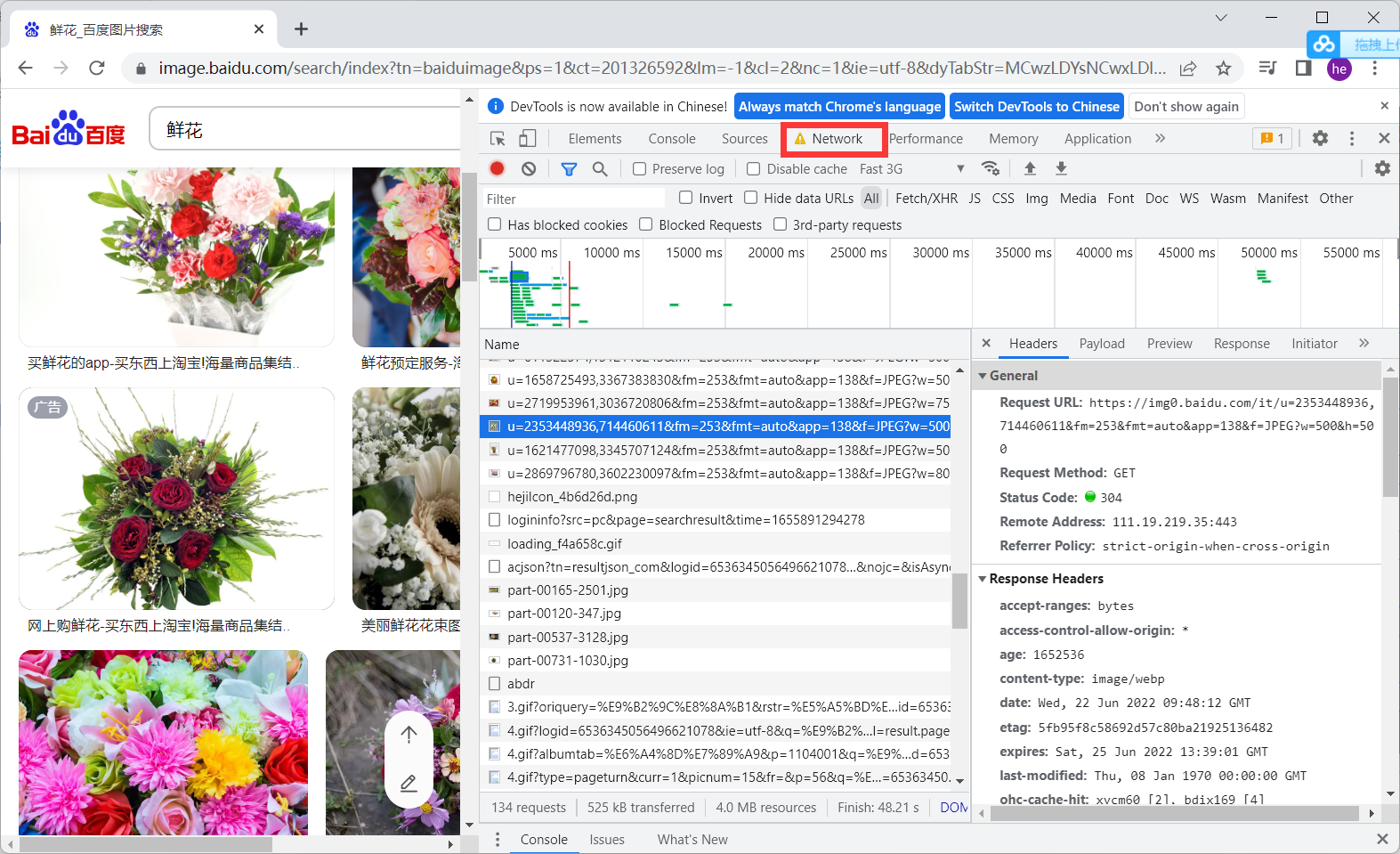

- 百度搜索鲜花关键词,并打开开发者工具,点击NrtWork

-

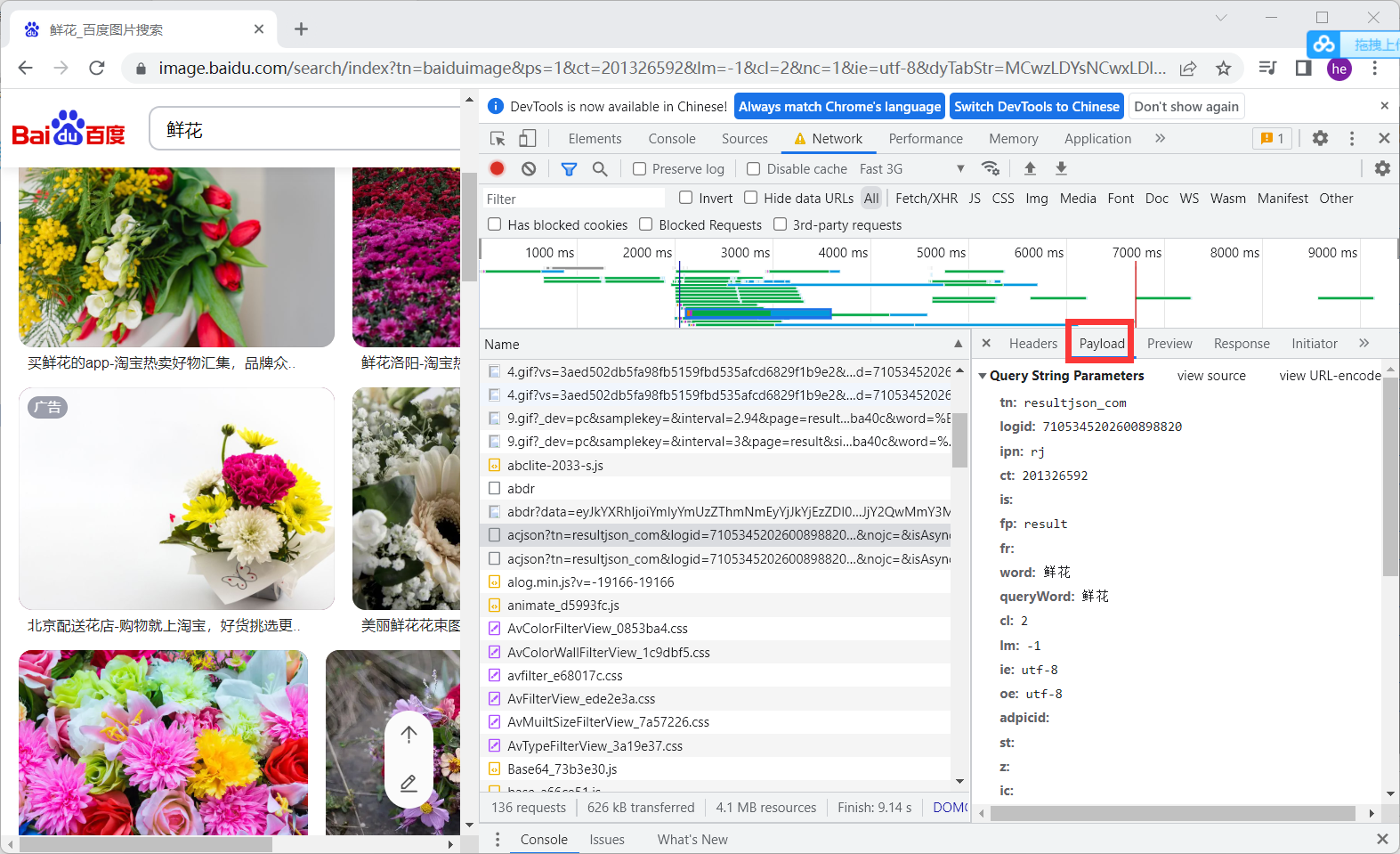

找到数据包进行分析,分析重要参数

- pn 表示第几张图片加载

- rn 表示加载多少图片

-

查看返回值进行分析,可以看到图片体制在ThumbURL中

-

http://image.baidu.com/search/acjson? 百度图片地址

-

拼接tn 进行访问可以得到每个图片的URL,在返回数据的thumbURL中

https://image.baidu.com/search/acjson?+tn -

进行分离图片的URL然后访问下载

import requests

import os

import urllib

class GetImage():

def __init__(self,keyword='鲜花',paginator=1):

self.url = 'http://image.baidu.com/search/acjson?'

self.headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/102.0.0.0 Safari/537.36'

}

self.keyword = keyword

self.paginator = paginator

def get_param(self):

keyword = urllib.parse.quote(self.keyword)

params = []

for i in range(1,self.paginator+1):

params.append(

'tn=resultjson_com&logid=10338332981203604364&ipn=rj&ct=201326592&is=&fp=result&fr=&word={}&queryWord={}&cl=2&lm=-1&ie=utf-8&oe=utf-8&adpicid=&st=&z=&ic=&hd=&latest=©right=&s=&se=&tab=&width=&height=&face=&istype=&qc=&nc=1&expermode=&nojc=&isAsync=&pn={}&rn=30&gsm=78&1650241802208='.format(keyword,keyword,30*i)

)

return params

def get_urls(self,params):

urls = []

for param in params:

urls.append(self.url+param)

return urls

def get_image_url(self,urls):

image_url = []

for url in urls:

json_data = requests.get(url,headers = self.headers).json()

json_data = json_data.get('data')

for i in json_data:

if i:

image_url.append(i.get('thumbURL'))

return image_url

def get_image(self,image_url):

##根据图片url,存入图片

file_name = os.path.join("", self.keyword)

#print(file_name)

if not os.path.exists(file_name):

os.makedirs(file_name)

for index,url in enumerate(image_url,start=1):

with open(file_name+'/{}.jpg'.format(index),'wb') as f:

f.write(requests.get(url,headers=self.headers).content)

if index != 0 and index%30 == 0:

print("第{}页下载完成".format(index/30))

def __call__(self, *args, **kwargs):

params = self.get_param()

urls = self.get_urls(params)

image_url = self.get_image_url(urls)

self.get_image(image_url=image_url)

if __name__ == '__main__':

spider = GetImage('鲜花',3)

spider()

- 只需要把main函数里的关键字换一下就可以了,换成明星即可

if __name__ == '__main__':

spider = GetImage('明星',3)

spider()

- 同理的我们需要其他图片也可以换

if __name__ == '__main__':

spider = GetImage('动漫',3)

spider()

- 我们可以使用上面的爬取图片的方式,把关键词换为中国艺人也可以爬取图片

- 显然上面的方式可以满足我们部分需求,我们如果需要爬取不同艺人那么上面的方式就不是那么好了。

- 我们下载10个不同艺人的图片,然后用他们的名字命名图片名,再把他们存入picture文件内

import requests

import json

import os

import urllib

def getPicinfo(url):

headers = {

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:101.0) Gecko/20100101 Firefox/101.0',

}

response = requests.get(url,headers)

if response.status_code == 200:

return response.text

return None

Download_dir = 'picture'

if os.path.exists(Download_dir) == False:

os.mkdir(Download_dir)

pn_num = 1

rn_num = 10

for k in range(pn_num):

url = "https://sp0.baidu.com/8aQDcjqpAAV3otqbppnN2DJv/api.php?resource_id=28266&from_mid=500&format=json&ie=utf-8&oe=utf-8&query=%E4%B8%AD%E5%9B%BD%E8%89%BA%E4%BA%BA&sort_key=&sort_type=1&stat0=&stat1=&stat2=&stat3=&pn="+str(pn_num)+"&rn="+str(rn_num)+"&_=1580457480665"

res = getPicinfo(url)

json_str = json.loads(res)

figs = json_str['data'][0]['result']

for i in figs:

name = i['ename']

img_url = i['pic_4n_78']

img_res = requests.get(img_url)

if img_res.status_code == 200:

ext_str_splits = img_res.headers['Content-Type'].split('/')

ext = ext_str_splits[-1]

fname = name+'.'+ext

open(os.path.join(Download_dir,fname),'wb').write(img_res.content)

print(name,img_url,'saved')

我们对http://quote.eastmoney.com/center/gridlist.html 内的股票数据进行爬取,并且把数据储存下来

爬取代码# http://quote.eastmoney.com/center/gridlist.html

import requests

from fake_useragent import UserAgent

import json

import csv

import urllib.request as r

import threading

def getHtml(url):

r = requests.get(url, headers={

'User-Agent': UserAgent().random,

})

r.encoding = r.apparent_encoding

return r.text

# 爬取多少

num = 20

stockUrl = 'http://52.push2.eastmoney.com/api/qt/clist/get?cb=jQuery112409623798991171317_1654957180928&pn=1&pz=20&po=1&np=1&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&wbp2u=|0|0|0|web&fid=f3&fs=m:0+t:80&fields=f1,f2,f3,f4,f5,f6,f7,f8,f9,f10,f12,f13,f14,f15,f16,f17,f18,f20,f21,f23,f24,f25,f22,f11,f62,f128,f136,f115,f152&_=1654957180938'

if __name__ == '__main__':

responseText = getHtml(stockUrl)

jsonText = responseText.split("(")[1].split(")")[0];

resJson = json.loads(jsonText)

datas = resJson['data']['diff']

dataList = []

for data in datas:

row = [data['f12'],data['f14']]

dataList.append(row)

print(dataList)

f = open('stock.csv', 'w+', encoding='utf-8', newline="")

writer = csv.writer(f)

writer.writerow(("代码","名称"))

for data in dataList:

writer.writerow((data[0]+"\t",data[1]+"\t"))

f.close()

def getStockList():

stockList = []

f = open('stock.csv', 'r', encoding='utf-8')

f.seek(0)

reader = csv.reader(f)

for item in reader:

stockList.append(item)

f.close()

return stockList

def downloadFile(url,filepath):

try:

r.urlretrieve(url,filepath)

except Exception as e:

print(e)

print(filepath,"is downLoaded")

pass

sem = threading.Semaphore(1)

def dowmloadFileSem(url,filepath):

with sem:

downloadFile(url,filepath)

urlStart = 'http://quotes.money.163.com/service/chddata.html?code='

urlEnd = '&end=20210221&fields=TCLOSW;HIGH;TOPEN;LCLOSE;CHG;PCHG;VOTURNOVER;VATURNOVER'

if __name__ == '__main__':

stockList = getStockList()

stockList.pop(0)

print(stockList)

for s in stockList:

scode = str(s[0].split("\t")[0])

url = urlStart+("0" if scode.startswith('6') else '1')+ scode + urlEnd

print(url)

filepath = (str(s[1].split("\t")[0])+"_"+scode)+".csv"

threading.Thread(target=dowmloadFileSem,args=(url,filepath)).start()

有可能当时爬取的数据是脏数据,运行下面代码不一定能跑通,需要你自己处理数据还是其他方法

## 主要利用matplotlib进行图像绘制

import pandas as pd

import matplotlib.pyplot as plt

import csv

import 股票数据爬取 as gp

plt.rcParams['font.sans-serif'] = ['simhei'] #指定字体

plt.rcParams['axes.unicode_minus'] = False #显示-号

plt.rcParams['figure.dpi'] = 100 #每英寸点数

files = []

def read_file(file_name):

data = pd.read_csv(file_name,encoding='gbk')

col_name = data.columns.values

return data,col_name

def get_file_path():

stock_list = gp.getStockList()

paths = []

for stock in stock_list[1:]:

p = stock[1].strip()+"_"+stock[0].strip()+".csv"

print(p)

data,_=read_file(p)

if len(data)>1:

files.append(p)

print(p)

get_file_path()

print(files)

def get_diff(file_name):

data,col_name = read_file(file_name)

index = len(data['日期'])-1

sep = index//15

plt.figure(figsize=(15,17))

x = data['日期'].values.tolist()

x.reverse()

xticks = list(range(0,len(x),sep))

xlabels = [x[i] for i in xticks]

xticks.append(len(x))

y1 = [float(c) if c!='None' else 0 for c in data['涨跌额'].values.tolist()]

y2 = [float(c) if c != 'None' else 0 for c in data['涨跌幅'].values.tolist()]

y1.reverse()

y2.reverse()

ax1 = plt.subplot(211)

plt.plot(range(1,len(x)+1),y1,c='r')

plt.title('{}-涨跌额/涨跌幅'.format(file_name.split('_')[0]),fontsize = 20)

ax1.set_xticks(xticks)

ax1.set_xticklabels(xlabels,rotation = 40)

plt.ylabel('涨跌额')

ax2 = plt.subplot(212)

plt.plot(range(1, len(x) + 1), y1, c='g')

#plt.title('{}-涨跌额/涨跌幅'.format(file_name.splir('_')[0]), fontsize=20)

ax2.set_xticks(xticks)

ax2.set_xticklabels(xlabels, rotation=40)

plt.xlabel('日期')

plt.ylabel('涨跌额')

plt.show()

print(len(files))

for file in files:

get_diff(file)

上文描述了三个数据爬取的案例,不同的数据爬取需要我们对不同的URL进行获取,不同参数进行输入,URL如何组合、如何获取、这是数据爬取的难点,需要有一定的经验和基础。