
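Kubernetes 1.22.4 (latest version) binary deployment

Tags (space-separated): kubernetes series

[toc]

Part 1: System environment initialization

1.1 System environment

cat /etc/hosts

    172.16.10.11 flyfishsrvs01
    172.16.10.12 flyfishsrvs02
    172.16.10.13 flyfishsrvs03
    172.16.10.14 flyfishsrvs04
    172.16.10.15 flyfishsrvs05
    172.16.10.16 flyfishsrvs06
    172.16.10.17 flyfishsrvs07

Install a single-master cluster first; it is expanded to multiple masters later.

On every machine, disable firewalld and SELinux and flush the iptables firewall rules.

1.2 Upgrade the system kernel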

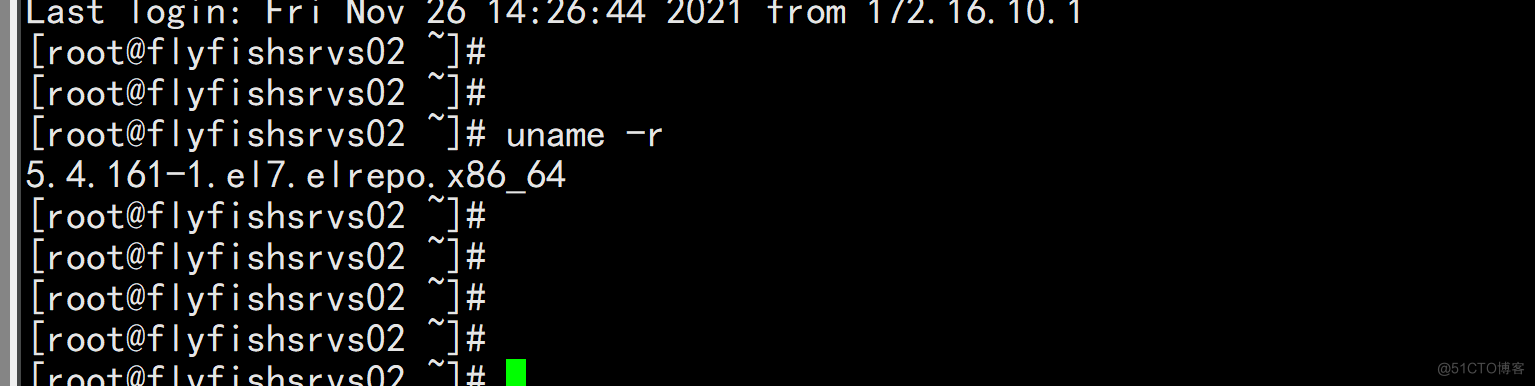

Upgrade the kernel on every machine.

    # Check the current kernel version
    uname -r
    uname -a
    cat /etc/redhat-release

    # Add the yum repositories
    mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup
    curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo
    curl -o /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo
    rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org
    yum install -y https://www.elrepo.org/elrepo-release-7.0-4.el7.elrepo.noarch.rpm

    # Update packages from the new repositories
    yum -y update

    # List the available kernel packages
    yum --disablerepo="*" --enablerepo="elrepo-kernel" list available

    # Install the kernel. Check the available kernels first; I installed the 5.4 kernel.
    yum --enablerepo=elrepo-kernel install kernel-lt

    # List the kernels currently in the boot menu
    awk -F\' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

    # Boot entry 0; 0 is the index of the new kernel in the list printed above
    grub2-set-default 0

    # Generate the grub configuration and reboot
    grub2-mkconfig -o /boot/grub2/grub.cfg
    reboot
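The index passed to `grub2-set-default` comes from the `awk` listing in the kernel upgrade steps. As a minimal sketch of what that listing prints, the snippet below runs the same `awk` program against a made-up grub config; the sample file and the kernel version strings in it are assumptions for illustration, not output from the machines in this guide.

```shell
#!/usr/bin/env bash
# list_menuentries FILE: number the menuentry titles in a grub config, starting at 0.
list_menuentries() {
  awk -F\' '$1=="menuentry " {print i++ " : " $2}' "$1"
}

# Made-up sample config for illustration only (kernel versions are assumptions).
sample_cfg="$(mktemp)"
cat > "$sample_cfg" << 'EOF'
menuentry 'CentOS Linux (5.4.160-1.el7.elrepo.x86_64) 7 (Core)' --class centos {
}
menuentry 'CentOS Linux (3.10.0-1160.el7.x86_64) 7 (Core)' --class centos {
}
EOF

list_menuentries "$sample_cfg"
rm -f "$sample_cfg"
```

The entry whose title shows the newly installed kernel is the one to select; in a layout like the sample, that is index 0.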

1.2 Environment configuration

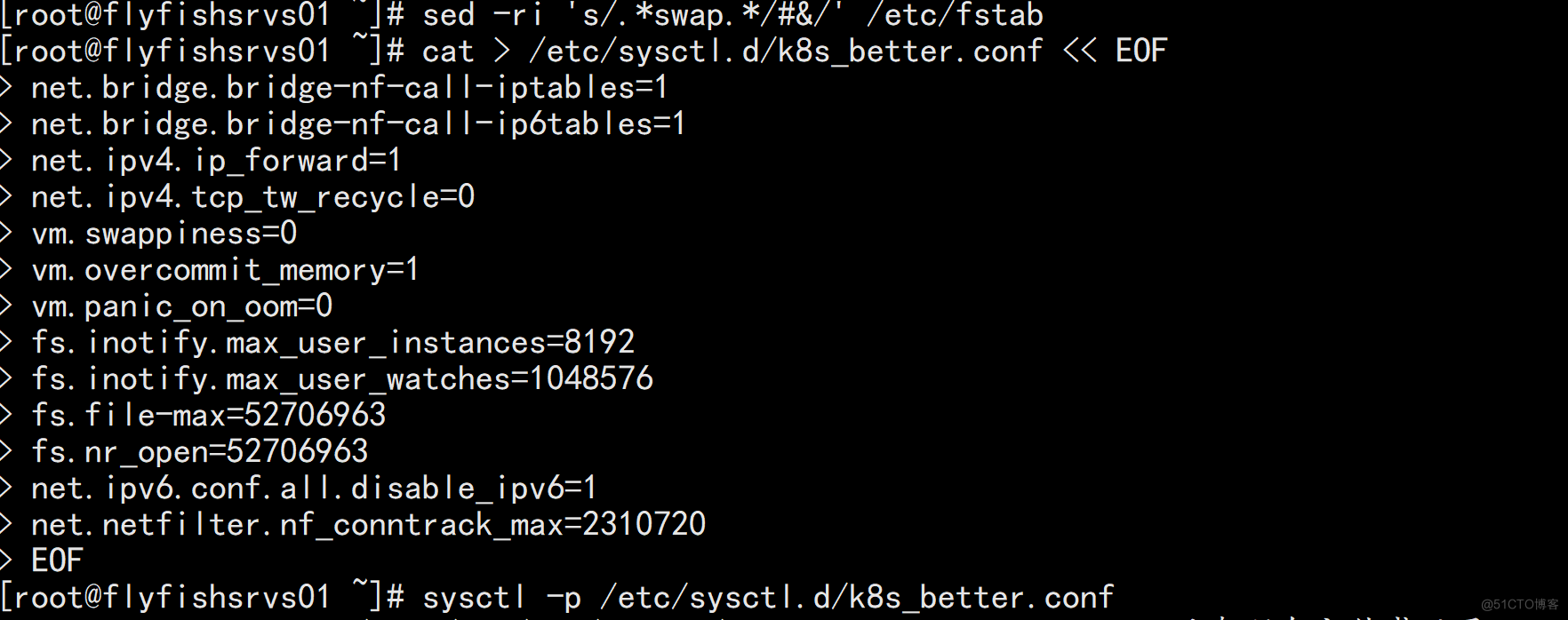

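    # Set the time zone and synchronize the clock
    yum install chrony -y
    vim /etc/chrony.conf

Add the following line to /etc/chrony.conf:

    server ntp1.aliyun.com iburst

    ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
    echo 'Asia/Shanghai' > /etc/timezone

    # Disable the firewall and SELinux
    systemctl stop firewalld
    systemctl disable firewalld
    sed -i 's/enforcing/disabled/' /etc/selinux/config
    setenforce 0

    # Disable swap
    swapoff -a
    sed -ri 's/.*swap.*/#&/' /etc/fstab

    # Kernel parameter tuning
    cat > /etc/sysctl.d/k8s_better.conf << EOF
    net.bridge.bridge-nf-call-iptables=1
    net.bridge.bridge-nf-call-ip6tables=1
    net.ipv4.ip_forward=1
    net.ipv4.tcp_tw_recycle=0
    vm.swappiness=0
    vm.overcommit_memory=1
    vm.panic_on_oom=0
    fs.inotify.max_user_instances=8192
    fs.inotify.max_user_watches=1048576
    fs.file-max=52706963
    fs.nr_open=52706963
    net.ipv6.conf.all.disable_ipv6=1
    net.netfilter.nf_conntrack_max=2310720
    EOF
    sysctl -p /etc/sysctl.d/k8s_better.conf

Note: `net.ipv4.tcp_tw_recycle` was removed in Linux 4.12, so on the 5.4 kernel installed above `sysctl` reports that key as missing and the line can be dropped. The `net.bridge.*` keys exist only while the `br_netfilter` module is loaded.

Make sure every machine has a different UUID. If the machines were cloned, edit the network interface configuration file and delete the UUID line.

    cat /sys/class/dmi/id/product_uuid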
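To preview what the swap-disabling `sed` expression does before pointing it at the real `/etc/fstab`, here is a minimal sketch that applies the same substitution to a scratch file and prints the result. The helper name `comment_out_swap` and the sample fstab content are assumptions for illustration; `-E` is used as the portable spelling of `-r`.

```shell
#!/usr/bin/env bash
# comment_out_swap FILE: print FILE with every line that mentions swap prefixed by '#'.
# Same substitution as the fstab step, but written to stdout instead of edited in place.
comment_out_swap() {
  sed -E 's/.*swap.*/#&/' "$1"
}

# Made-up fstab content for illustration only.
sample_fstab="$(mktemp)"
cat > "$sample_fstab" << 'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

comment_out_swap "$sample_fstab"
rm -f "$sample_fstab"
```

Only the swap line gains a leading `#`; the root filesystem line passes through unchanged. Running the function twice on its own output would add a second `#`, which is harmless in fstab.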

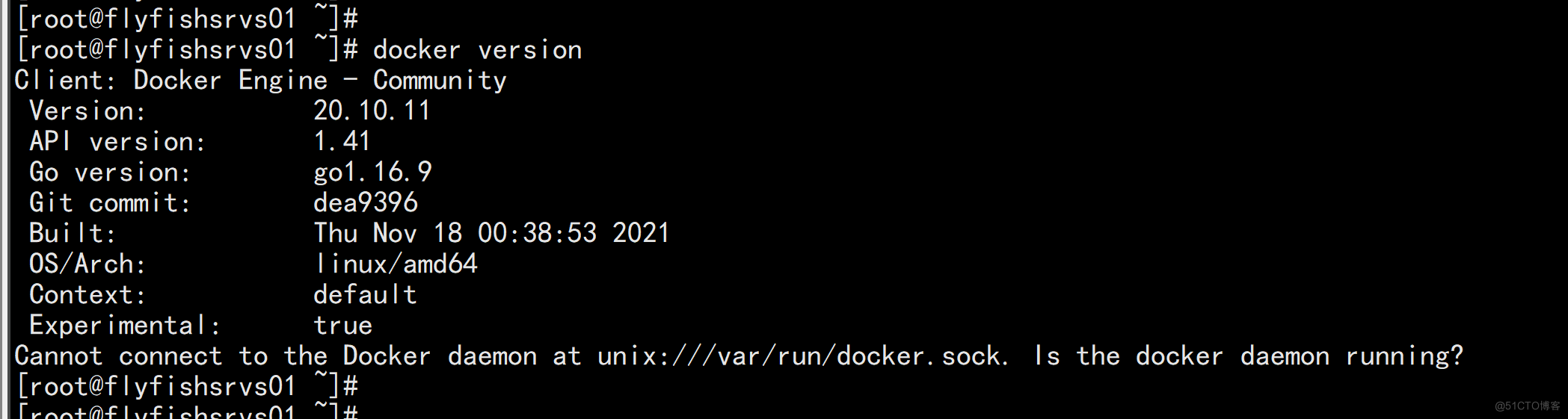

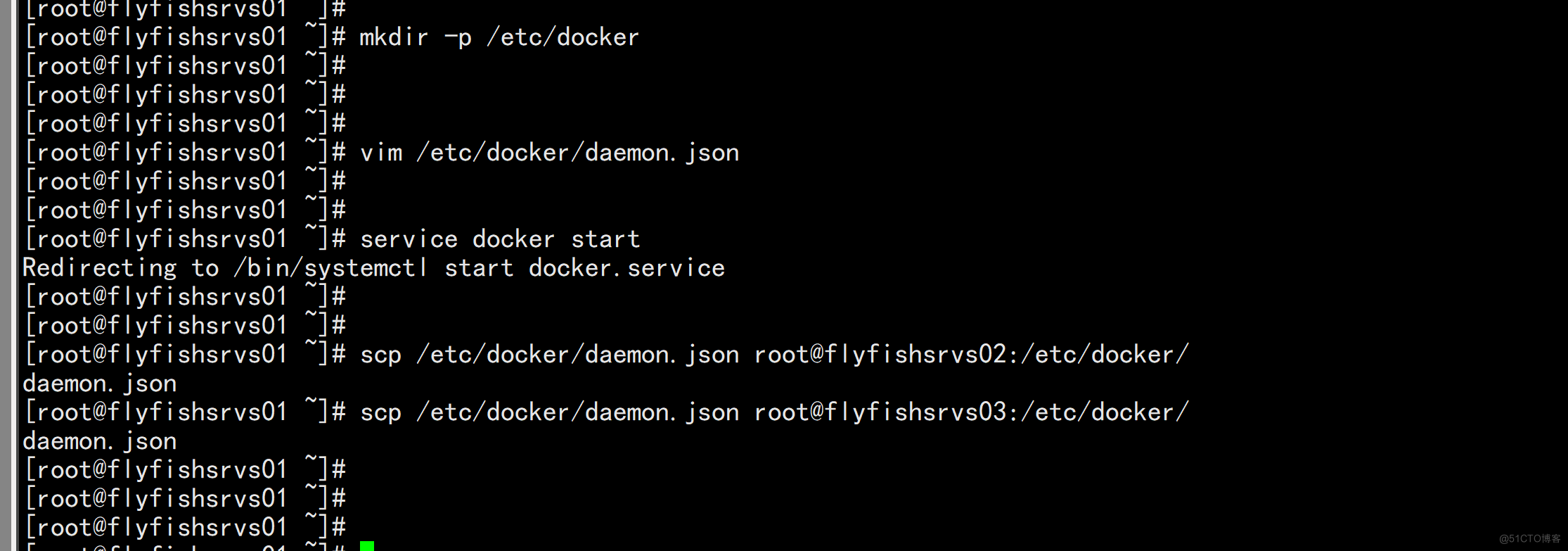

1.3 Install Docker

Install Docker on all nodes. This section uses the yum repository method.

1. Remove old versions

    yum remove docker \
      docker-client \
      docker-client-latest \
      docker-common \
      docker-latest \
      docker-latest-logrotate \
      docker-logrotate \
      docker-engine \
      docker-ce
    rm -rf /var/lib/docker

2. Install the prerequisite packages

    yum install -y yum-utils device-mapper-persistent-data lvm2

3. Configure the yum repository

    # The Aliyun mirror is recommended
    yum-config-manager \
      --add-repo \
      http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

4. Install Docker

Two installation methods are described here; I used the first.

1) Install with yum

    # List the available versions
    yum list docker-ce --showduplicates | sort -r

    # Install version 18.09.1; other versions follow the same pattern
    yum install docker-ce-18.09.1 docker-ce-cli-18.09.1 containerd.io

    # Install the latest version
    yum install -y docker-ce docker-ce-cli containerd.io

    mkdir -p /etc/docker
    cat > /etc/docker/daemon.json << EOF
    {
      "registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]
    }
    EOF

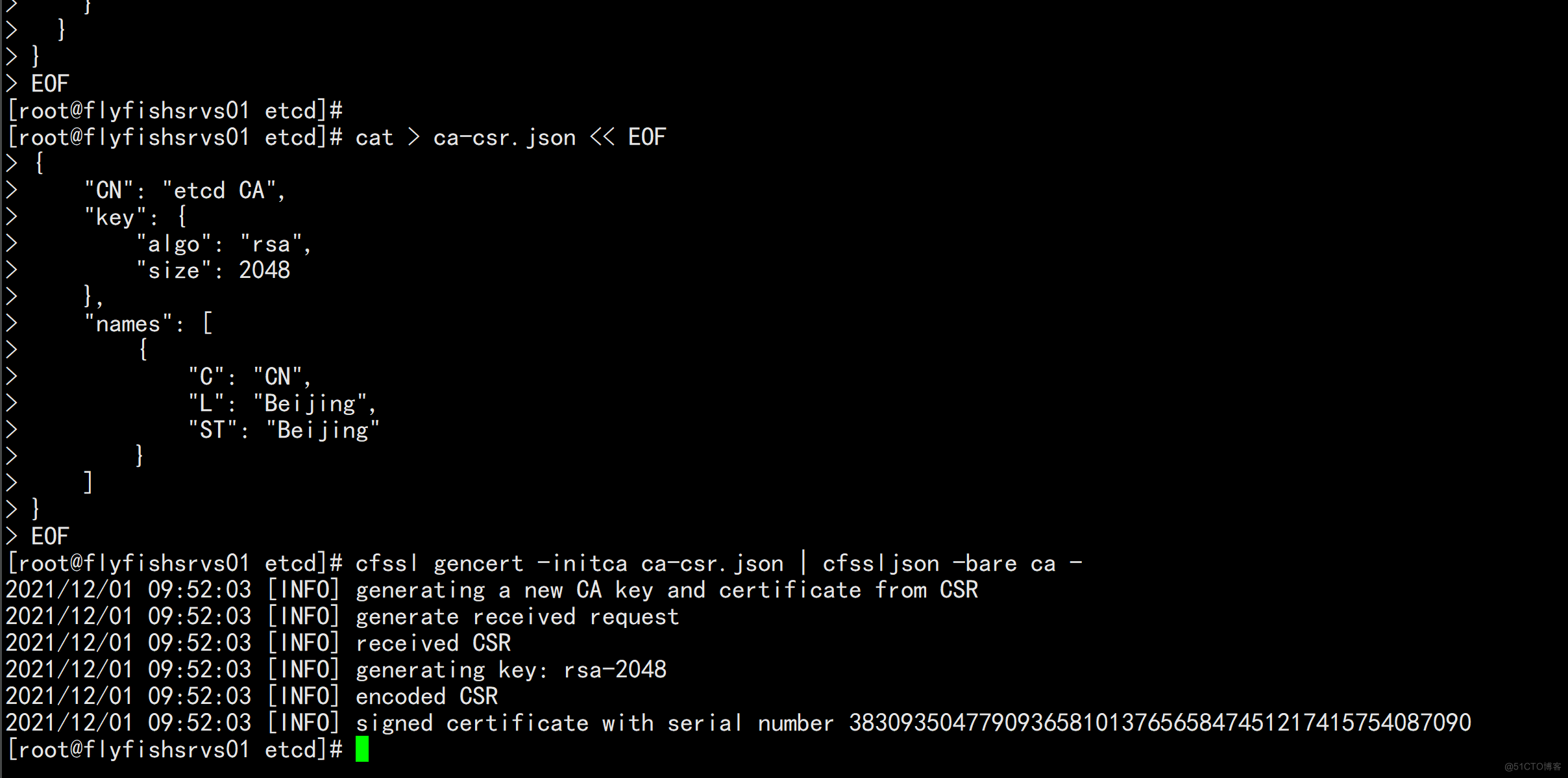

Part 2: Deploy the etcd cluster

2.1 About signing certificates

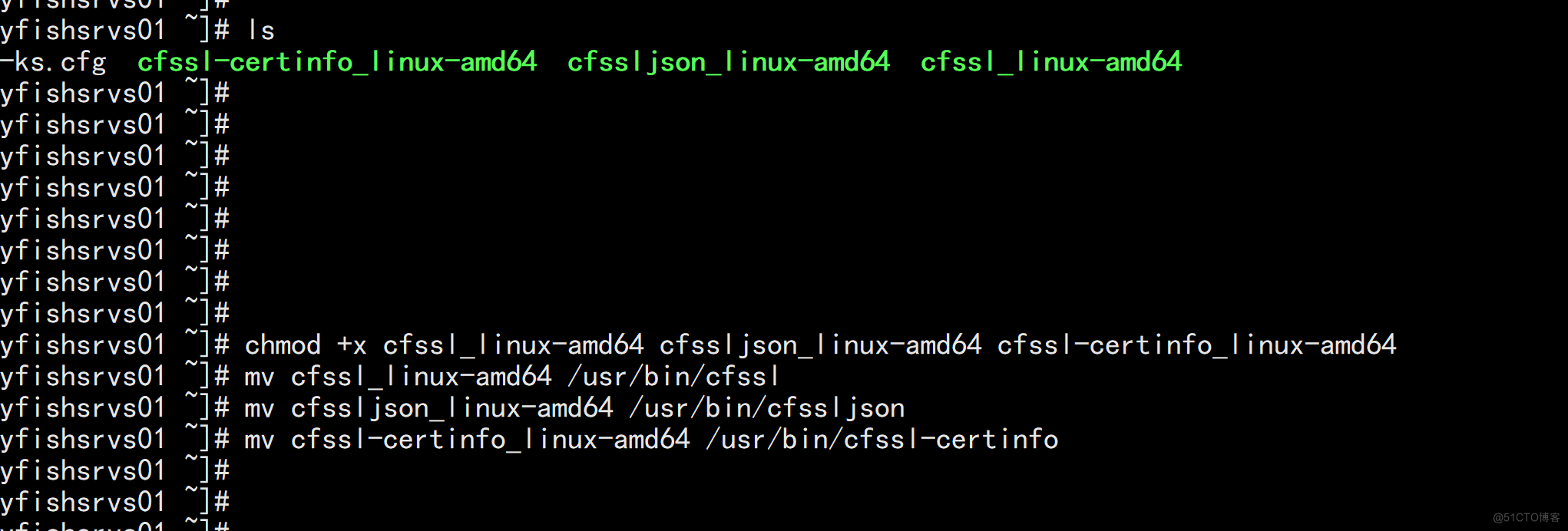

    wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64
    wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64
    chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64
    mv cfssl_linux-amd64 /usr/bin/cfssl
    mv cfssljson_linux-amd64 /usr/bin/cfssljson
    mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

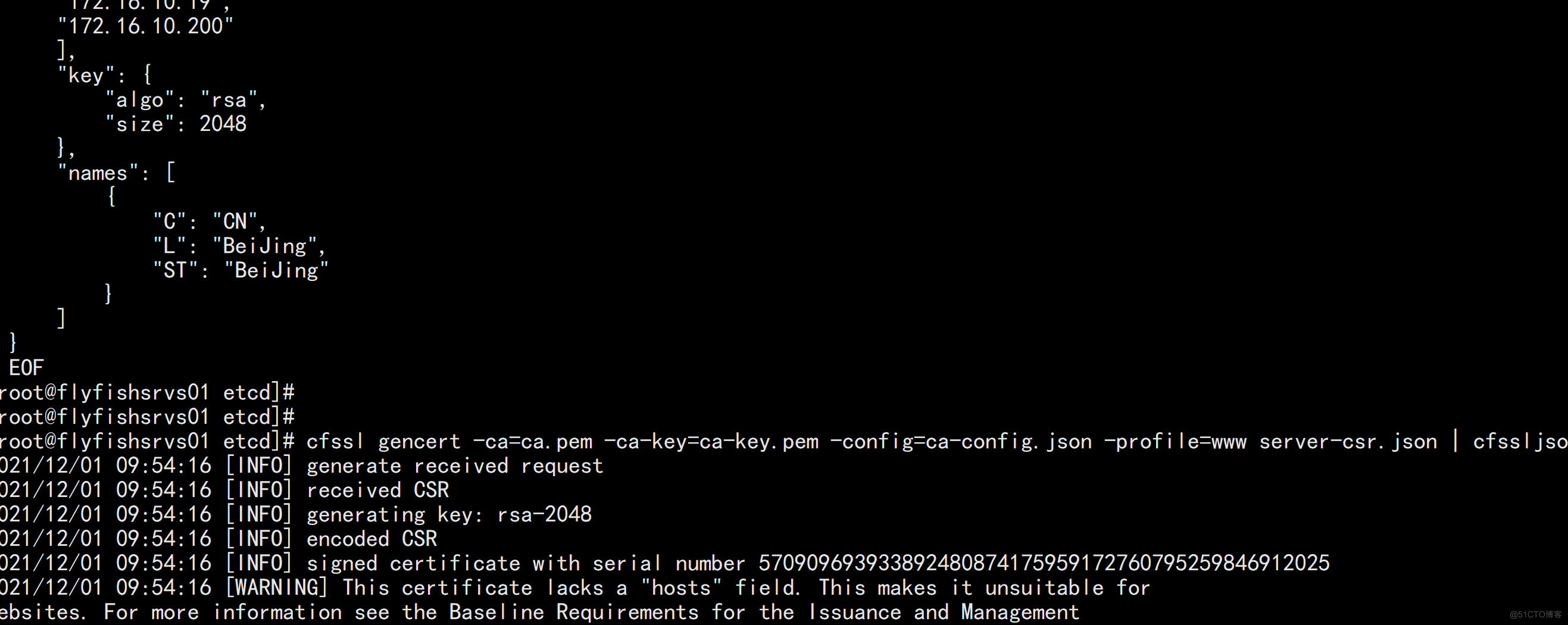

2.2 Deploy etcd

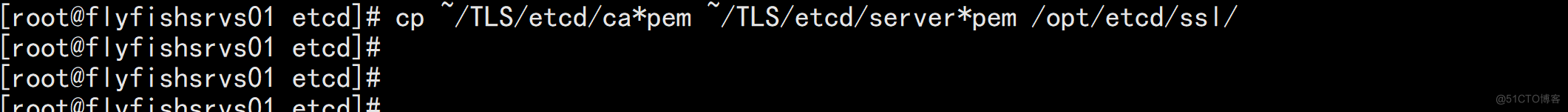

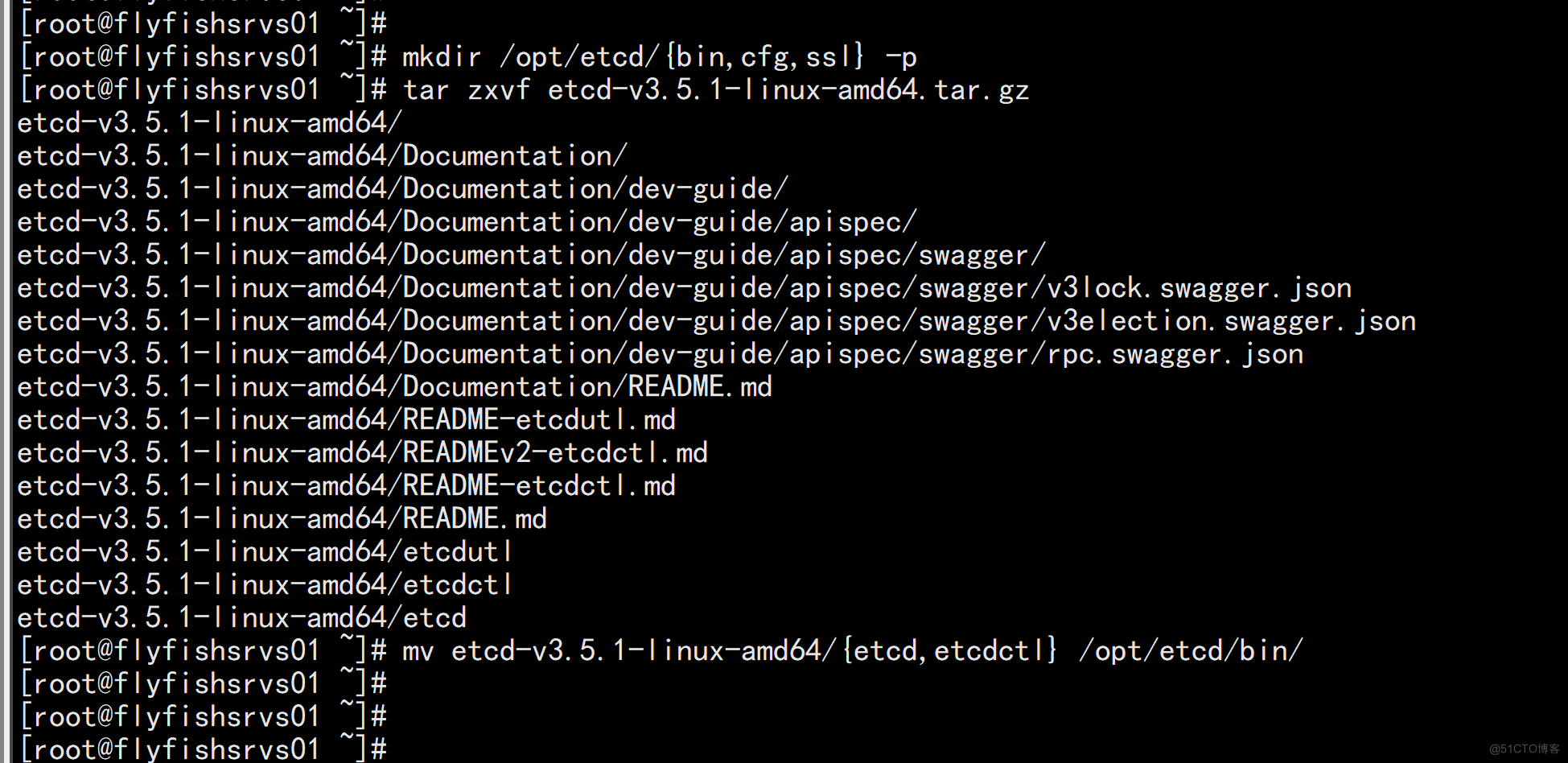

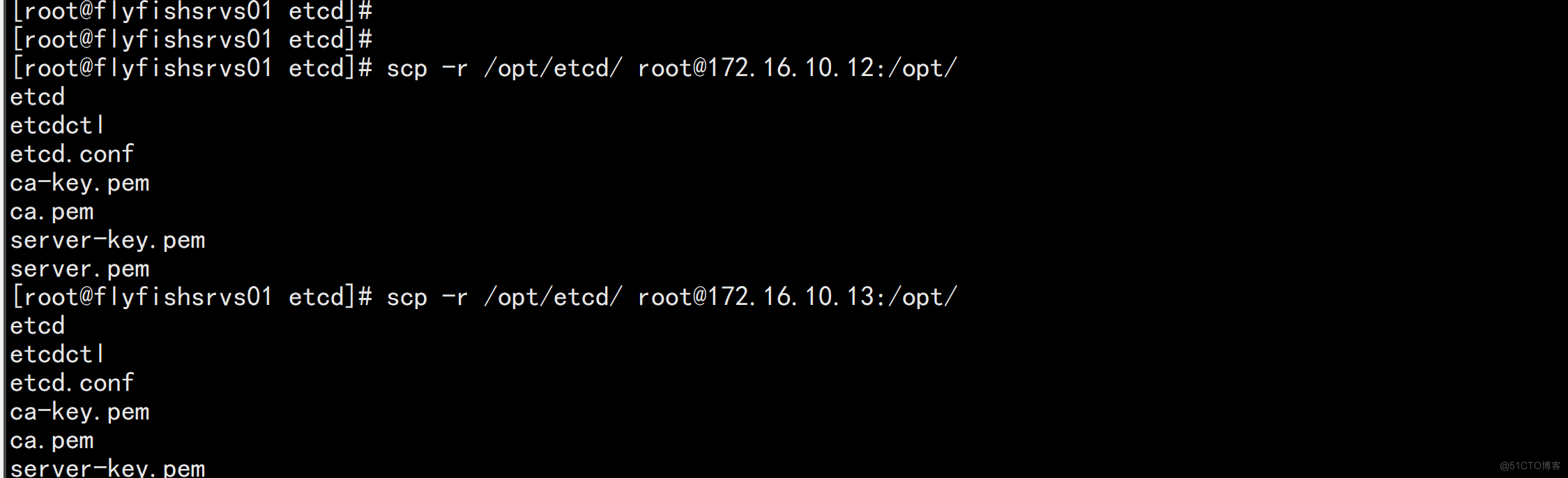

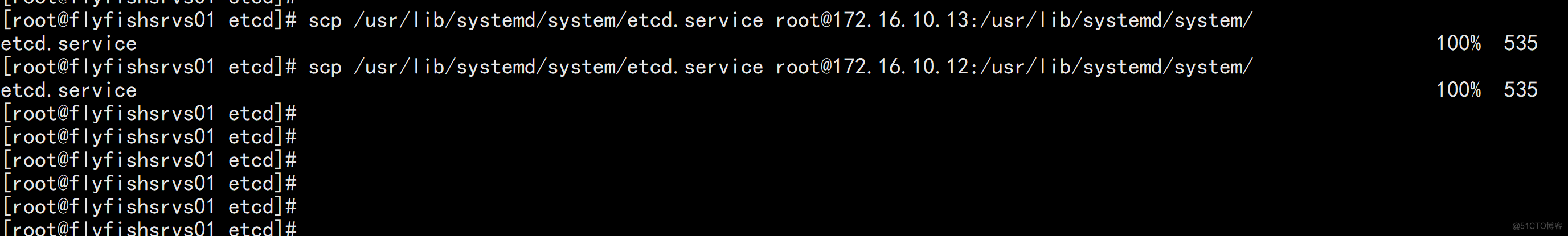

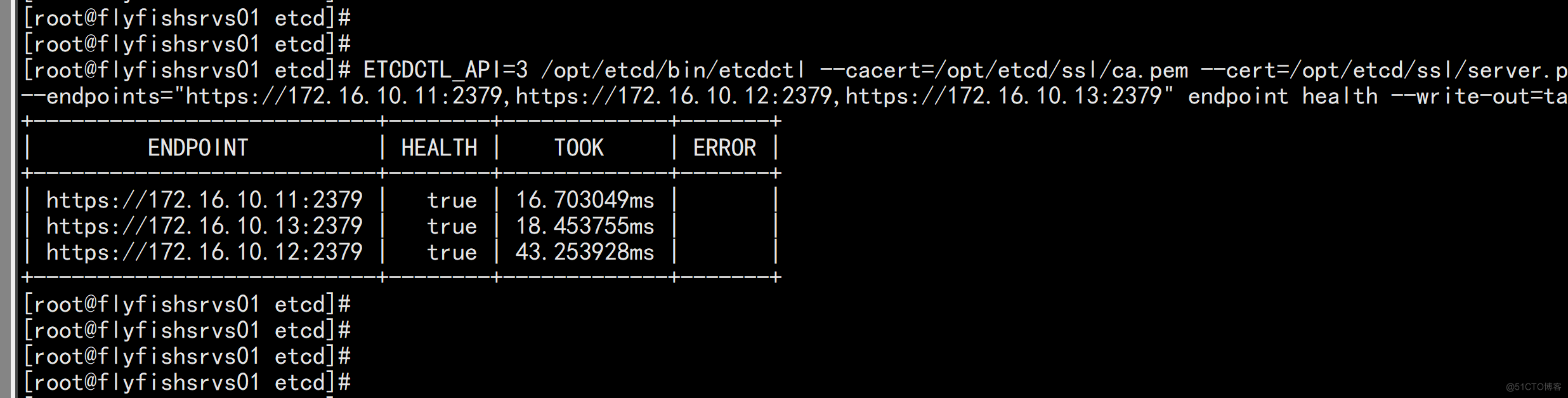

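1. What etcd is

etcd is a distributed key-value store. Kubernetes keeps its data in etcd, so an etcd database has to be prepared first. To avoid a single point of failure, deploy etcd as a cluster. This guide uses 3 machines, which tolerates the failure of 1 machine; a 5-machine cluster tolerates 2 failures.

Download: https://github.com/etcd-io/etcd/releases

The following steps are run on node 1. To keep things simple, all files generated on node 1 are copied to node 2 and node 3 afterwards.

2. Install and configure etcd

    mkdir /opt/etcd/{bin,cfg,ssl} -p
    tar zxvf etcd-v3.5.1-linux-amd64.tar.gz
    mv etcd-v3.5.1-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/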
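The fault-tolerance figures quoted in the etcd overview (3 members tolerate 1 failure, 5 tolerate 2) follow from majority quorum. The short sketch below computes both numbers for a given cluster size; the function names are illustrative helpers, not part of etcd.

```shell
#!/usr/bin/env bash
# quorum N: members that must agree for an N-member cluster to commit a write (a majority).
quorum() { echo $(( $1 / 2 + 1 )); }

# fault_tolerance N: members that can fail while a majority is still available.
fault_tolerance() { echo $(( ($1 - 1) / 2 )); }

for n in 1 3 4 5; do
  printf 'members=%d quorum=%d tolerated_failures=%d\n' \
    "$n" "$(quorum "$n")" "$(fault_tolerance "$n")"
done
```

A 4-member cluster tolerates the same single failure as a 3-member one, which is why odd cluster sizes are the usual choice.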

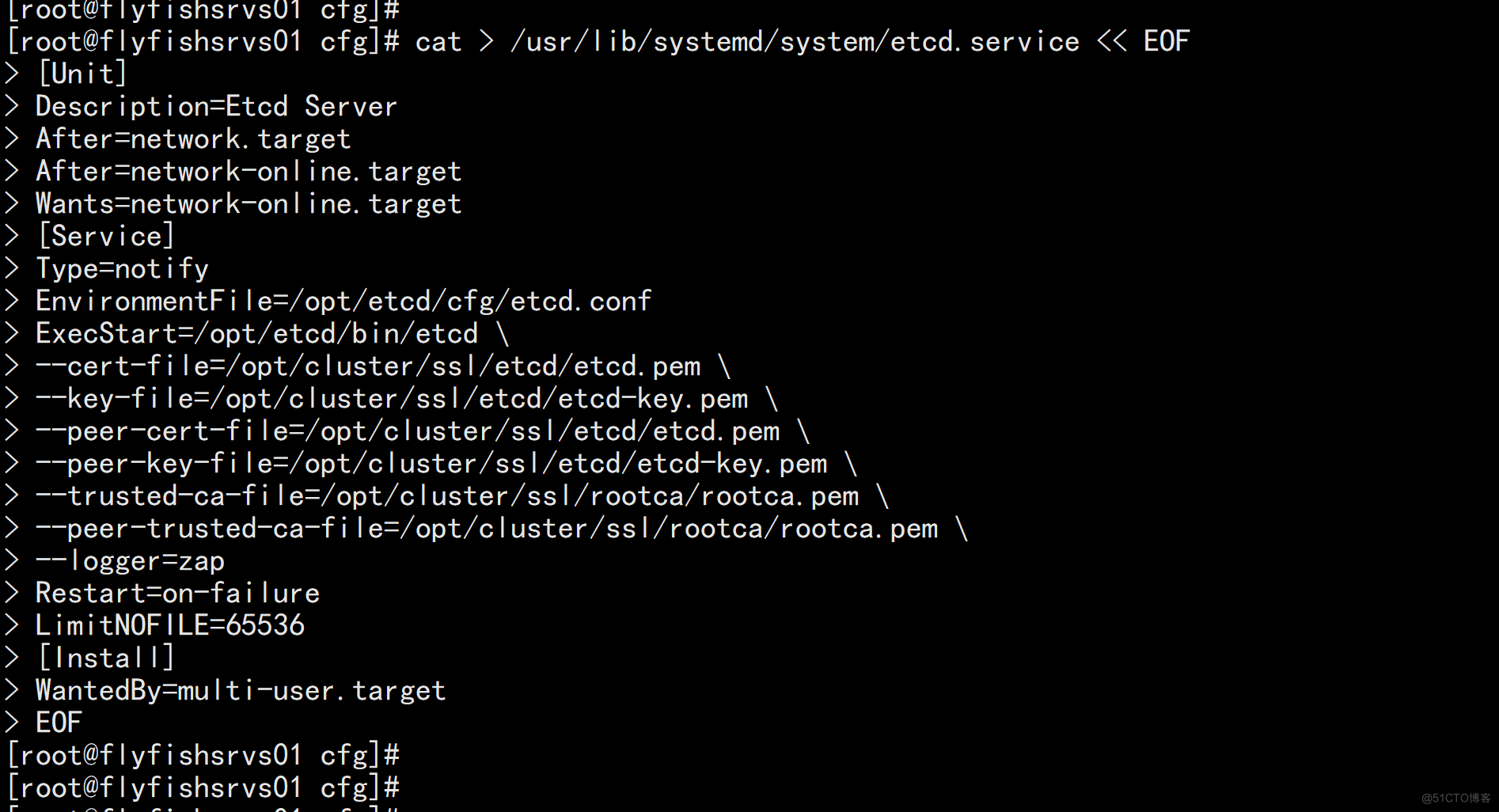

3. Manage etcd with systemd

    cat > /usr/lib/systemd/system/etcd.service << EOF
    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target

    [Service]
    Type=notify
    EnvironmentFile=/opt/etcd/cfg/etcd.conf
    ExecStart=/opt/etcd/bin/etcd \
      --cert-file=/opt/etcd/ssl/server.pem \
      --key-file=/opt/etcd/ssl/server-key.pem \
      --peer-cert-file=/opt/etcd/ssl/server.pem \
      --peer-key-file=/opt/etcd/ssl/server-key.pem \
      --trusted-ca-file=/opt/etcd/ssl/ca.pem \
      --peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \
      --logger=zap
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

etcd configuration on flyfishsrvs02:

    vim /opt/etcd/cfg/etcd.conf

    #[Member]
    ETCD_NAME="etcd-2"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://172.16.10.12:2380"
    ETCD_LISTEN_CLIENT_URLS="https://172.16.10.12:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.16.10.12:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://172.16.10.12:2379"
    ETCD_INITIAL_CLUSTER="etcd-1=https://172.16.10.11:2380,etcd-2=https://172.16.10.12:2380,etcd-3=https://172.16.10.13:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

etcd configuration on flyfishsrvs03:

    vim /opt/etcd/cfg/etcd.conf

    #[Member]
    ETCD_NAME="etcd-3"
    ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
    ETCD_LISTEN_PEER_URLS="https://172.16.10.13:2380"
    ETCD_LISTEN_CLIENT_URLS="https://172.16.10.13:2379"

    #[Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.16.10.13:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://172.16.10.13:2379"
    ETCD_INITIAL_CLUSTER="etcd-1=https://172.16.10.11:2380,etcd-2=https://172.16.10.12:2380,etcd-3=https://172.16.10.13:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"
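The per-node etcd.conf files differ only in the member name and the node's own IP address. As a minimal sketch, the hypothetical helper `render_etcd_conf` below prints the file for any member, so the same text does not have to be edited by hand on each machine. The helper name is an assumption; the keys and values are the ones used in the files above.

```shell
#!/usr/bin/env bash
# Shared by all members, taken from the etcd.conf files in this section.
INITIAL_CLUSTER="etcd-1=https://172.16.10.11:2380,etcd-2=https://172.16.10.12:2380,etcd-3=https://172.16.10.13:2380"

# render_etcd_conf NAME IP: print an etcd.conf for one member (hypothetical helper).
render_etcd_conf() {
  local name="$1" ip="$2"
  cat << EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="${INITIAL_CLUSTER}"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Example: print the file for the third member.
render_etcd_conf etcd-3 172.16.10.13
```

On a node, the output would be redirected to `/opt/etcd/cfg/etcd.conf`. The member name passed in must match that node's entry in `ETCD_INITIAL_CLUSTER`.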

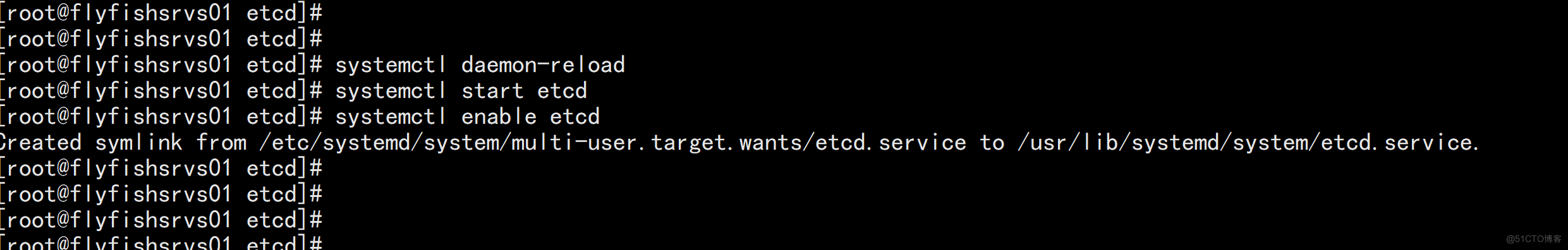

6. Start etcd

    systemctl daemon-reload
    systemctl start etcd
    systemctl enable etcd
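Once etcd is running on all three nodes, a health check confirms that the members can reach each other over TLS. The sketch below builds the endpoint list from the node IPs and calls `etcdctl endpoint health` with the certificate paths from the systemd unit. The `etcd_endpoints` helper is an illustrative assumption, and the check only runs where `/opt/etcd/bin/etcdctl` exists.

```shell
#!/usr/bin/env bash
# Member IPs from /etc/hosts in section 1.1.
ETCD_IPS="172.16.10.11 172.16.10.12 172.16.10.13"

# etcd_endpoints: turn the IP list into a comma-separated list of https client URLs.
etcd_endpoints() {
  local out="" ip
  for ip in $ETCD_IPS; do
    out="${out:+$out,}https://${ip}:2379"
  done
  echo "$out"
}

# Query the cluster only when etcdctl is installed on this host.
if [ -x /opt/etcd/bin/etcdctl ]; then
  ETCDCTL_API=3 /opt/etcd/bin/etcdctl \
    --cacert=/opt/etcd/ssl/ca.pem \
    --cert=/opt/etcd/ssl/server.pem \
    --key=/opt/etcd/ssl/server-key.pem \
    --endpoints="$(etcd_endpoints)" \
    endpoint health
fi
```

Note that the first member to start blocks until a second one joins, so `systemctl start etcd` on node 1 may appear to hang until etcd is started on another node.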

Part 3: Deploy Kubernetes 1.22.4

3.1 Downloading the latest Kubernetes release

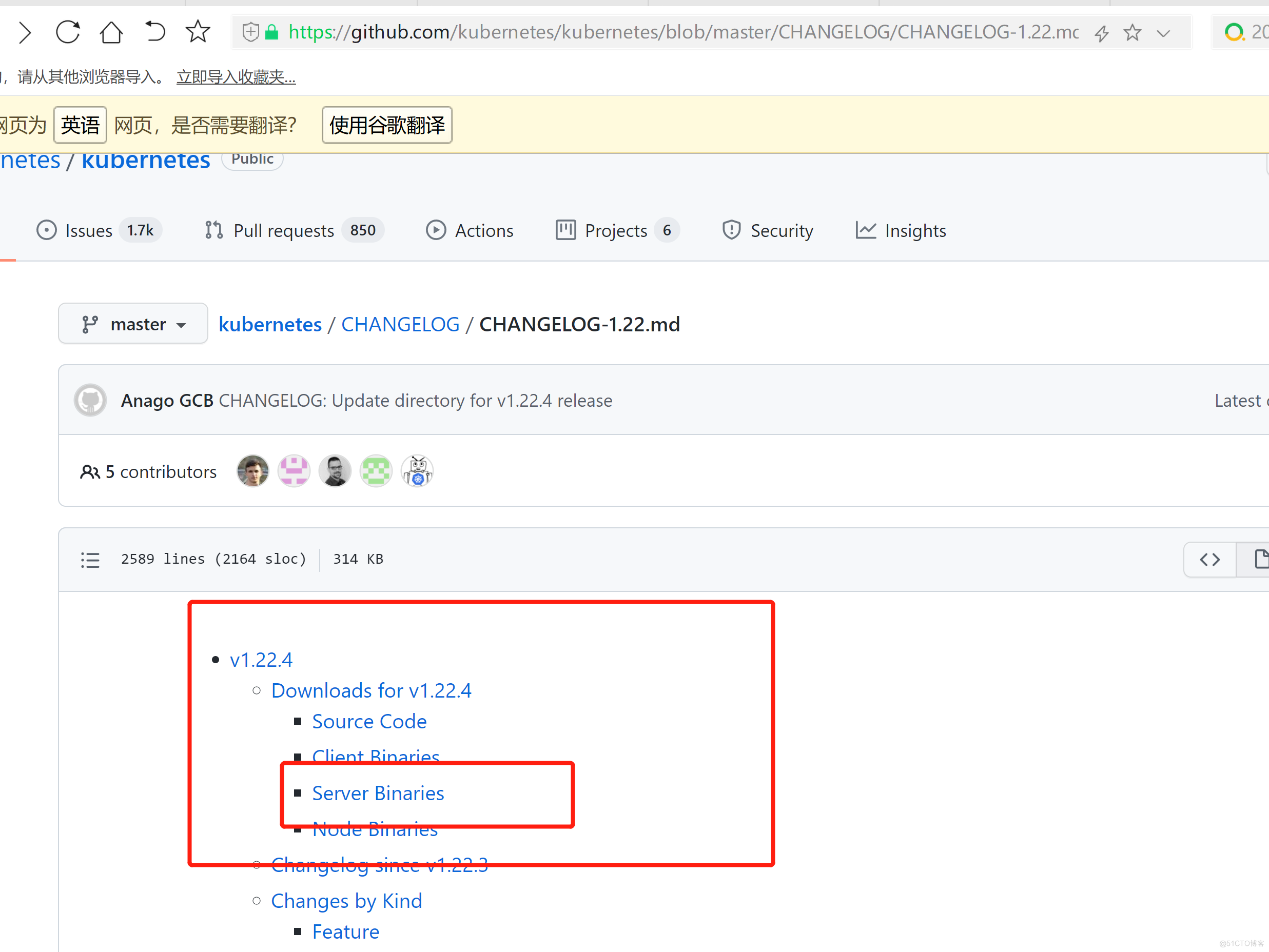

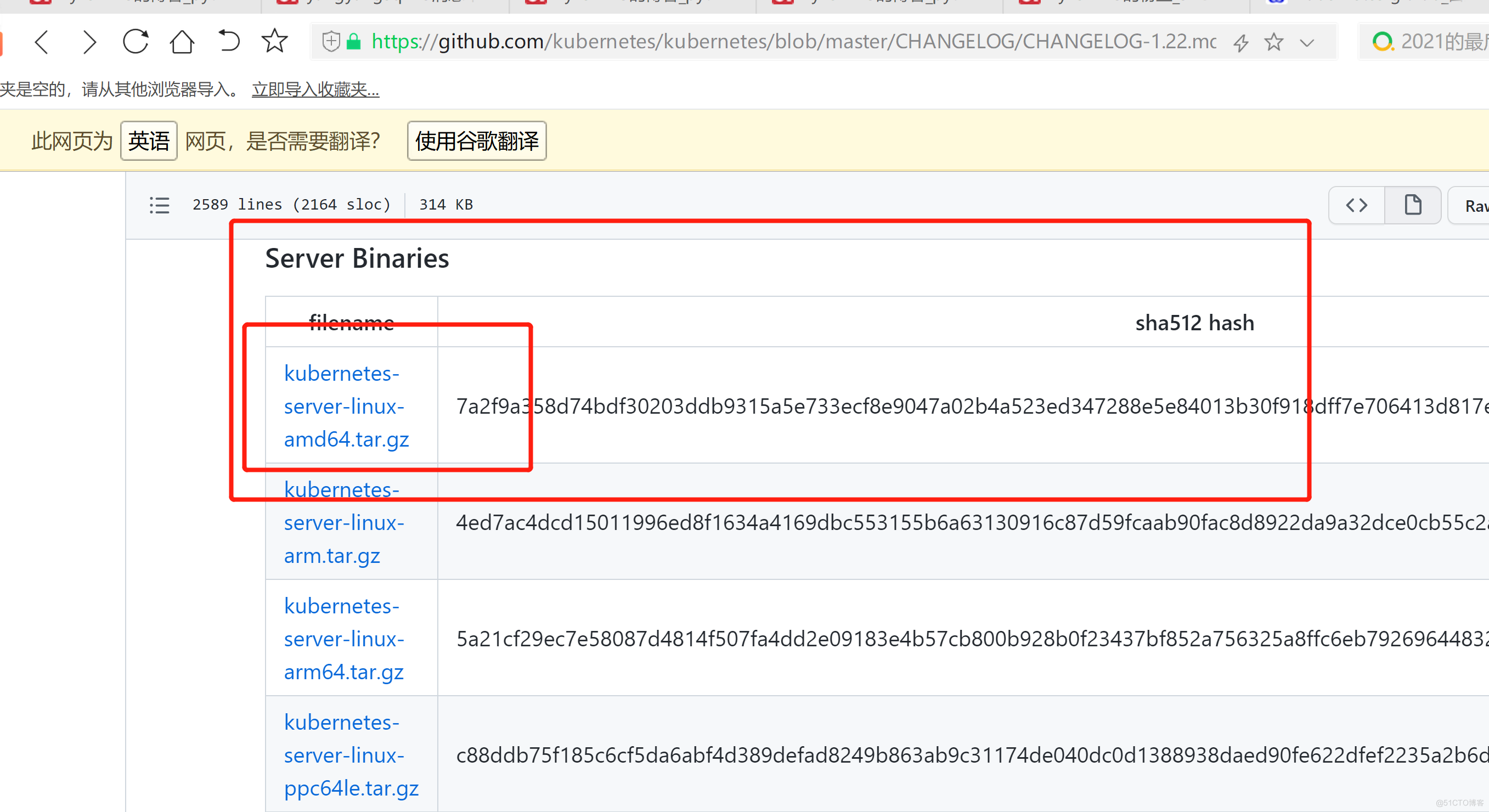

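1. Download the binaries from GitHub

Download page: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.22.md

Note: the page lists many packages. The server package alone is enough; it contains the binaries for both the master and the worker nodes.

3.2 Create the kube-apiserver certificates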

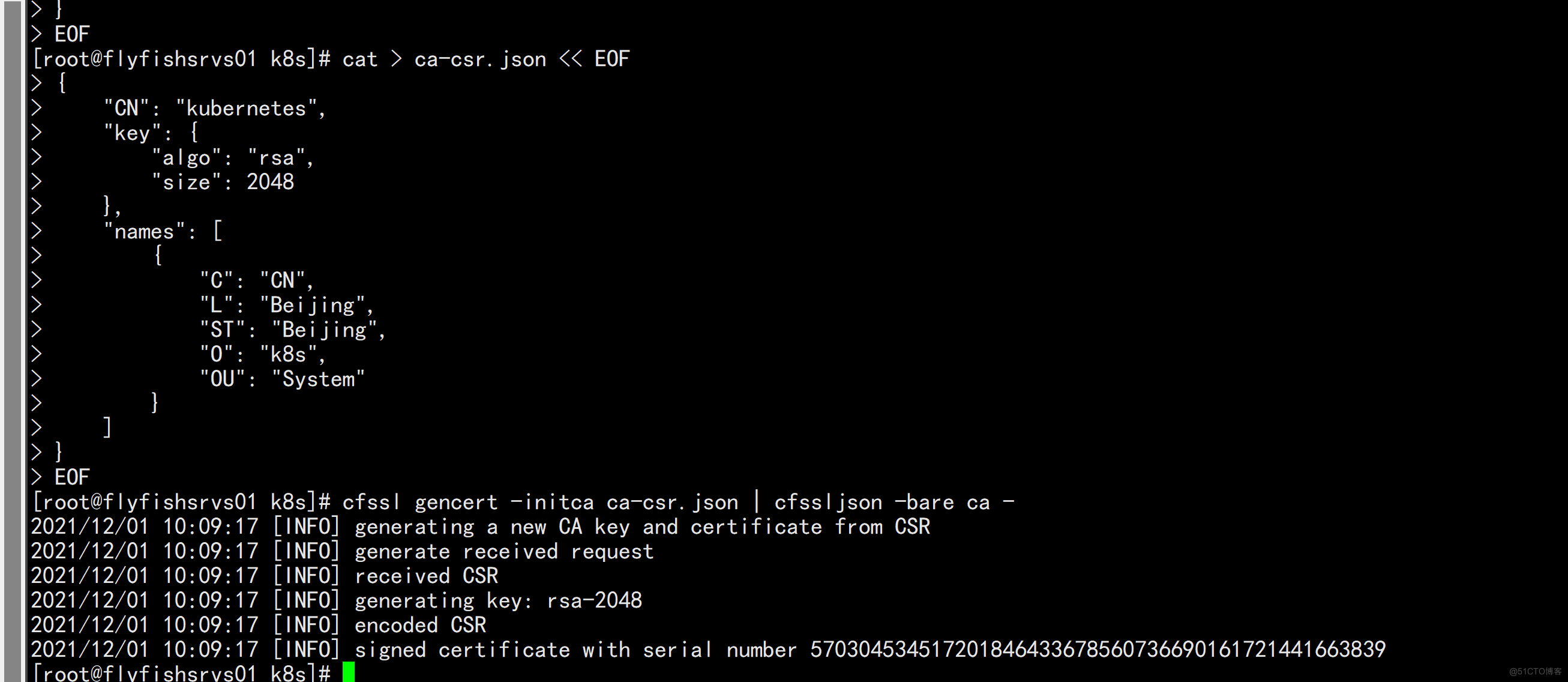

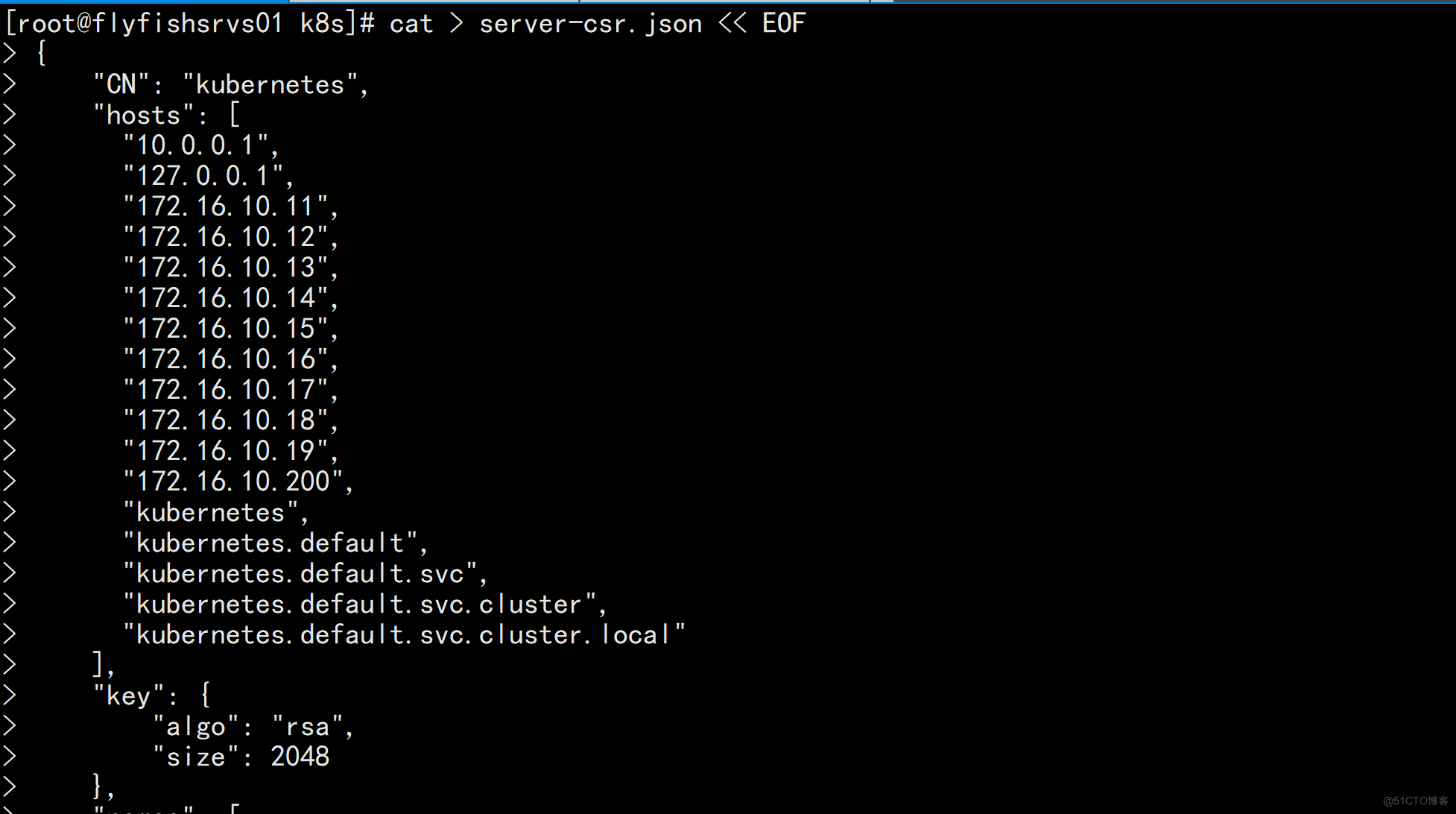

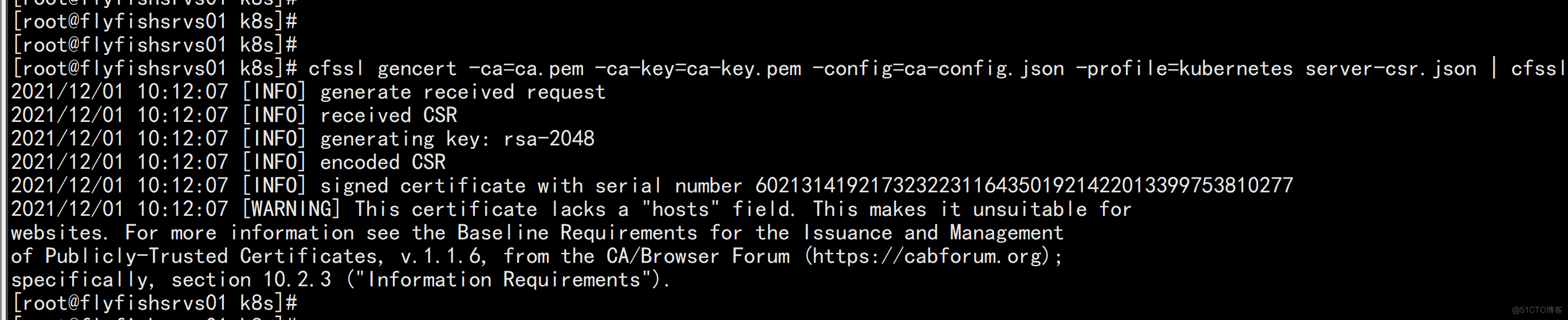

    cd ~/TLS/k8s

    cat > ca-config.json << EOF
    {
      "signing": {
        "default": {
          "expiry": "87600h"
        },
        "profiles": {
          "kubernetes": {
            "expiry": "87600h",
            "usages": [
              "signing",
              "key encipherment",
              "server auth",
              "client auth"
            ]
          }
        }
      }
    }
    EOF

    cat > ca-csr.json << EOF
    {
      "CN": "kubernetes",
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "Beijing",
          "ST": "Beijing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

Generate the certificate:

    cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

This produces the files ca.pem and ca-key.pem.

3.3 Deploy Kubernetes 1.22.4

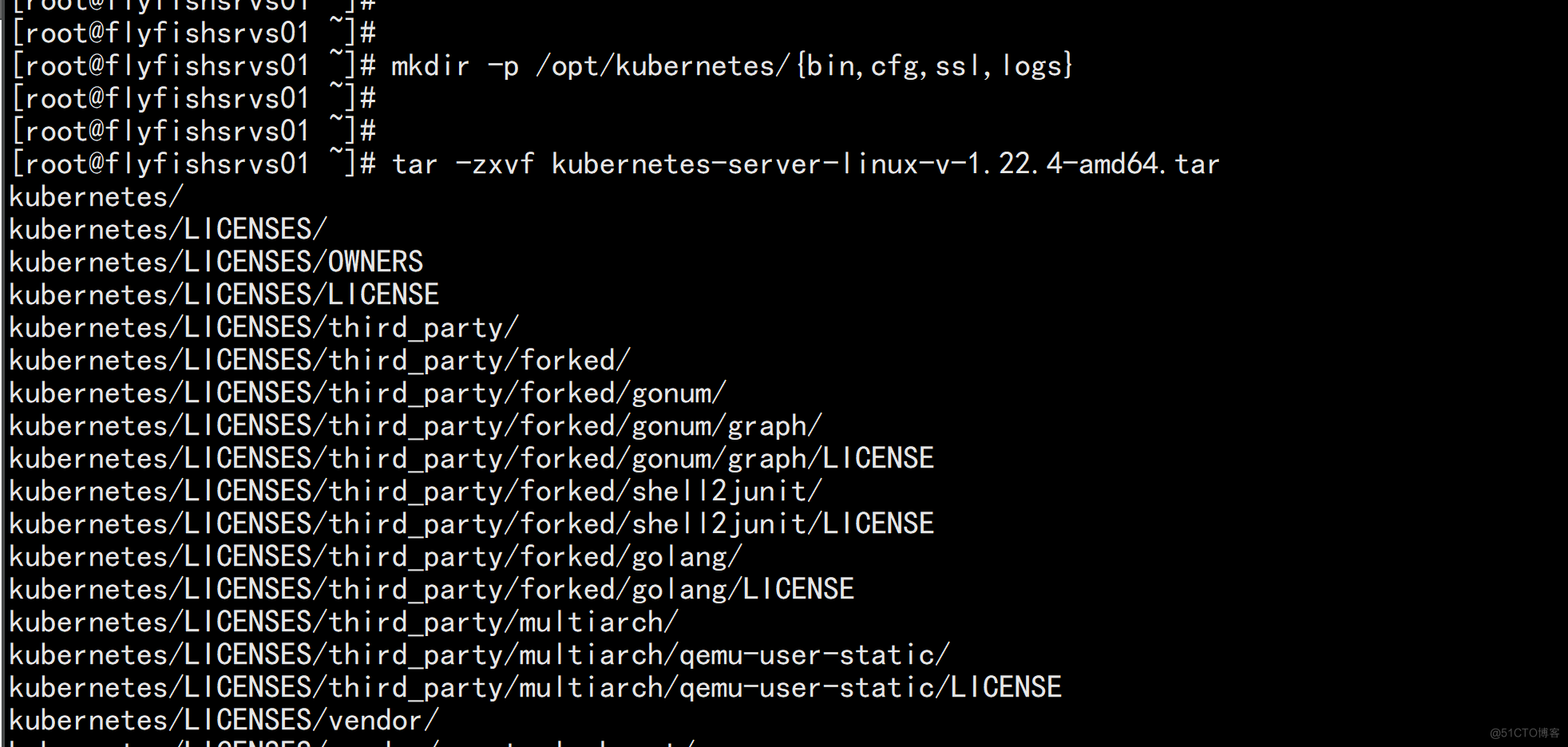

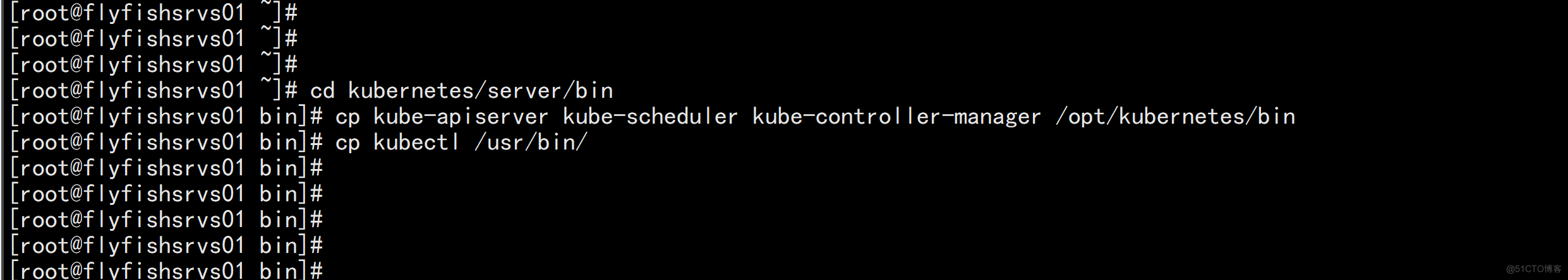

Unpack the binary package:

    mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}
    tar -zxvf kubernetes-server-linux-v-1.22.4-amd64.tar
    cd kubernetes/server/bin
    cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin
    cp kubectl /usr/bin/

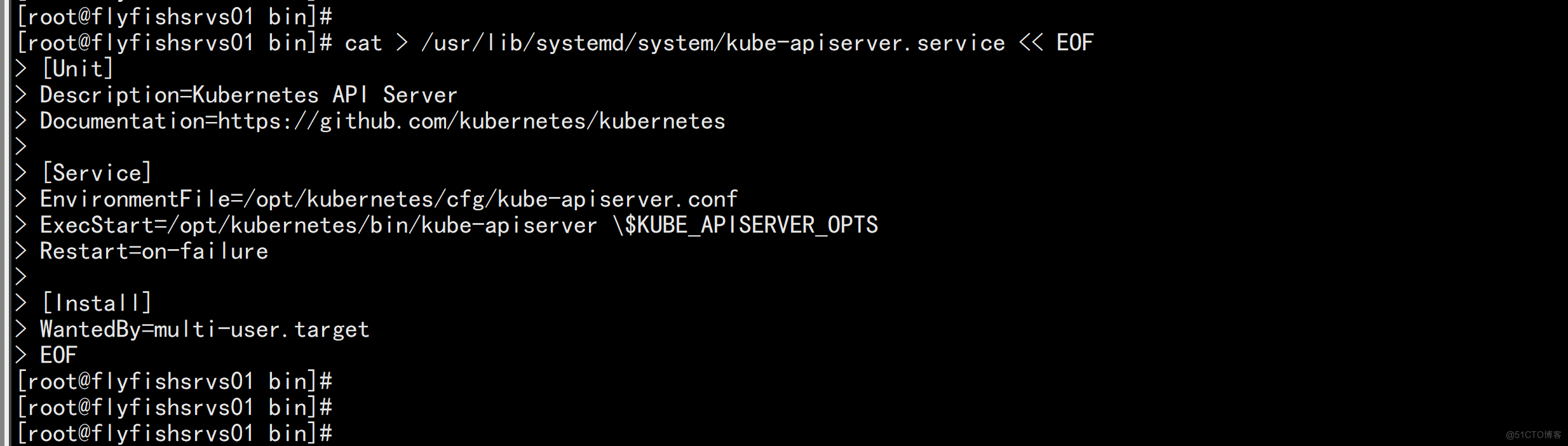

3.3.1 Deploy kube-apiserver

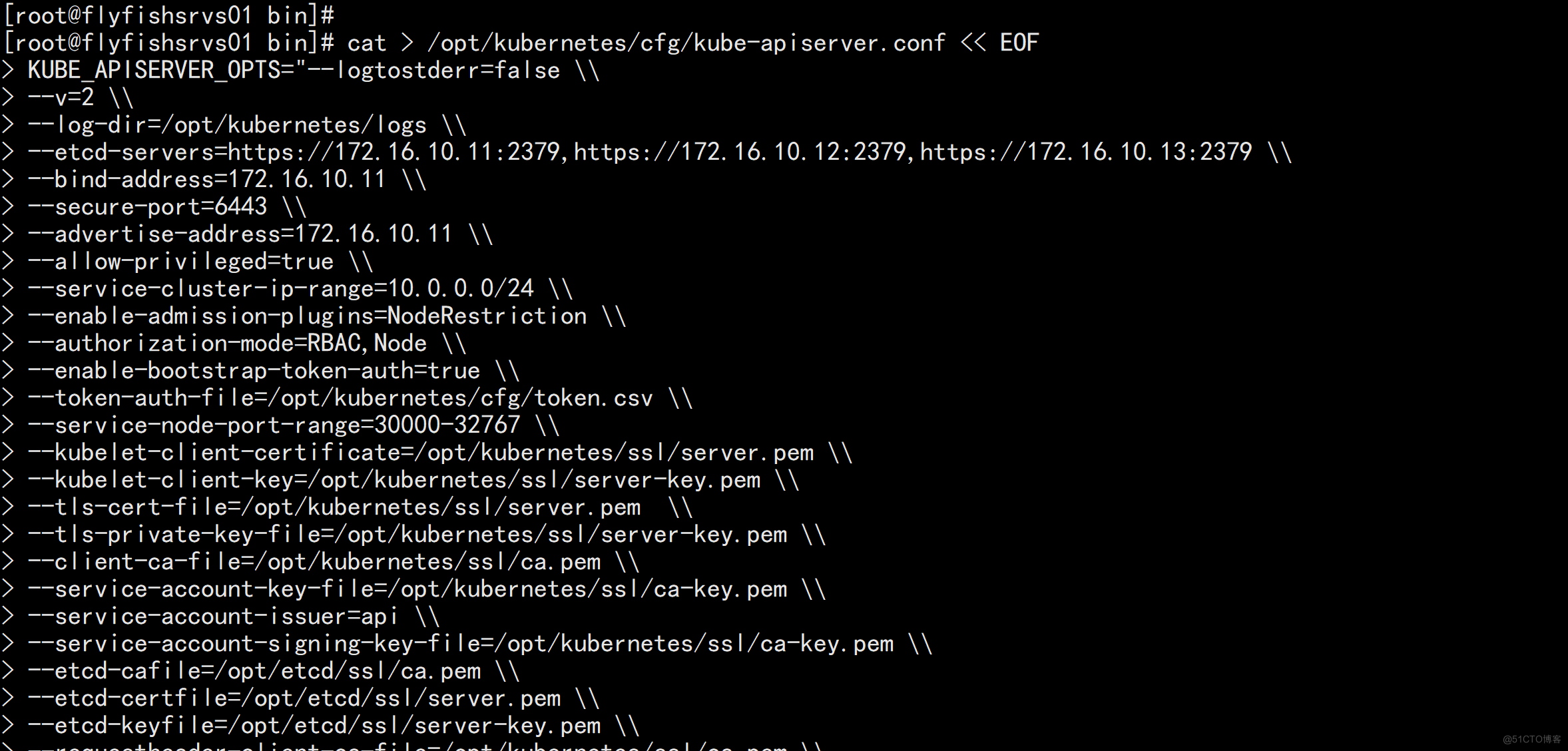

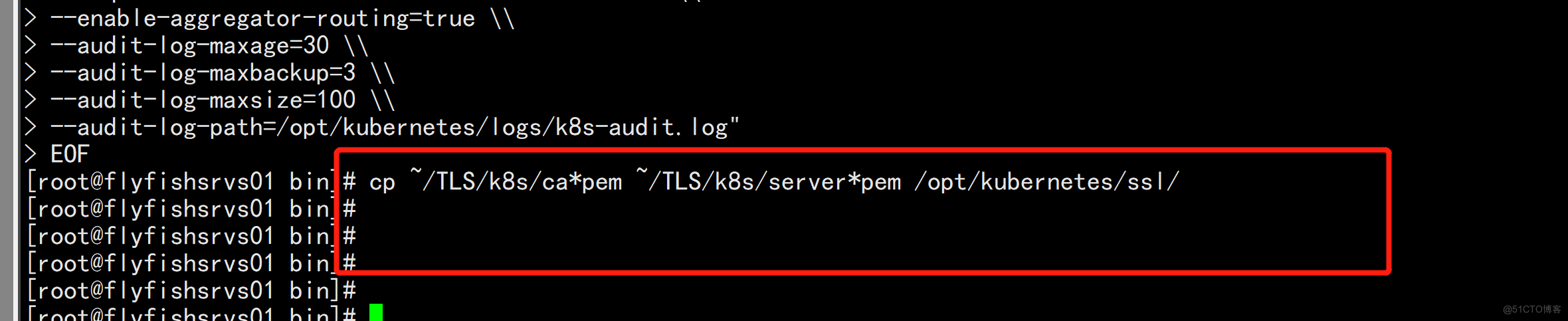

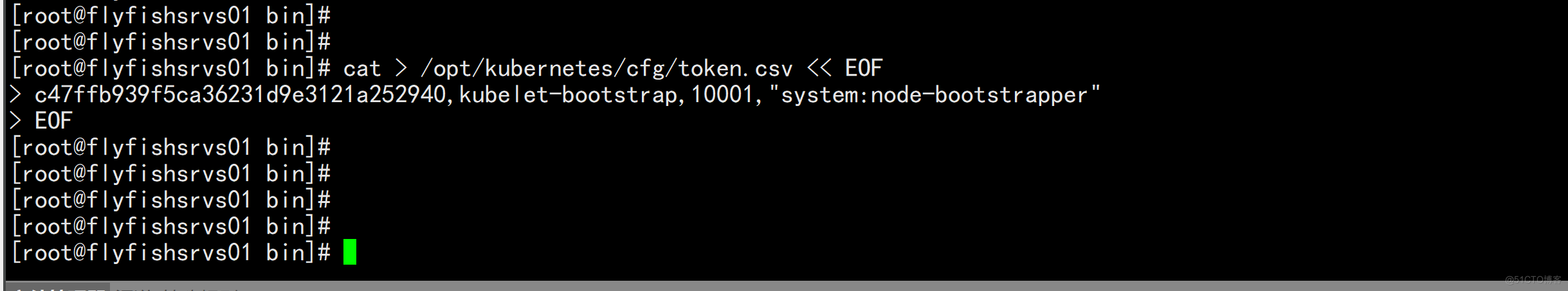

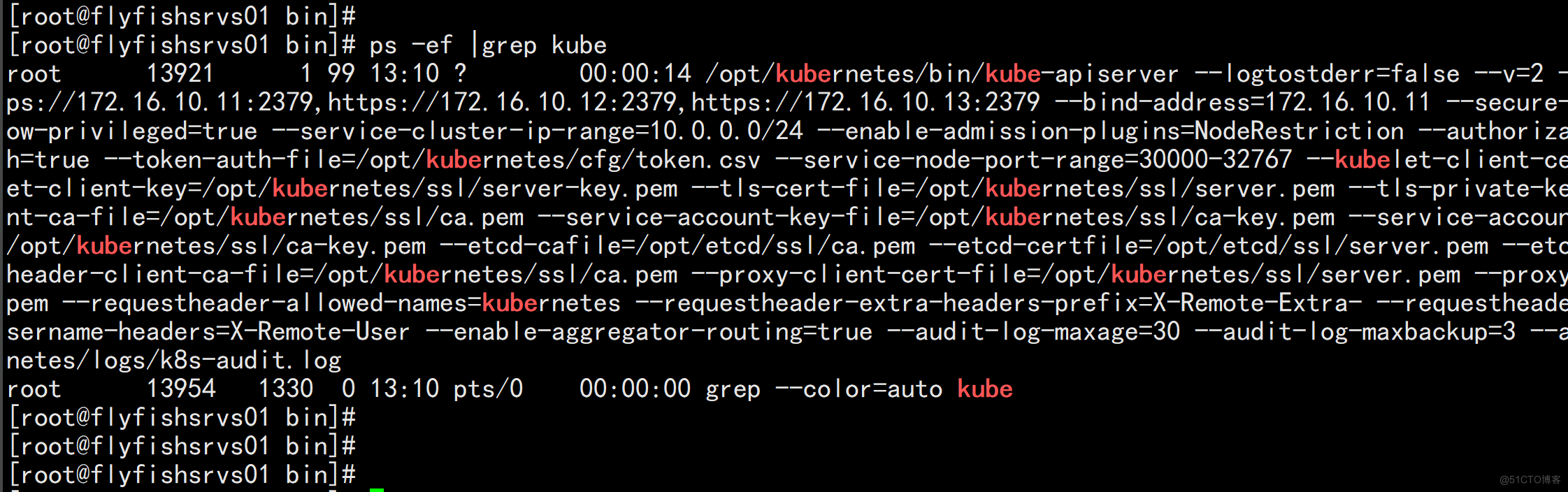

1. Create the configuration file

    cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF
    KUBE_APISERVER_OPTS="--logtostderr=false \\
    --v=2 \\
    --log-dir=/opt/kubernetes/logs \\
    --etcd-servers=https://172.16.10.11:2379,https://172.16.10.12:2379,https://172.16.10.13:2379 \\
    --bind-address=172.16.10.11 \\
    --secure-port=6443 \\
    --advertise-address=172.16.10.11 \\
    --allow-privileged=true \\
    --service-cluster-ip-range=10.0.0.0/24 \\
    --enable-admission-plugins=NodeRestriction \\
    --authorization-mode=RBAC,Node \\
    --enable-bootstrap-token-auth=true \\
    --token-auth-file=/opt/kubernetes/cfg/token.csv \\
    --service-node-port-range=30000-32767 \\
    --kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \\
    --kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \\
    --tls-cert-file=/opt/kubernetes/ssl/server.pem \\
    --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\
    --client-ca-file=/opt/kubernetes/ssl/ca.pem \\
    --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --service-account-issuer=api \\
    --service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --etcd-cafile=/opt/etcd/ssl/ca.pem \\
    --etcd-certfile=/opt/etcd/ssl/server.pem \\
    --etcd-keyfile=/opt/etcd/ssl/server-key.pem \\
    --requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \\
    --proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \\
    --proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \\
    --requestheader-allowed-names=kubernetes \\
    --requestheader-extra-headers-prefix=X-Remote-Extra- \\
    --requestheader-group-headers=X-Remote-Group \\
    --requestheader-username-headers=X-Remote-User \\
    --enable-aggregator-routing=true \\
    --audit-log-maxage=30 \\
    --audit-log-maxbackup=3 \\
    --audit-log-maxsize=100 \\
    --audit-log-path=/opt/kubernetes/logs/k8s-audit.log"
    EOF
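The `--token-auth-file` flag above points at `/opt/kubernetes/cfg/token.csv`, a static token file whose records have the form `token,user,uid,"group1,group2"`. A minimal sketch for generating a fresh 32-character hex token and the matching record follows. The user name `kubelet-bootstrap` is the one that appears later as the CSR requestor; the uid `10001` and the group `system:node-bootstrapper` are assumptions, so adjust them to whatever your RBAC bindings expect.

```shell
#!/usr/bin/env bash
# new_bootstrap_token: 16 random bytes rendered as 32 lowercase hex characters.
new_bootstrap_token() {
  head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# token_csv_line TOKEN: one static-token-file record in the form token,user,uid,"groups".
# The uid 10001 and the group name are assumptions, not taken from this article.
token_csv_line() {
  printf '%s,kubelet-bootstrap,10001,"system:node-bootstrapper"\n' "$1"
}

token="$(new_bootstrap_token)"
token_csv_line "$token"
# To install it: token_csv_line "$token" > /opt/kubernetes/cfg/token.csv
```

kube-apiserver reads the token file only at startup, so the file must exist before the service starts, and the same token has to be used in the kubelet bootstrap kubeconfig.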

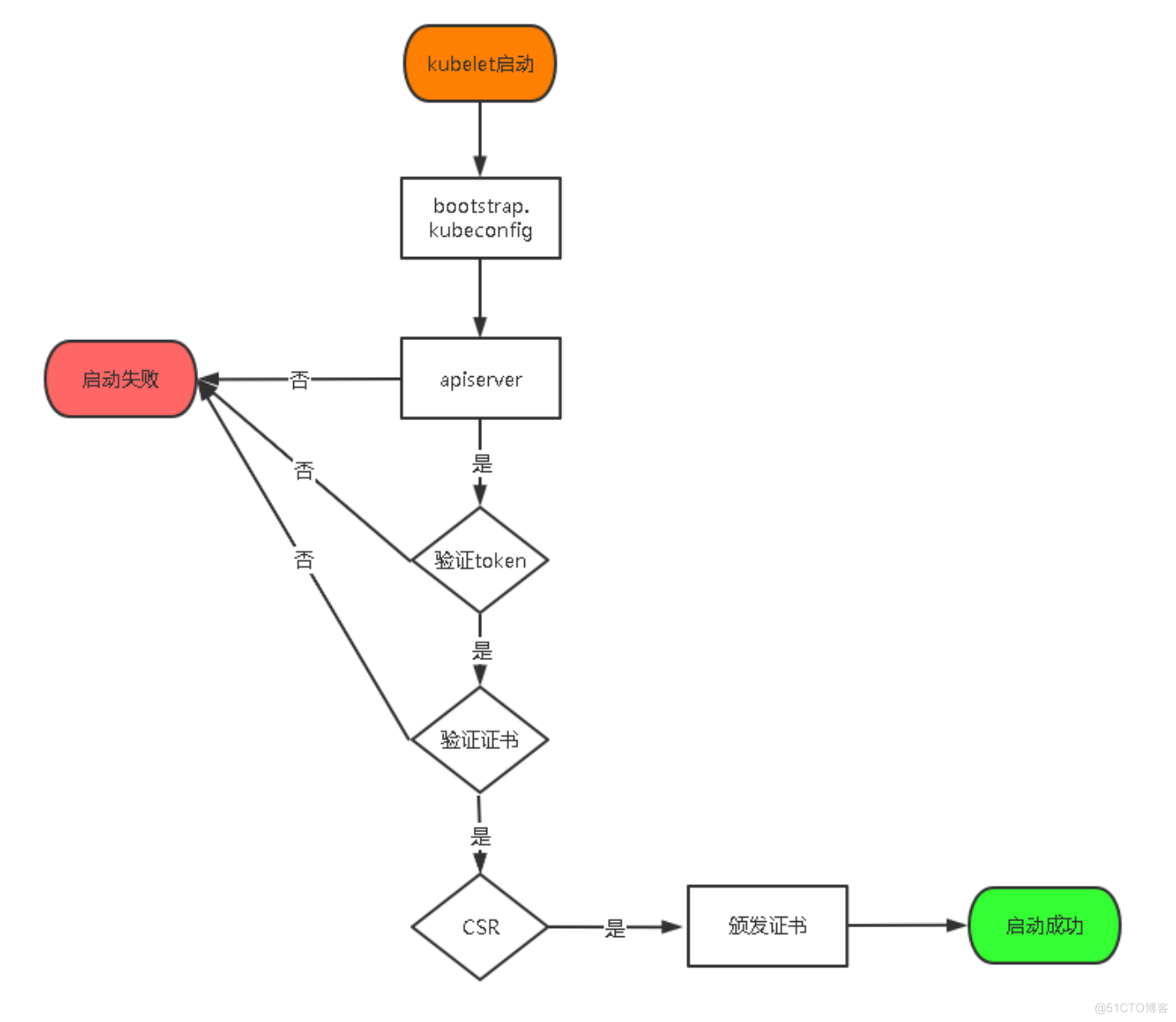

TLS bootstrapping workflow:

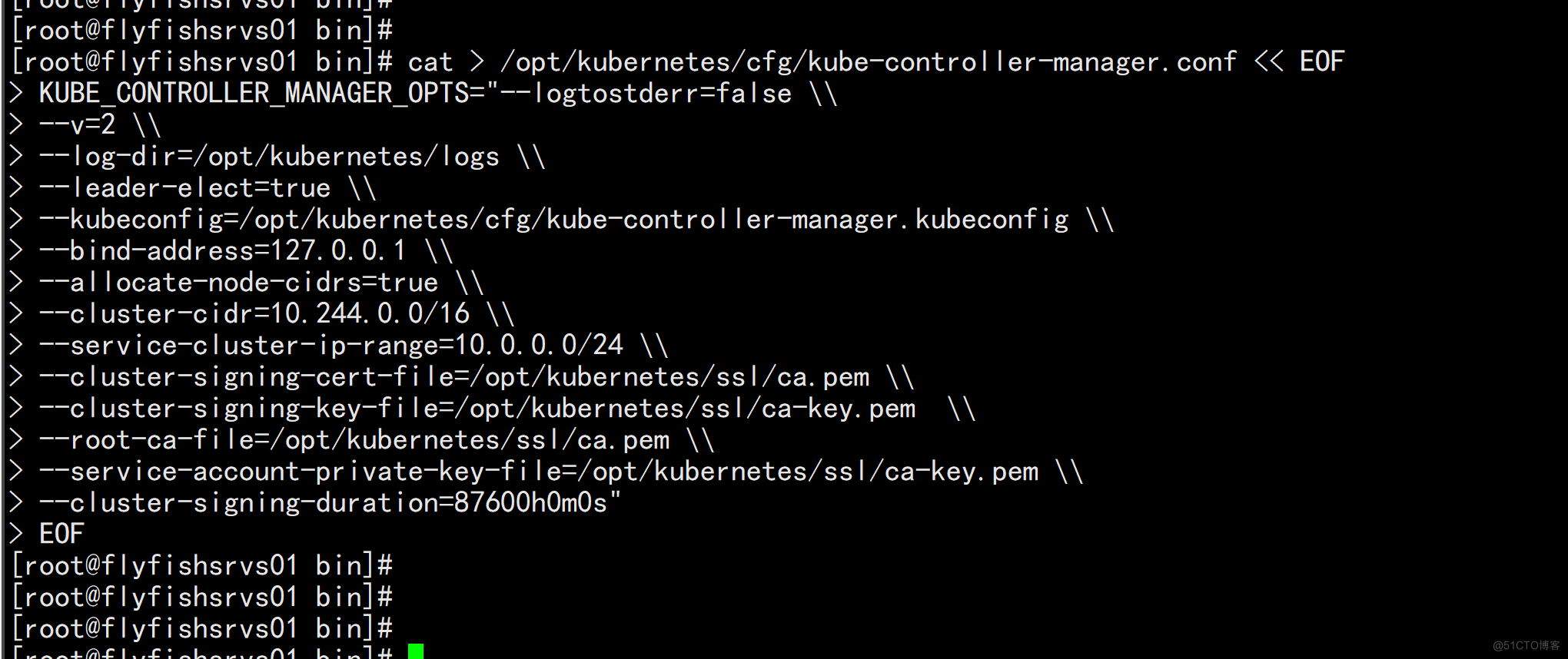

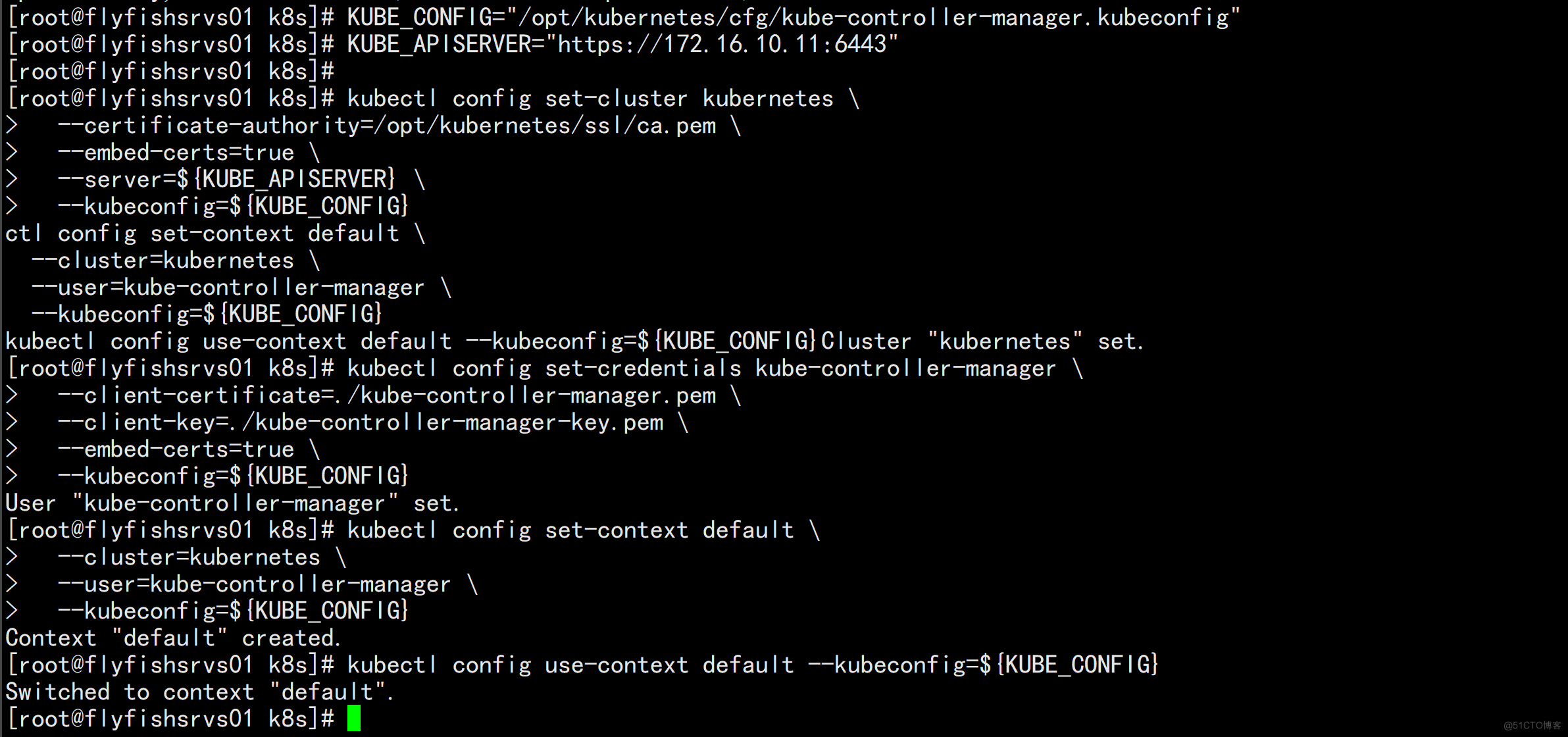

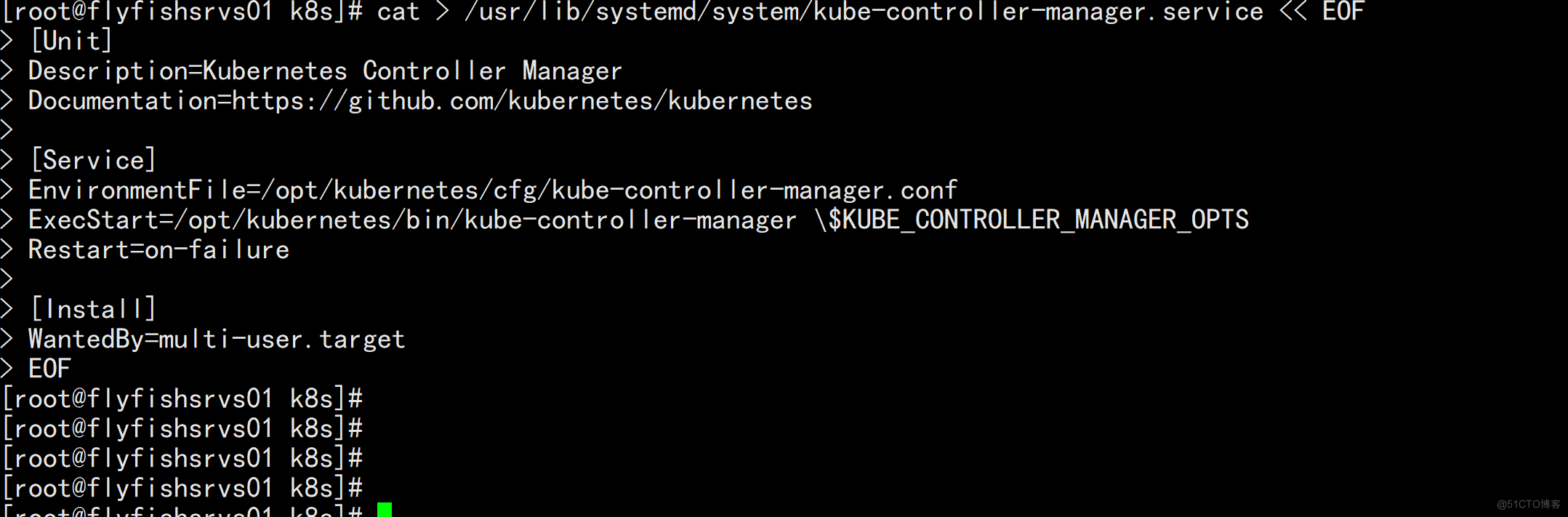

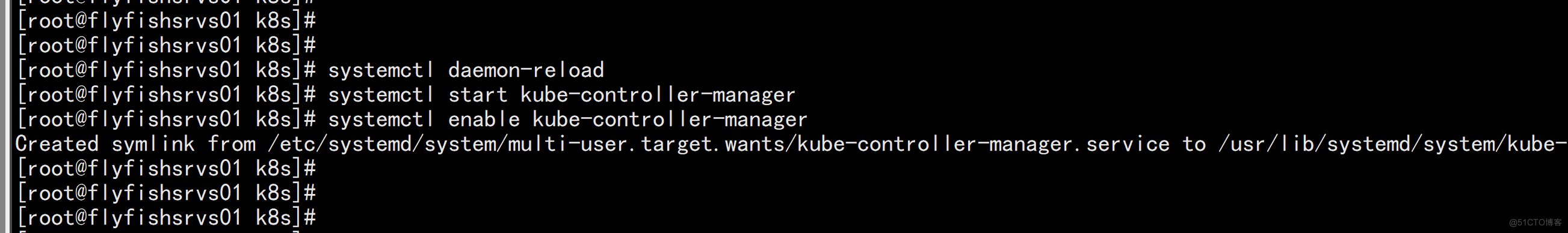

3.3.2 Deploy kube-controller-manager

1. Create the configuration file

    cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF
    KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \\
    --v=2 \\
    --log-dir=/opt/kubernetes/logs \\
    --leader-elect=true \\
    --kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
    --bind-address=127.0.0.1 \\
    --allocate-node-cidrs=true \\
    --cluster-cidr=10.244.0.0/16 \\
    --service-cluster-ip-range=10.0.0.0/24 \\
    --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
    --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --root-ca-file=/opt/kubernetes/ssl/ca.pem \\
    --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\
    --cluster-signing-duration=87600h0m0s"
    EOF

• --kubeconfig: the kubeconfig file used to connect to the apiserver
• --leader-elect: elects a leader automatically when several instances of this component run (HA)
• --cluster-signing-cert-file / --cluster-signing-key-file: the CA that automatically issues certificates to kubelets; it must be the same CA the apiserver uses

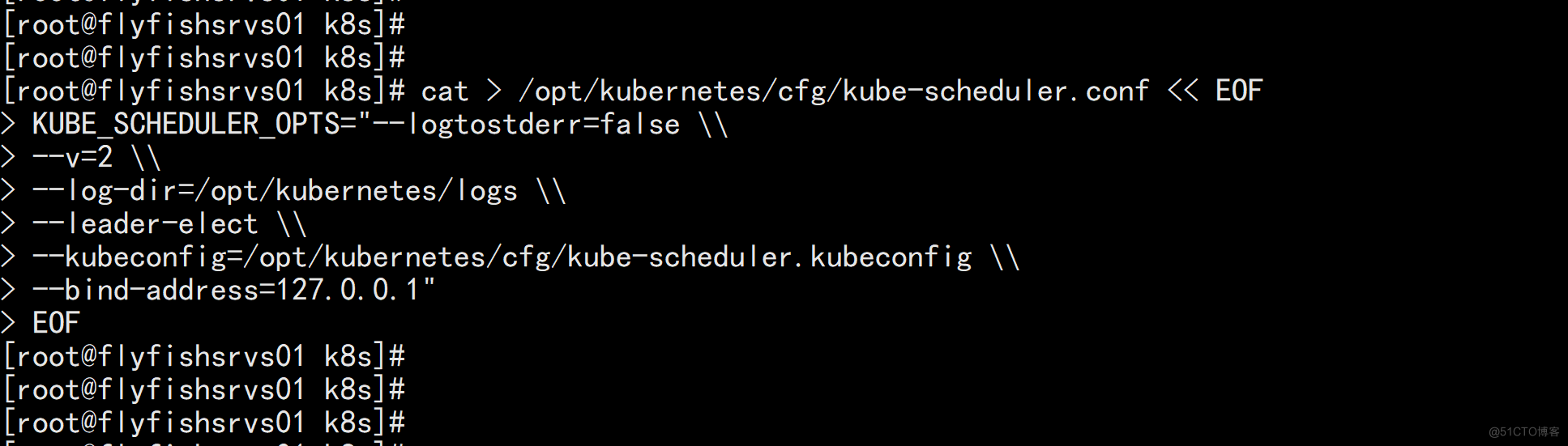

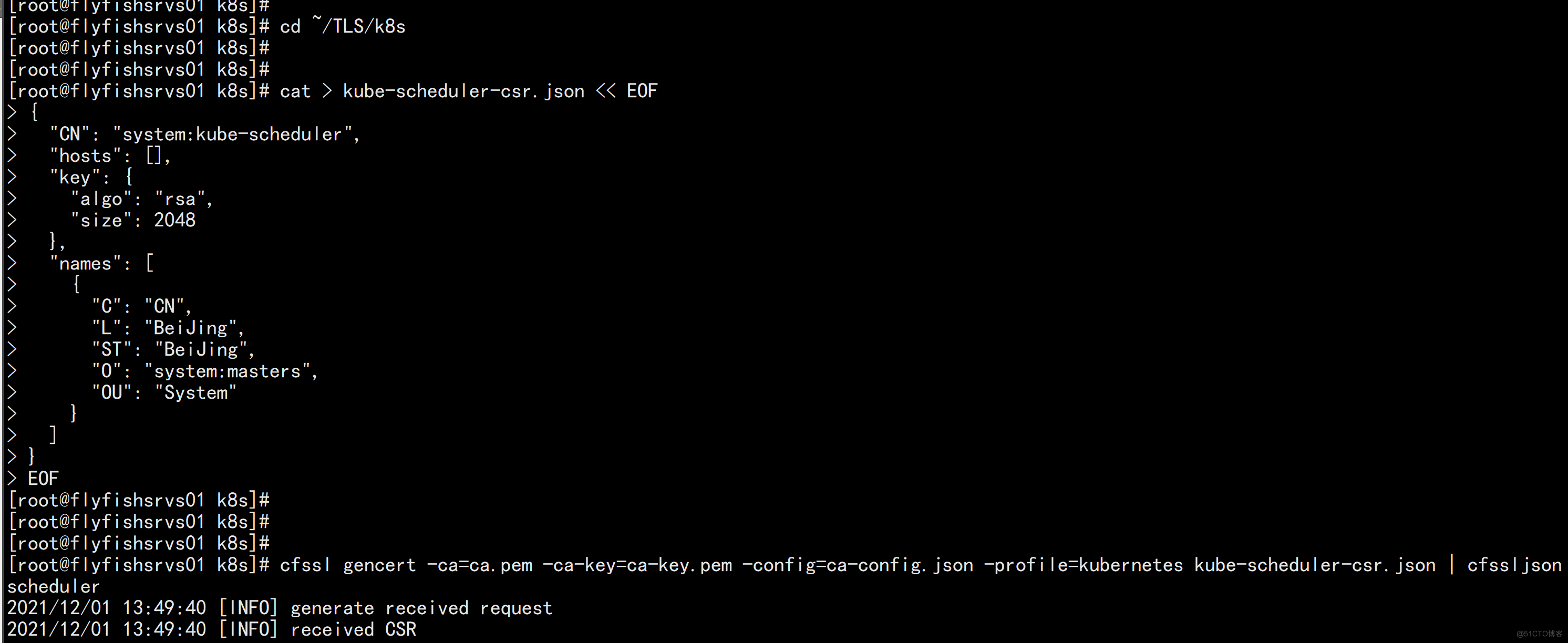

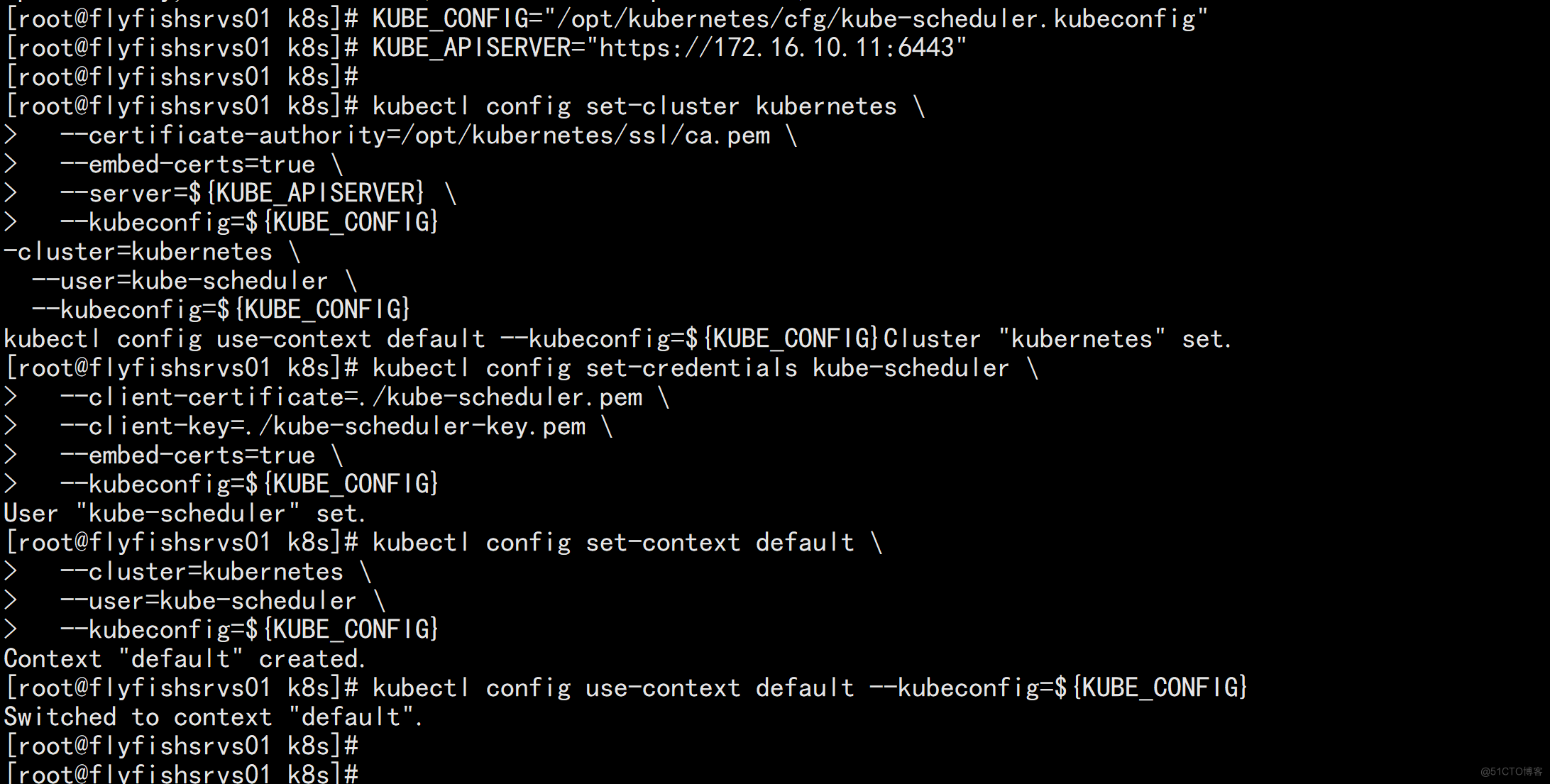

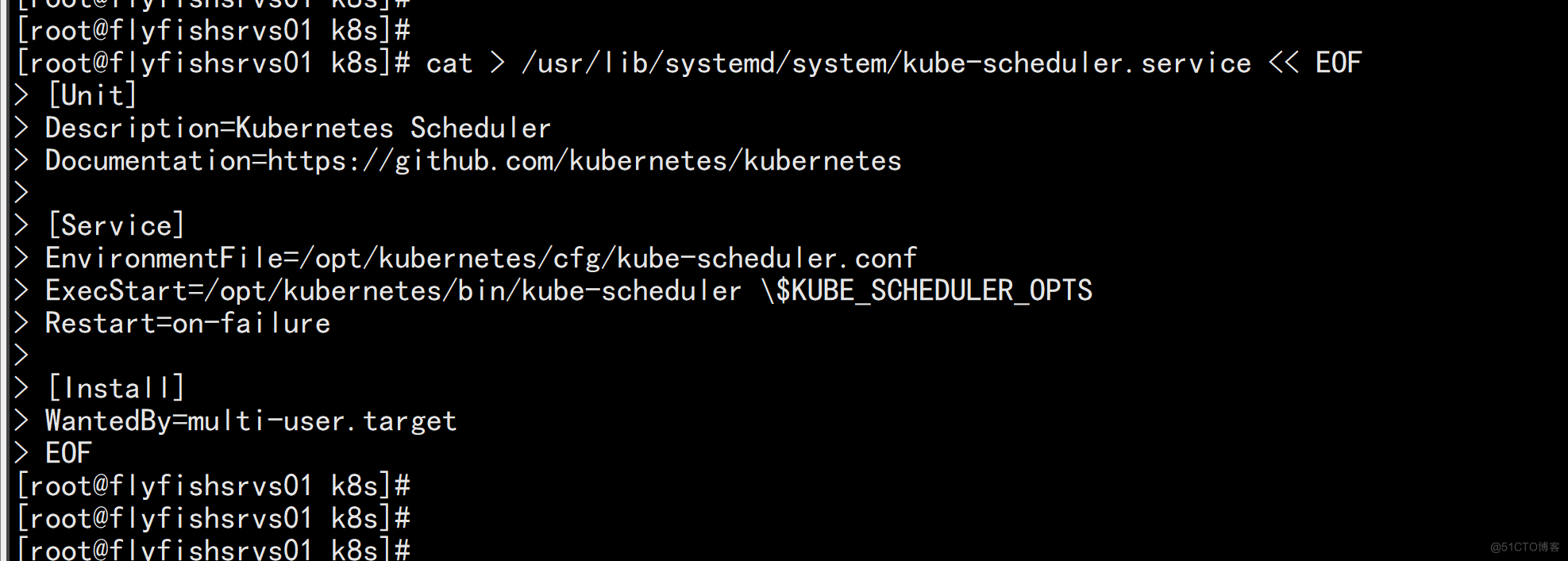

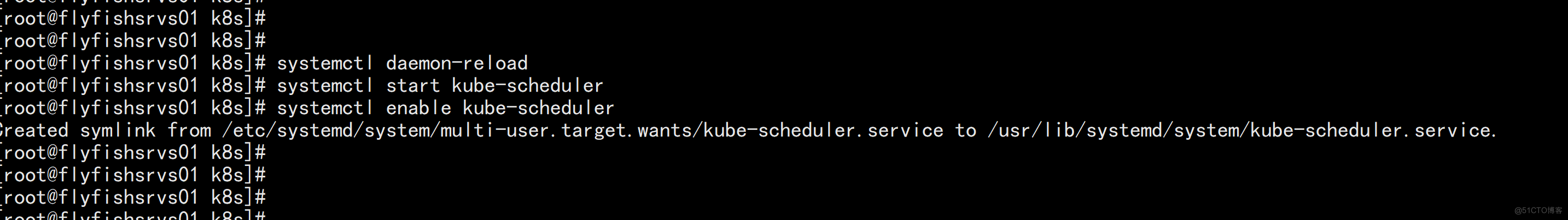

3.3.3 Deploy kube-scheduler

1. Create the configuration file

    cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF
    KUBE_SCHEDULER_OPTS="--logtostderr=false \\
    --v=2 \\
    --log-dir=/opt/kubernetes/logs \\
    --leader-elect \\
    --kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
    --bind-address=127.0.0.1"
    EOF

• --kubeconfig: the kubeconfig file used to connect to the apiserver
• --leader-elect: elects a leader automatically when several instances of this component run (HA)

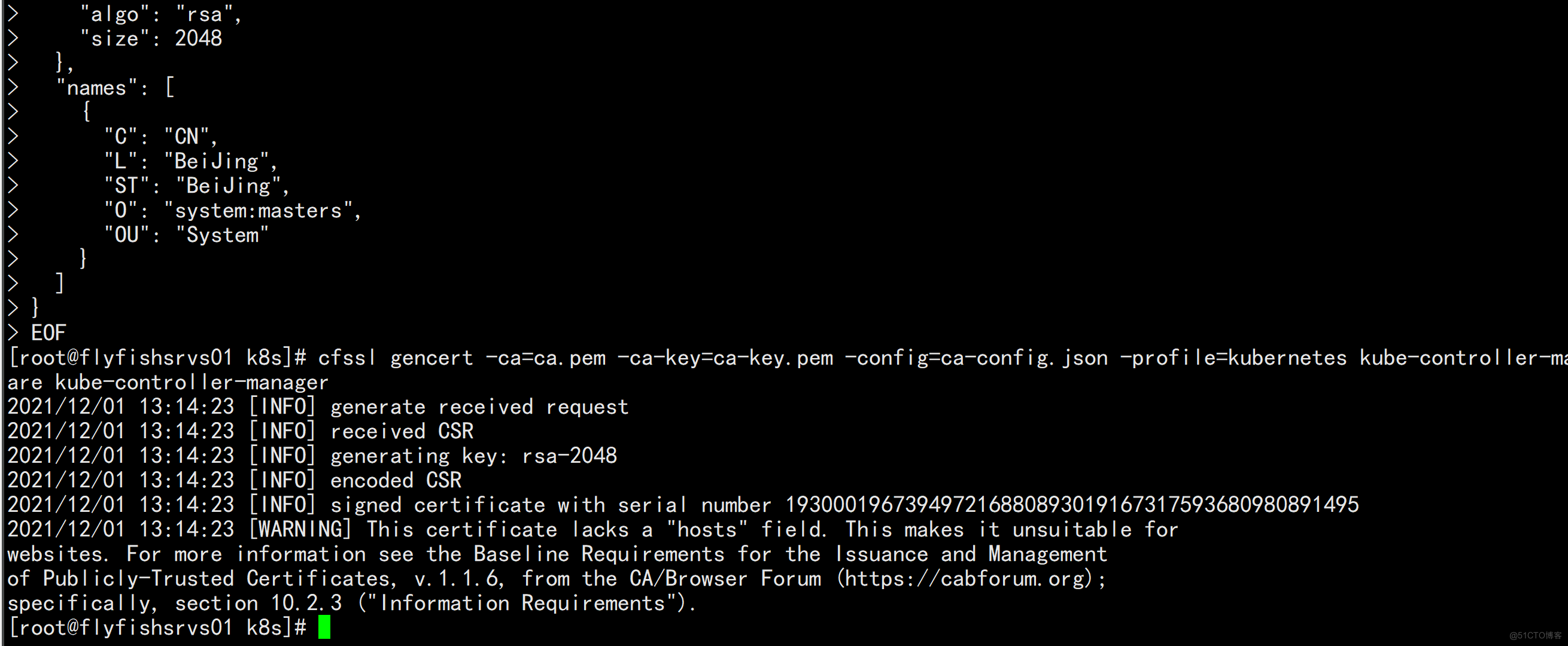

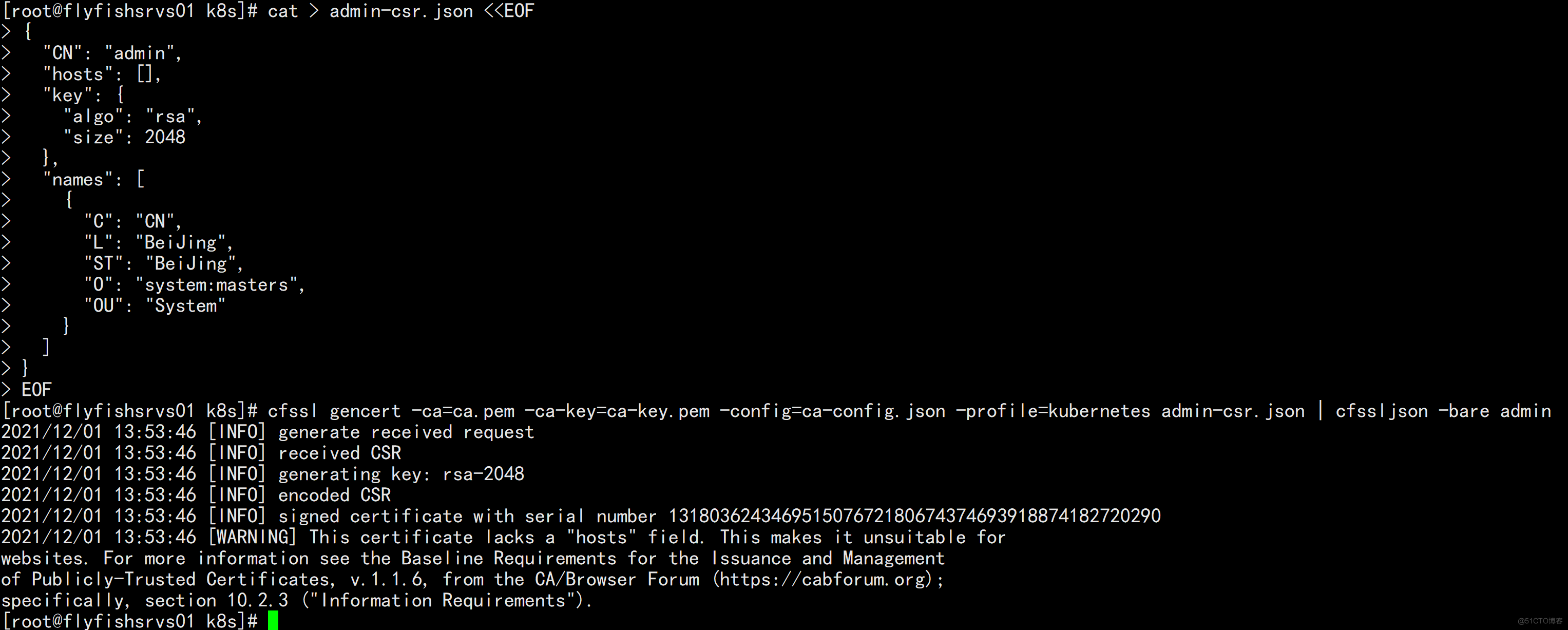

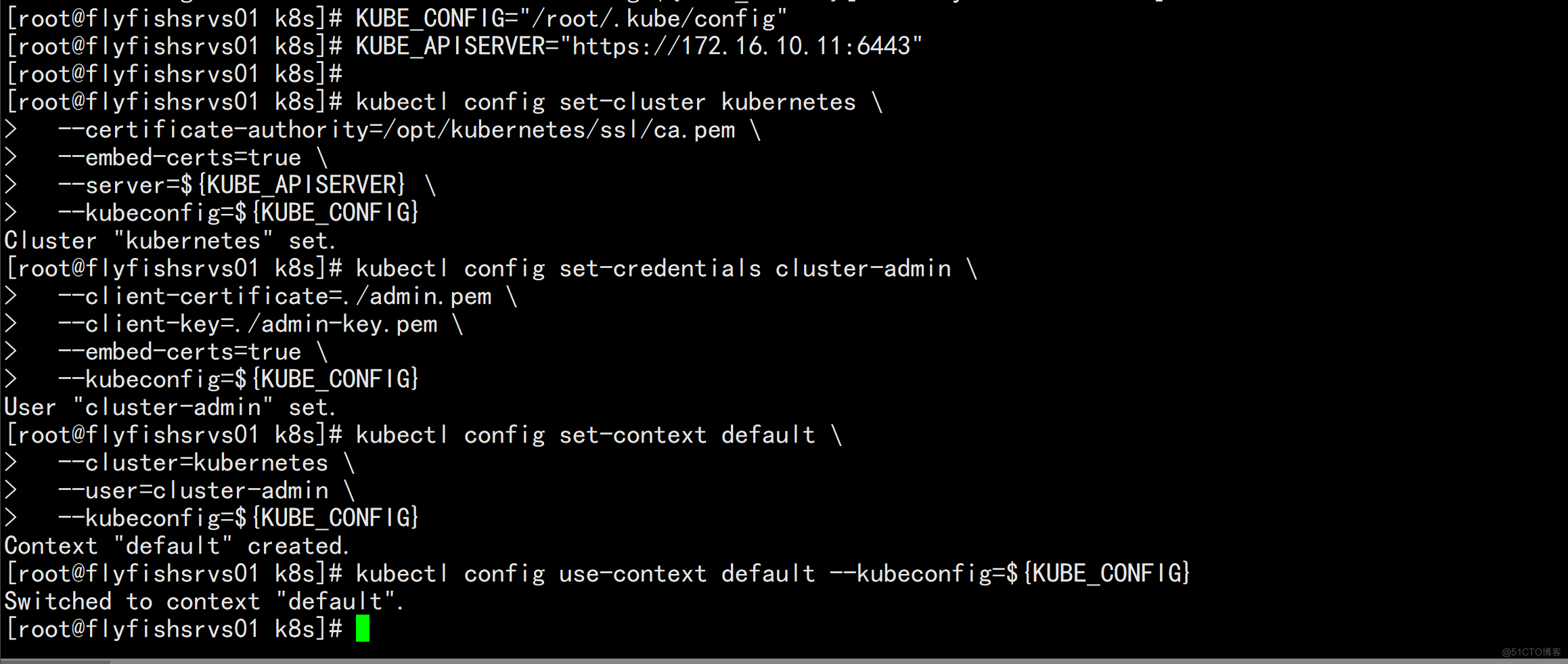

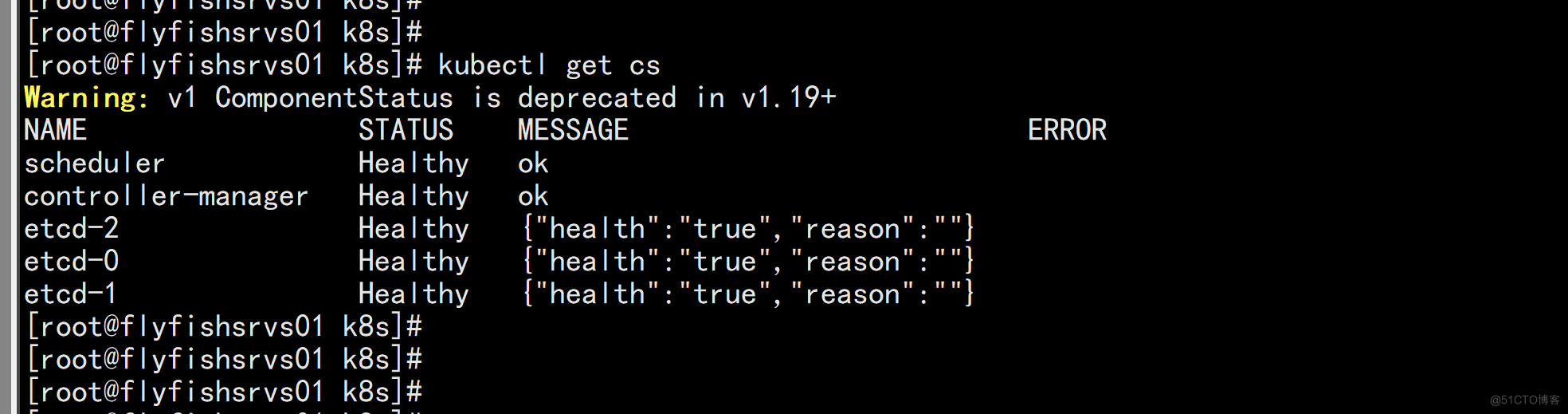

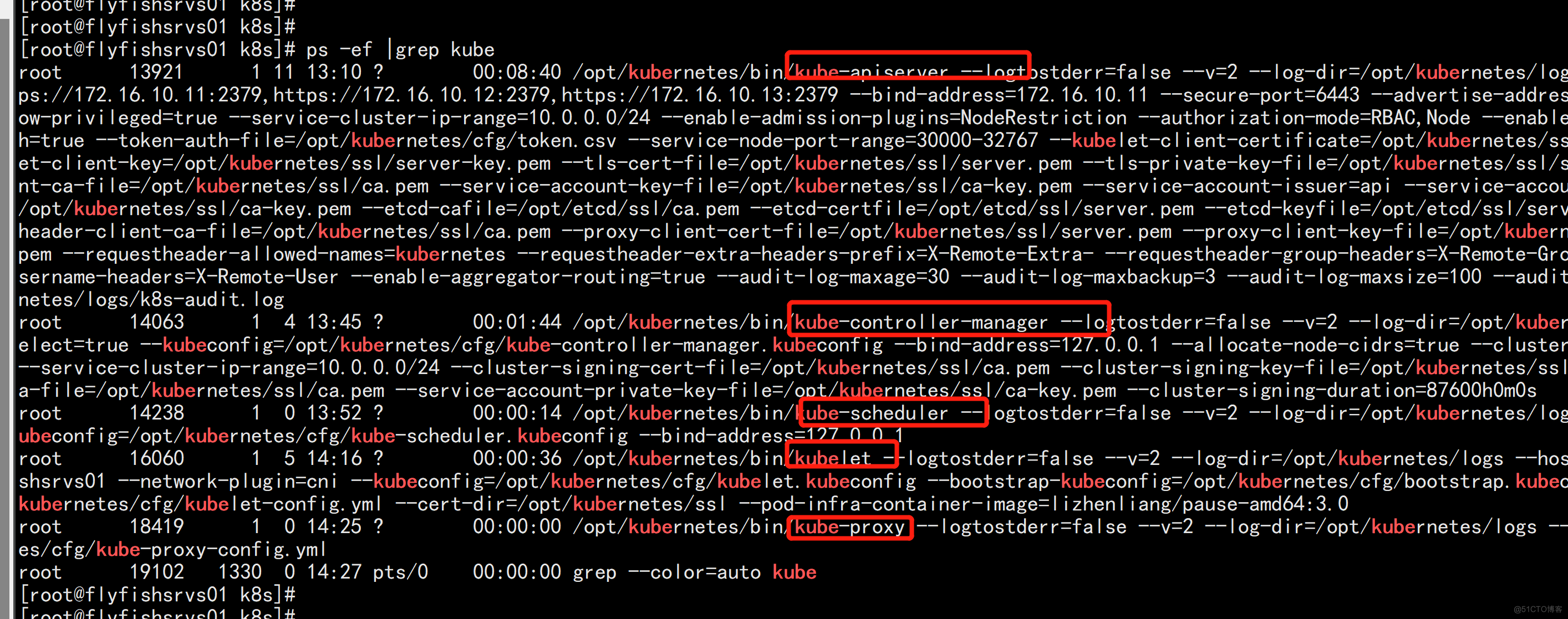

3.3.4 Check the cluster status

Generate the certificate that kubectl uses to connect to the cluster:

    cat > admin-csr.json <<EOF
    {
      "CN": "admin",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "system:masters",
          "OU": "System"
        }
      ]
    }
    EOF

    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

Part 4: Deploy the worker nodes

4.1 Create the working directory and copy the binaries

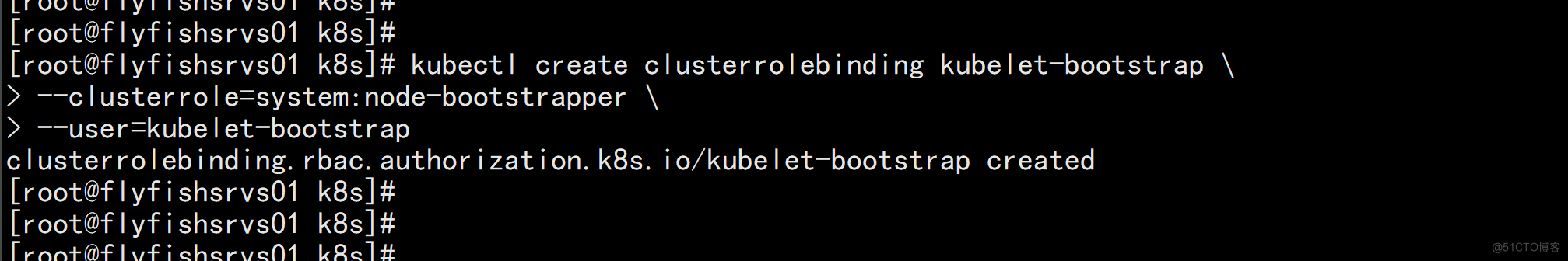

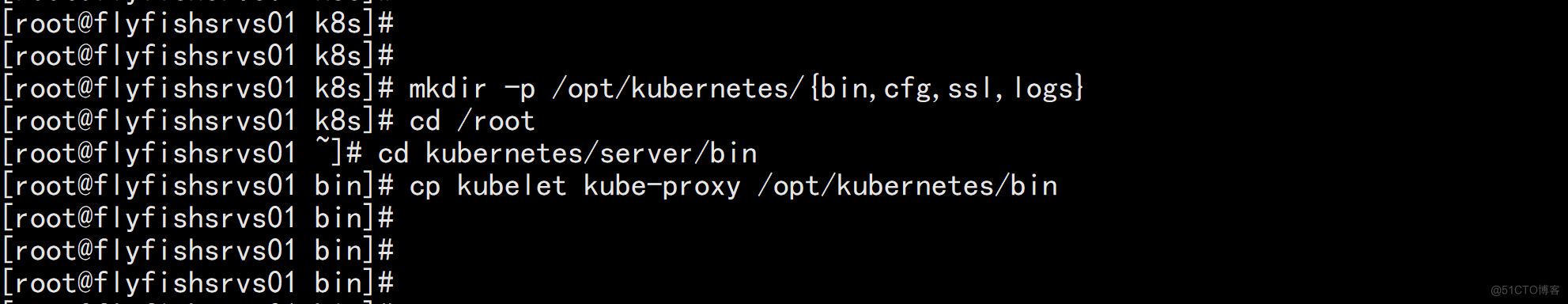

Create the working directory on every worker node:

    mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

Copy the binaries from the master node:

    cd /root
    cd kubernetes/server/bin
    cp kubelet kube-proxy /opt/kubernetes/bin   # local copy

4.2 Deploy kubelet

4.2.1 Create the configuration file

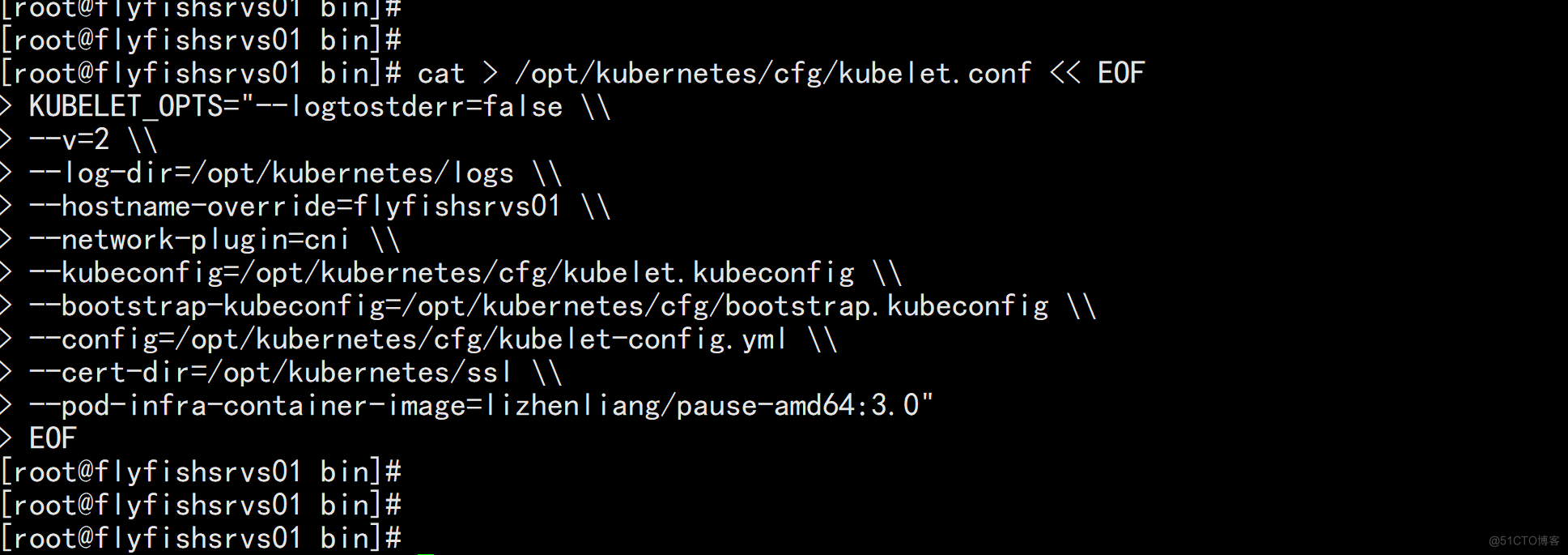

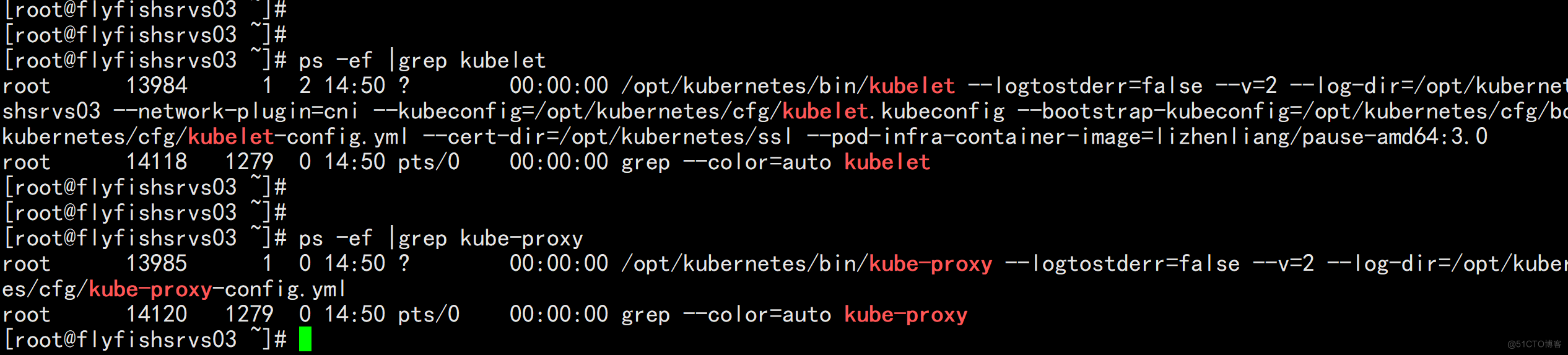

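1. Create the configuration file

    cat > /opt/kubernetes/cfg/kubelet.conf << EOF
    KUBELET_OPTS="--logtostderr=false \\
    --v=2 \\
    --log-dir=/opt/kubernetes/logs \\
    --hostname-override=flyfishsrvs01 \\
    --network-plugin=cni \\
    --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\
    --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\
    --config=/opt/kubernetes/cfg/kubelet-config.yml \\
    --cert-dir=/opt/kubernetes/ssl \\
    --pod-infra-container-image=lizhenliang/pause-amd64:3.0"
    EOF

• --hostname-override: the node's display name; it must be unique within the cluster
• --network-plugin: enables CNI
• --kubeconfig: a path that does not exist yet; the file is generated automatically and is later used to connect to the apiserver
• --bootstrap-kubeconfig: used on first start to request a certificate from the apiserver
• --config: the configuration parameter file
• --cert-dir: the directory where kubelet certificates are generated
• --pod-infra-container-image: the image of the container that manages the Pod network

4.2.2 Configuration parameter file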

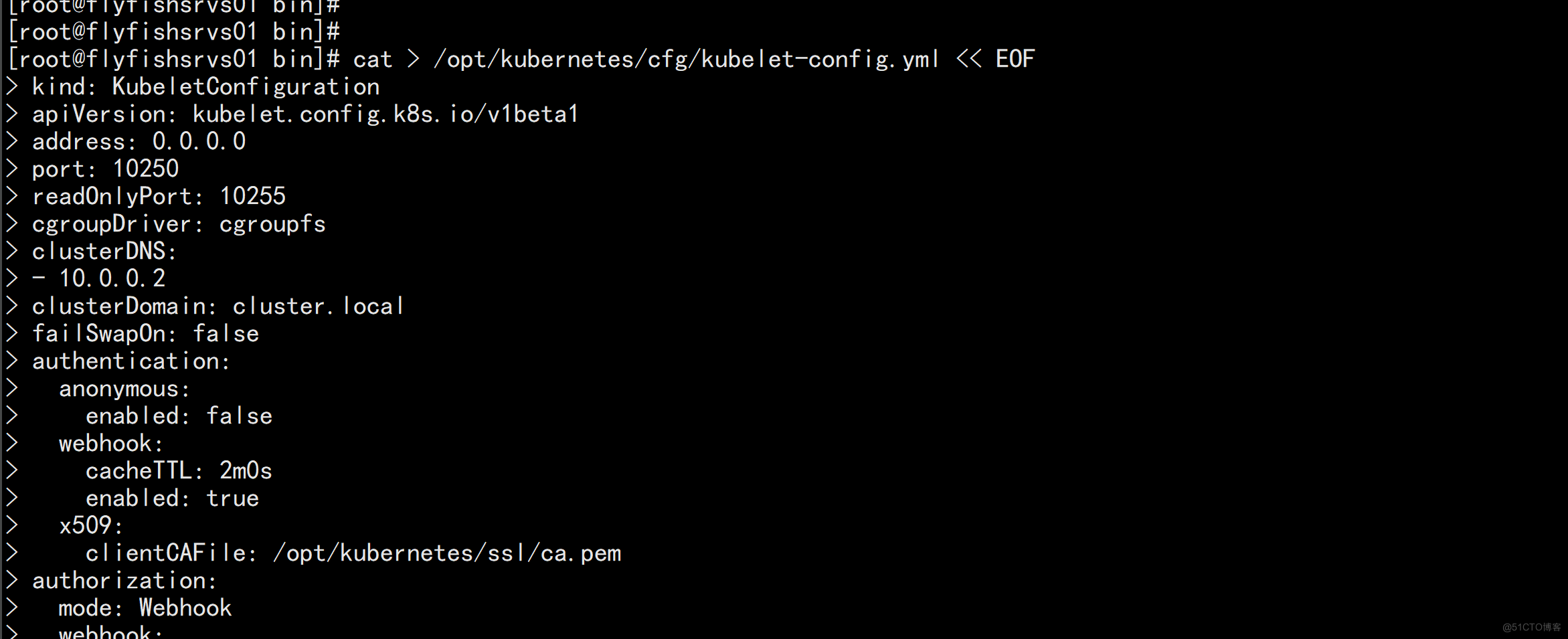

2. Configuration parameter file

    cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF
    kind: KubeletConfiguration
    apiVersion: kubelet.config.k8s.io/v1beta1
    address: 0.0.0.0
    port: 10250
    readOnlyPort: 10255
    cgroupDriver: cgroupfs
    clusterDNS:
    - 10.0.0.2
    clusterDomain: cluster.local
    failSwapOn: false
    authentication:
      anonymous:
        enabled: false
      webhook:
        cacheTTL: 2m0s
        enabled: true
      x509:
        clientCAFile: /opt/kubernetes/ssl/ca.pem
    authorization:
      mode: Webhook
      webhook:
        cacheAuthorizedTTL: 5m0s
        cacheUnauthorizedTTL: 30s
    evictionHard:
      imagefs.available: 15%
      memory.available: 100Mi
      nodefs.available: 10%
      nodefs.inodesFree: 5%
    maxOpenFiles: 1000000
    maxPods: 110
    EOF
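The `cgroupDriver` value in kubelet-config.yml has to match the cgroup driver Docker is running with, otherwise kubelet refuses to start. The sketch below reads the value from the kubelet config and compares it with `docker info`. The helper name is an illustrative assumption, and the comparison runs only when both the config file and the `docker` command are present.

```shell
#!/usr/bin/env bash
# kubelet_cgroup_driver FILE: print the cgroupDriver value from a kubelet config file.
kubelet_cgroup_driver() {
  awk '$1 == "cgroupDriver:" { print $2 }' "$1"
}

cfg=/opt/kubernetes/cfg/kubelet-config.yml
if [ -f "$cfg" ] && command -v docker > /dev/null 2>&1; then
  docker_driver="$(docker info --format '{{.CgroupDriver}}')"
  kubelet_driver="$(kubelet_cgroup_driver "$cfg")"
  if [ "$docker_driver" = "$kubelet_driver" ]; then
    echo "cgroup driver matches: $kubelet_driver"
  else
    echo "mismatch: docker=$docker_driver kubelet=$kubelet_driver" >&2
  fi
fi
```

With the daemon.json from section 1.3, which sets no `exec-opts`, Docker typically stays on `cgroupfs`, the value this guide's kubelet config uses.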

4.2.3 生成kubelet初次加入集群引导kubeconfig文件

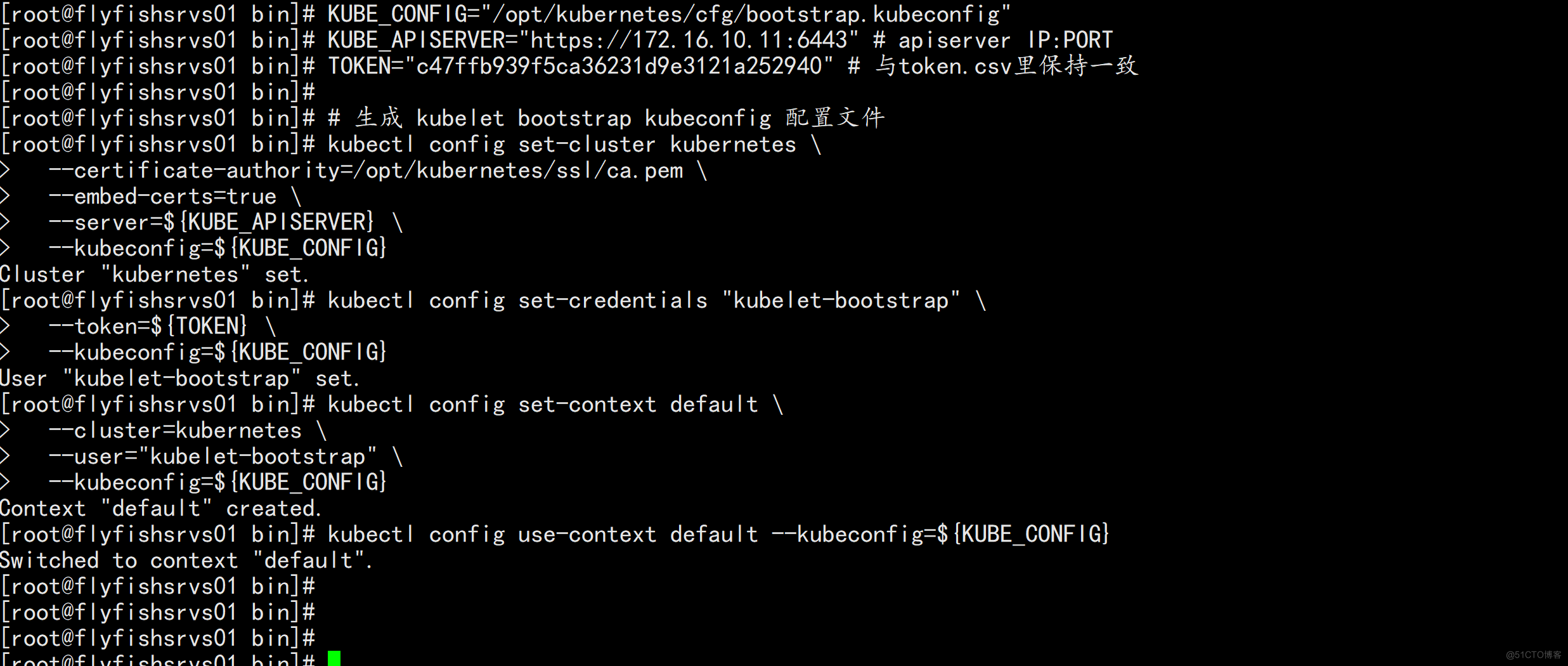

生成kubelet初次加入集群引导kubeconfig文件 KUBE_CONFIG="/opt/kubernetes/cfg/bootstrap.kubeconfig" KUBE_APISERVER="https://172.16.10.11:6443" # apiserver IP:PORT TOKEN="c47ffb939f5ca36231d9e3121a252940" # 与token.csv里保持一致 # 生成 kubelet bootstrap kubeconfig 配置文件 kubectl config set-cluster kubernetes \ --certificate-authority=/opt/kubernetes/ssl/ca.pem \ --embed-certs=true \ --server=${KUBE_APISERVER} \ --kubeconfig=${KUBE_CONFIG} kubectl config set-credentials "kubelet-bootstrap" \ --token=${TOKEN} \ --kubeconfig=${KUBE_CONFIG} kubectl config set-context default \ --cluster=kubernetes \ --user="kubelet-bootstrap" \ --kubeconfig=${KUBE_CONFIG} kubectl config use-context default --kubeconfig=${KUBE_CONFIG}
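Because the `TOKEN` in the bootstrap kubeconfig has to be identical to the one in token.csv, a quick comparison before starting kubelet can save a debugging round. This is a minimal sketch; `first_token` is a hypothetical helper that assumes the bootstrap token is the first record of the file.

```shell
#!/usr/bin/env bash
# first_token FILE: token field (column 1) of the first record in a static token file.
first_token() {
  head -n 1 "$1" | cut -d, -f1
}

TOKEN="c47ffb939f5ca36231d9e3121a252940"   # value used in this section
TOKEN_FILE=/opt/kubernetes/cfg/token.csv

if [ -f "$TOKEN_FILE" ]; then
  if [ "$(first_token "$TOKEN_FILE")" = "$TOKEN" ]; then
    echo "TOKEN matches $TOKEN_FILE"
  else
    echo "TOKEN differs from $TOKEN_FILE" >&2
  fi
fi
```

If the two values differ, the apiserver rejects the kubelet's bootstrap request as unauthenticated and no CSR appears in `kubectl get csr`.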

4.2.4 systemd管理kubelet

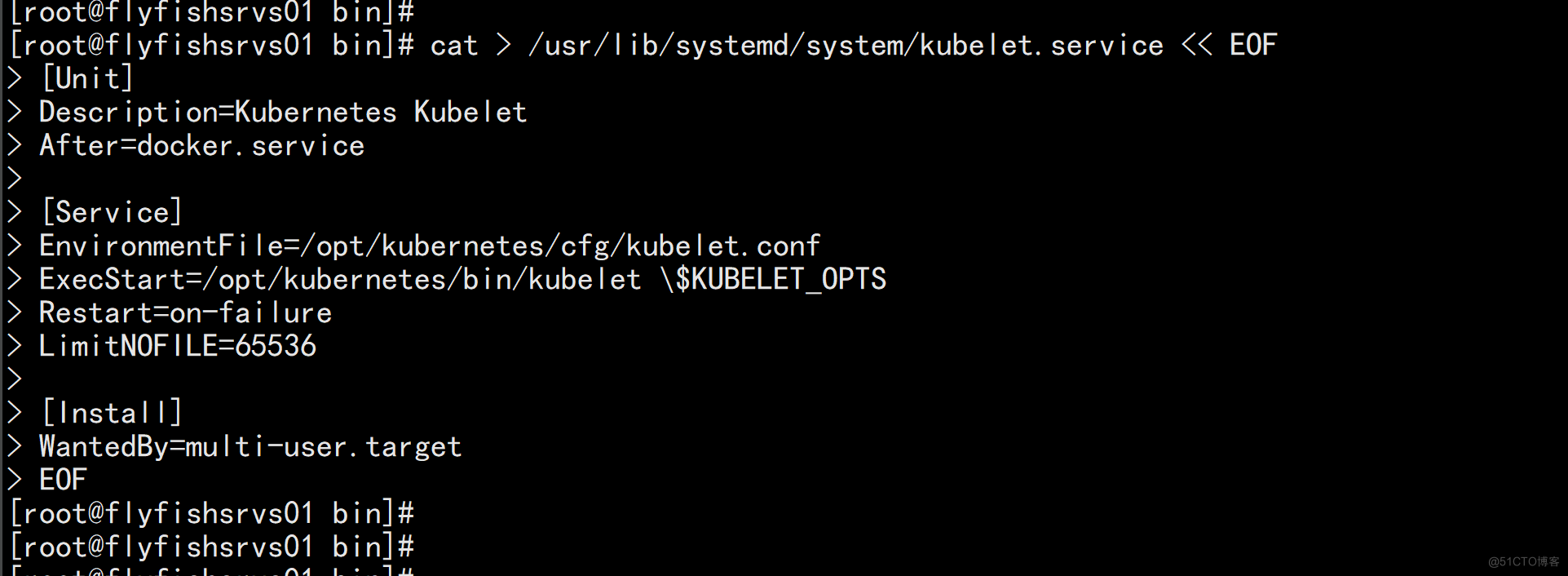

4. systemd管理kubelet cat > /usr/lib/systemd/system/kubelet.service << EOF [Unit] Description=Kubernetes Kubelet After=docker.service [Service] EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS Restart=on-failure LimitNOFILE=65536 [Install] WantedBy=multi-user.target EOF

4.2.5 启动kubelet

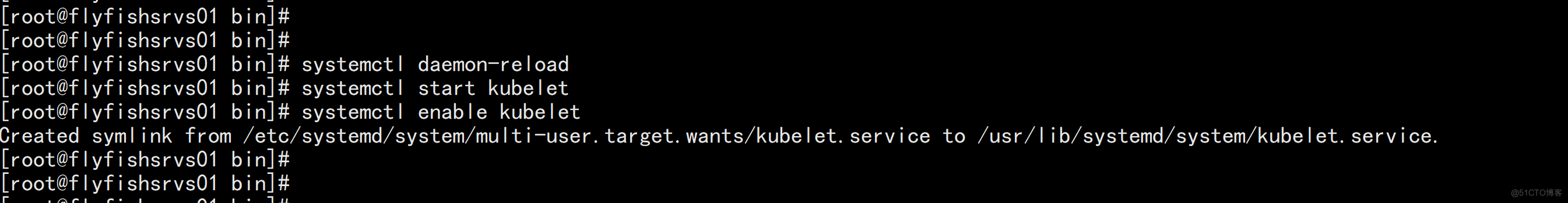

启动并设置开机启动 systemctl daemon-reload systemctl start kubelet systemctl enable kubelet

4.2.6 批准kubelet证书申请并加入集群

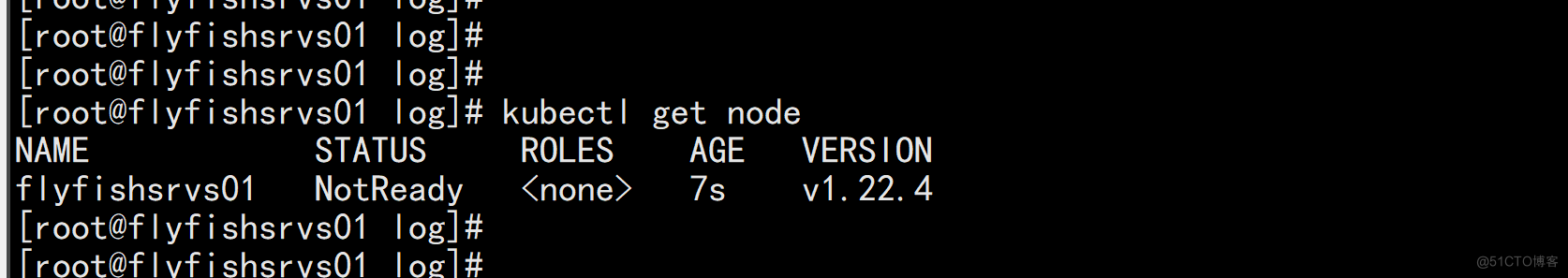

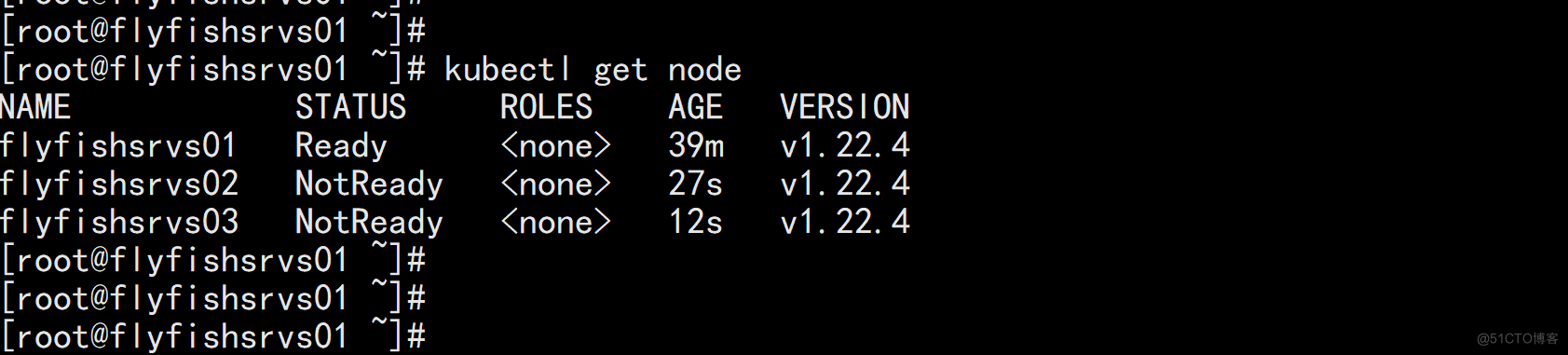

批准kubelet证书申请并加入集群 # 查看kubelet证书请求 kubectl get csr NAME AGE SIGNERNAME REQUESTOR CONDITION node-csr-JwE4AmKjdtZ0D3zmJMTTigshOUXmZlEtghwyKZT9WKA 6m3s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending # 批准申请 kubectl certificate approve node-csr-JwE4AmKjdtZ0D3zmJMTTigshOUXmZlEtghwyKZT9WKA # 查看节点 kubectl get node NAME STATUS ROLES AGE VERSION flyfishsrvs01 NotReady <none> 7s v1.22.4 注:由于网络插件还没有部署,节点会没有准备就绪 NotReady

Part 5: Deploy kube-proxy

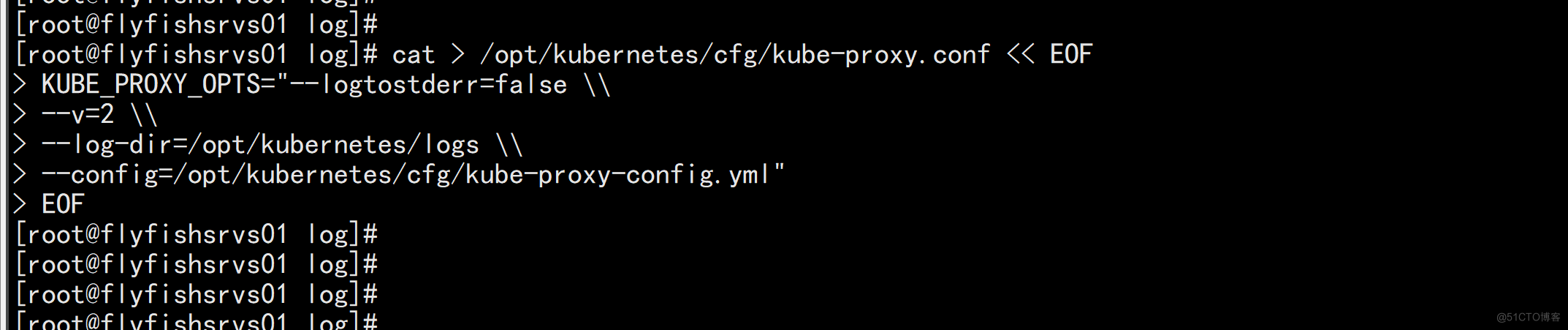

5.1.1 Create the configuration file

    cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF
    KUBE_PROXY_OPTS="--logtostderr=false \\
    --v=2 \\
    --log-dir=/opt/kubernetes/logs \\
    --config=/opt/kubernetes/cfg/kube-proxy-config.yml"
    EOF

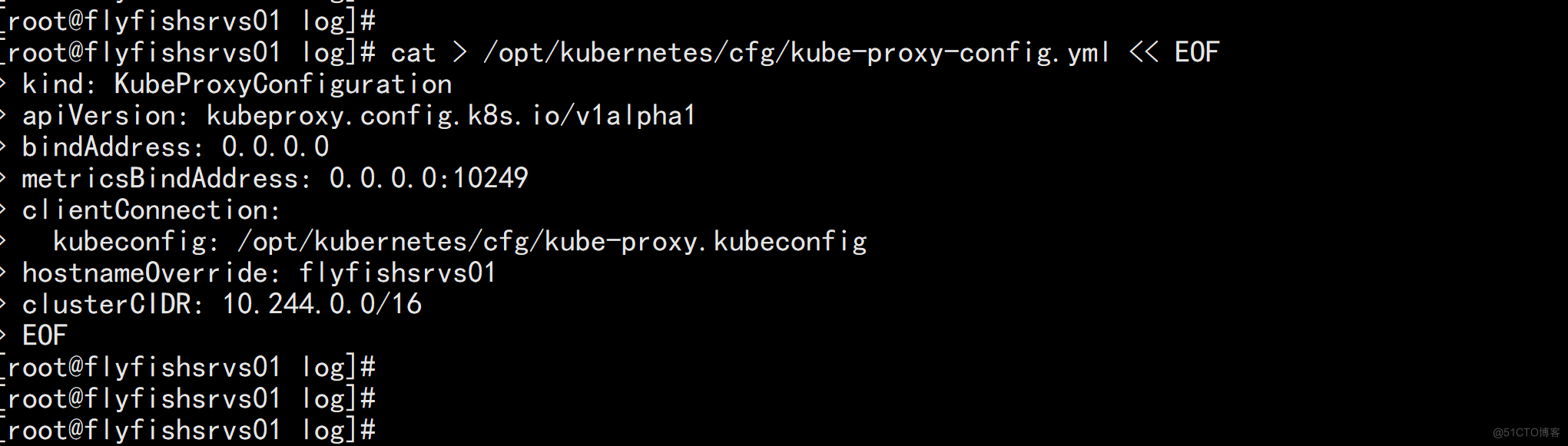

5.1.2 Configuration parameter file

    cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF
    kind: KubeProxyConfiguration
    apiVersion: kubeproxy.config.k8s.io/v1alpha1
    bindAddress: 0.0.0.0
    metricsBindAddress: 0.0.0.0:10249
    clientConnection:
      kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig
    hostnameOverride: flyfishsrvs01
    clusterCIDR: 10.244.0.0/16
    EOF

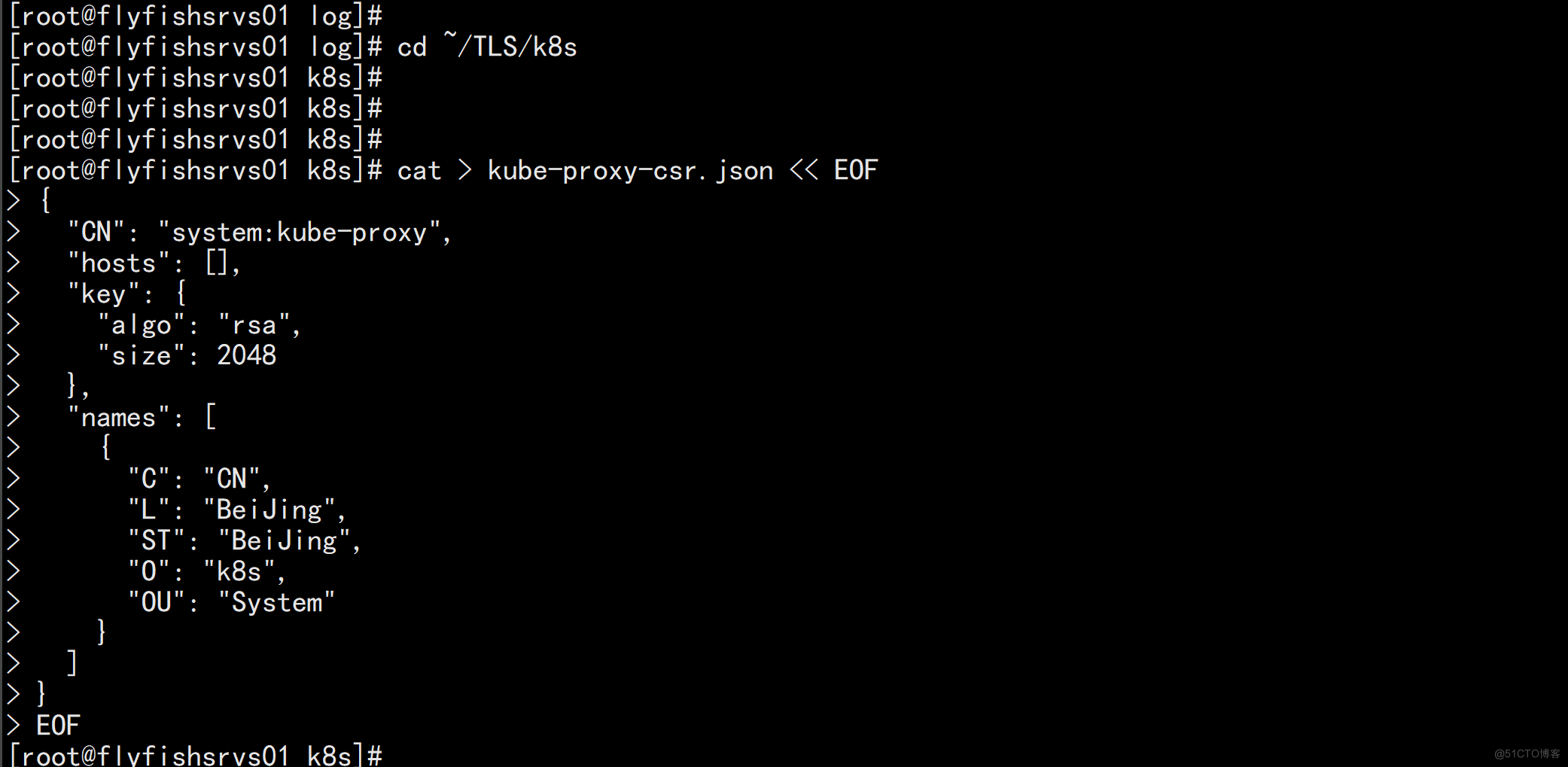

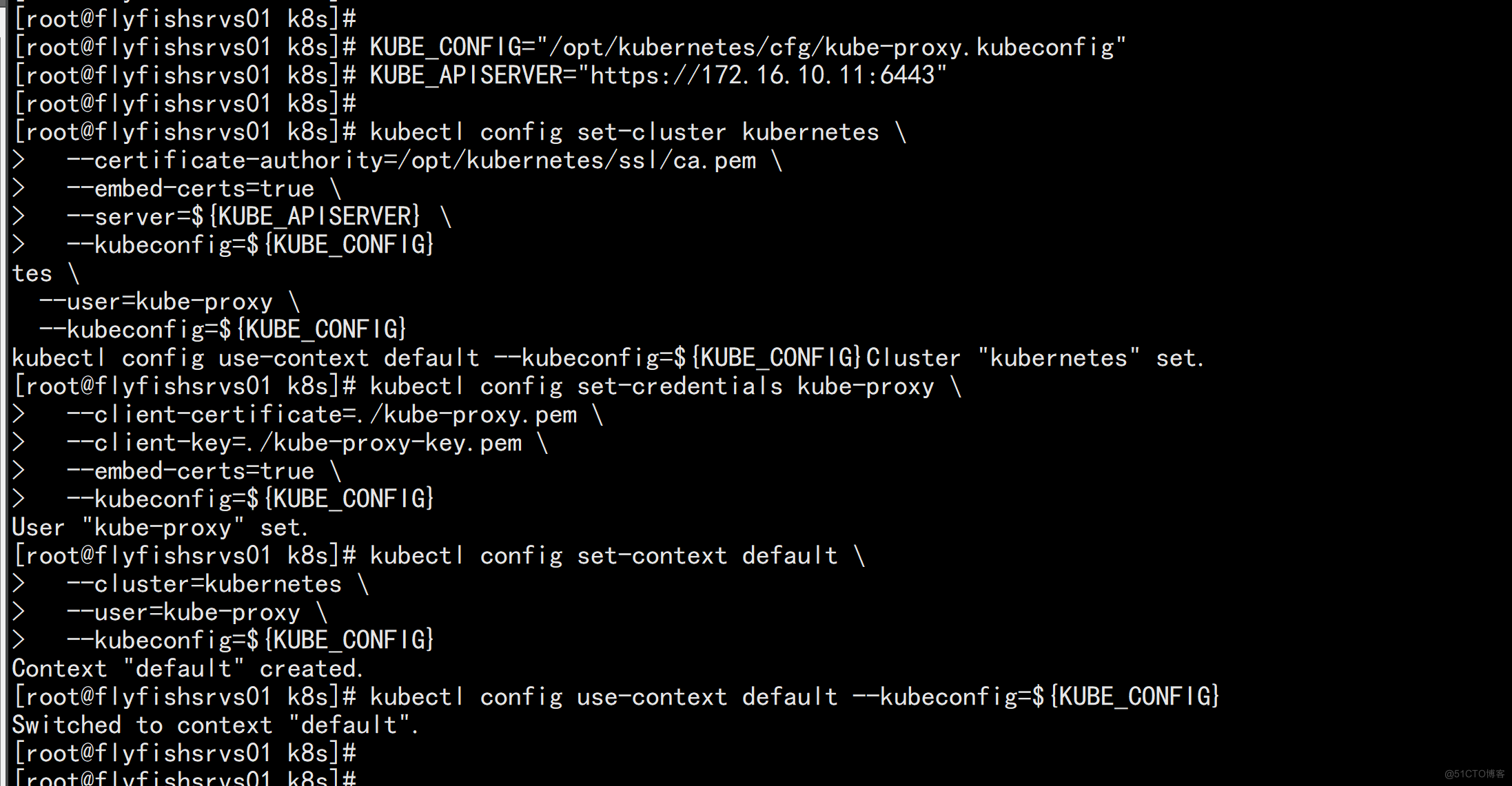

5.1.3 Generate the kube-proxy.kubeconfig file

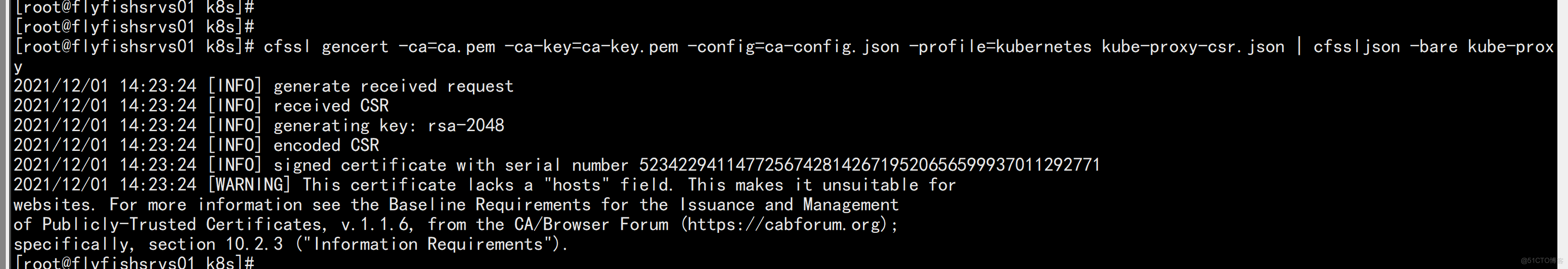

    # Switch to the working directory
    cd ~/TLS/k8s

    # Create the certificate signing request file
    cat > kube-proxy-csr.json << EOF
    {
      "CN": "system:kube-proxy",
      "hosts": [],
      "key": {
        "algo": "rsa",
        "size": 2048
      },
      "names": [
        {
          "C": "CN",
          "L": "BeiJing",
          "ST": "BeiJing",
          "O": "k8s",
          "OU": "System"
        }
      ]
    }
    EOF

    # Generate the certificate
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

Generate the kubeconfig file:

    KUBE_CONFIG="/opt/kubernetes/cfg/kube-proxy.kubeconfig"
    KUBE_APISERVER="https://172.16.10.11:6443"

    kubectl config set-cluster kubernetes \
      --certificate-authority=/opt/kubernetes/ssl/ca.pem \
      --embed-certs=true \
      --server=${KUBE_APISERVER} \
      --kubeconfig=${KUBE_CONFIG}
    kubectl config set-credentials kube-proxy \
      --client-certificate=./kube-proxy.pem \
      --client-key=./kube-proxy-key.pem \
      --embed-certs=true \
      --kubeconfig=${KUBE_CONFIG}
    kubectl config set-context default \
      --cluster=kubernetes \
      --user=kube-proxy \
      --kubeconfig=${KUBE_CONFIG}
    kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

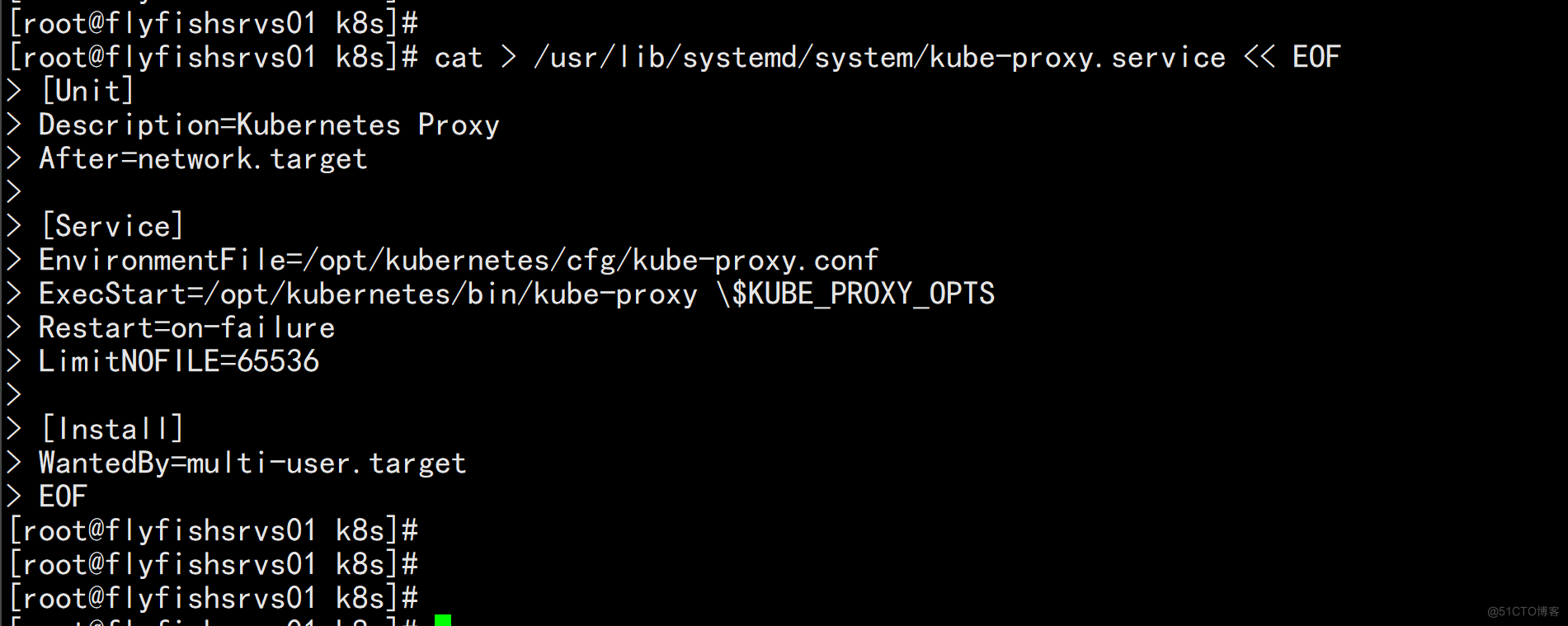

5.1.4 Manage kube-proxy with systemd

    cat > /usr/lib/systemd/system/kube-proxy.service << EOF
    [Unit]
    Description=Kubernetes Proxy
    After=network.target

    [Service]
    EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf
    ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

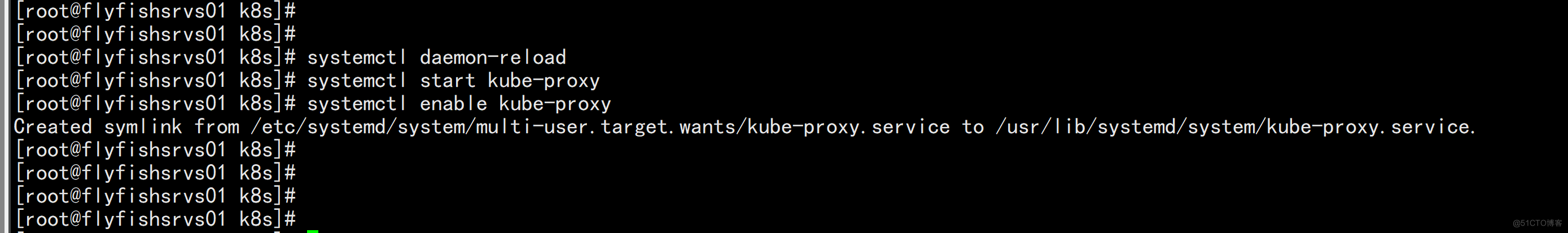

5.1.5 Start kube-proxy

Start the service and enable it at boot:

    systemctl daemon-reload
    systemctl start kube-proxy
    systemctl enable kube-proxy

Part 6: Deploy the network plugin

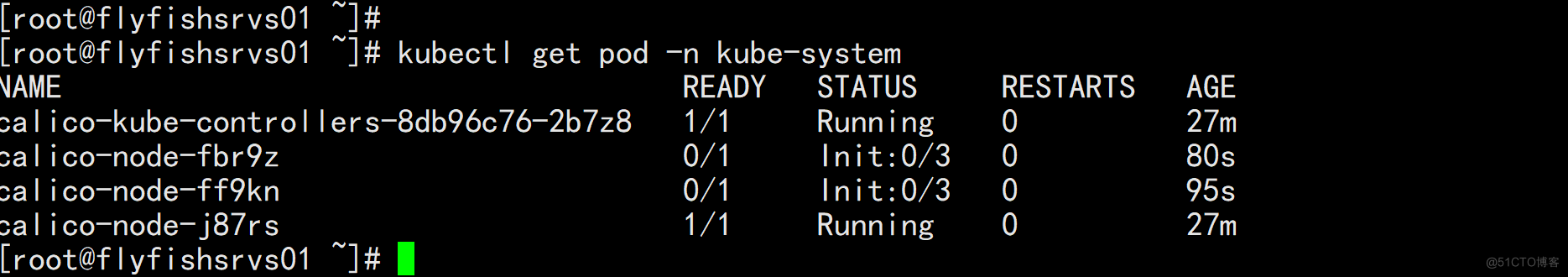

6.1 The Calico network plugin

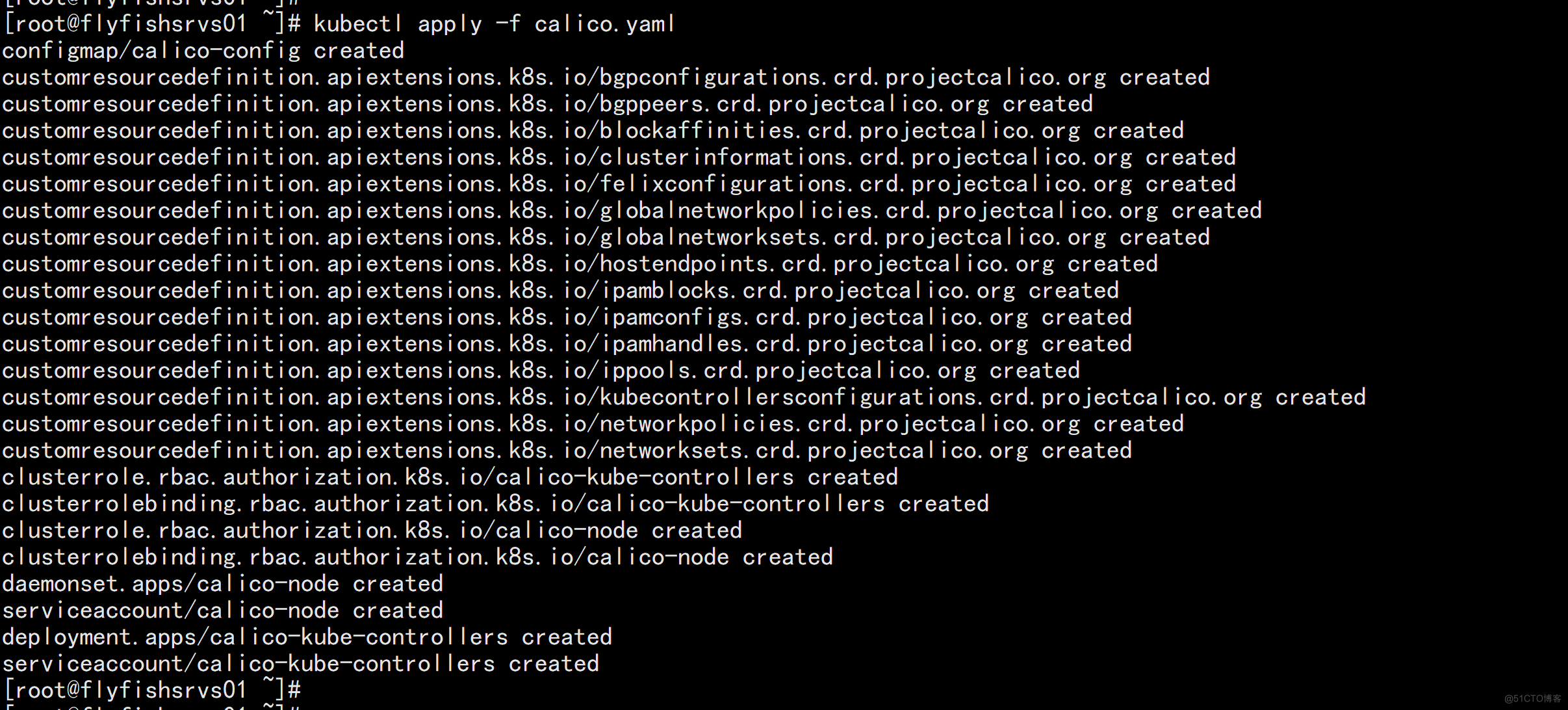

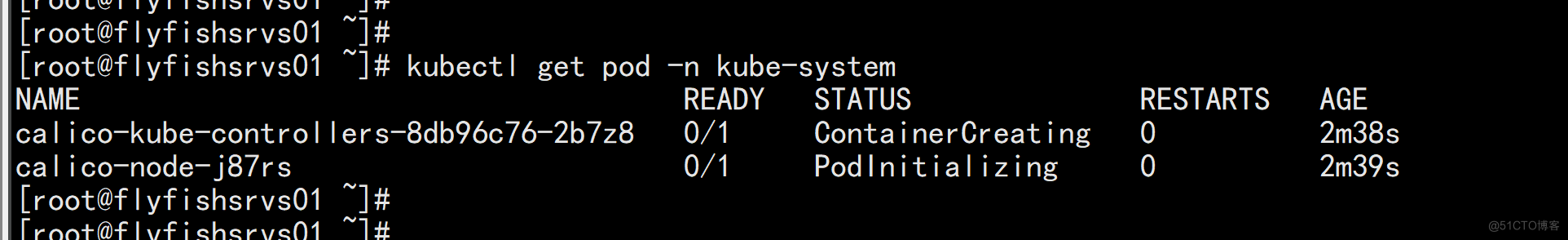

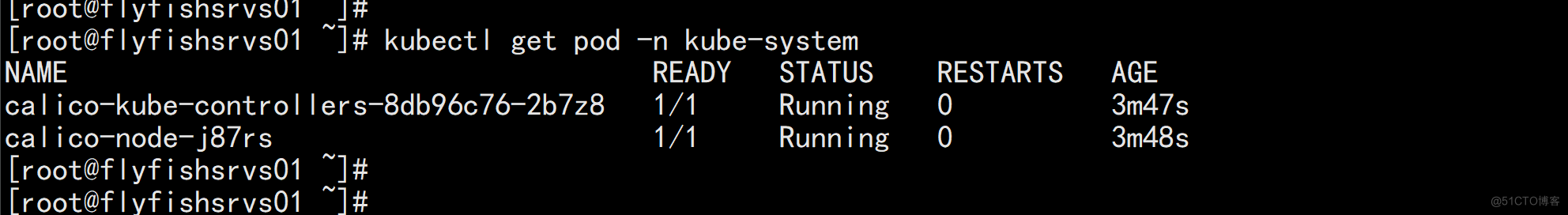

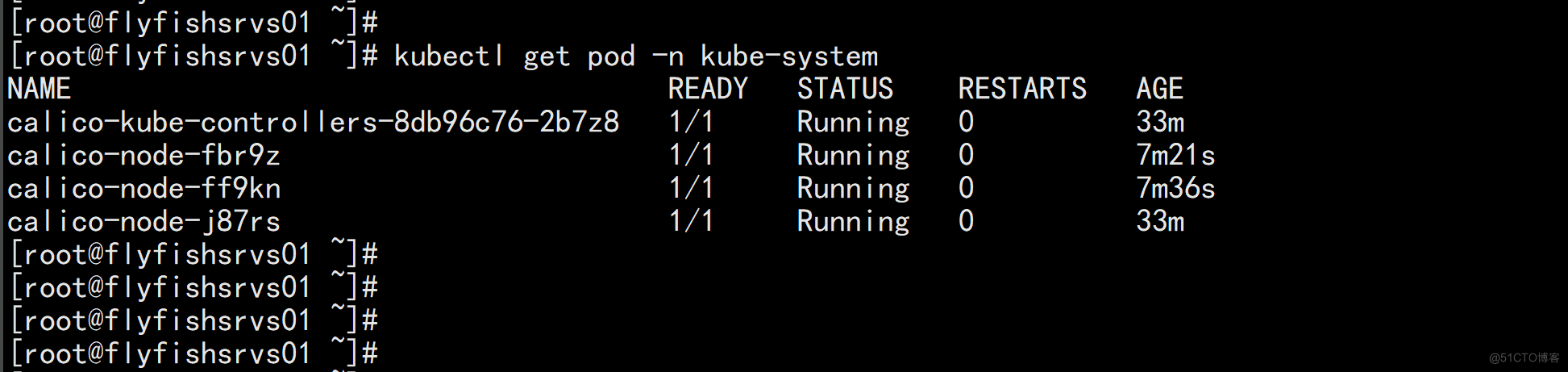

Calico is a pure layer-3 data center networking solution and a mainstream networking option for Kubernetes today.

Deploy Calico:

    kubectl apply -f calico.yaml
    kubectl get pods -n kube-system

Once all Calico Pods are Running, the node becomes Ready as well:

    kubectl get node
    NAME            STATUS   ROLES    AGE   VERSION
    flyfishsrvs01   Ready    <none>   37m   v1.22.4

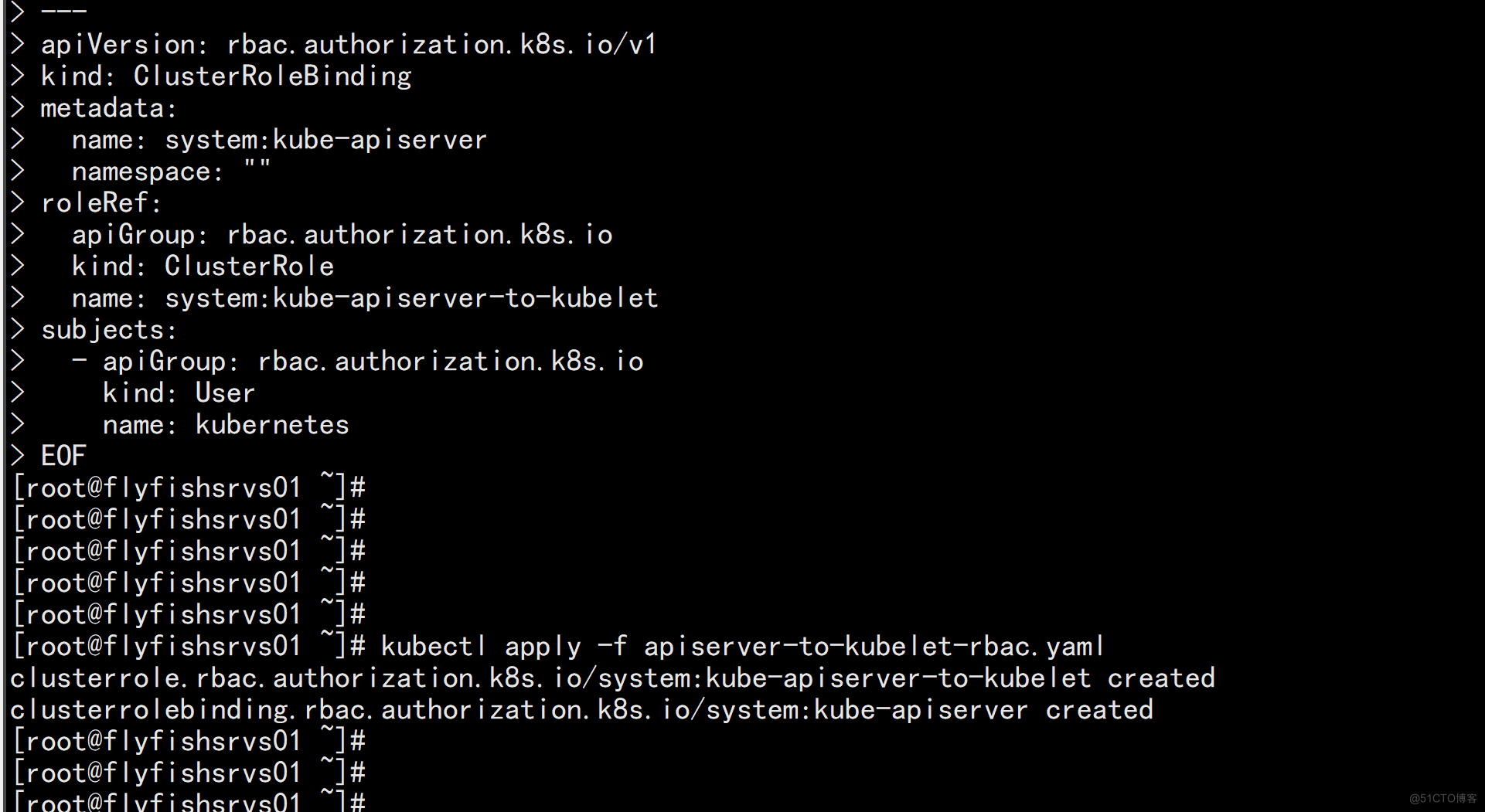

6.2 Authorize the apiserver to access kubelet

Use case: commands such as kubectl logs.

    cat > apiserver-to-kubelet-rbac.yaml << EOF
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      annotations:
        rbac.authorization.kubernetes.io/autoupdate: "true"
      labels:
        kubernetes.io/bootstrapping: rbac-defaults
      name: system:kube-apiserver-to-kubelet
    rules:
      - apiGroups:
          - ""
        resources:
          - nodes/proxy
          - nodes/stats
          - nodes/log
          - nodes/spec
          - nodes/metrics
          - pods/log
        verbs:
          - "*"
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: system:kube-apiserver
      namespace: ""
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: system:kube-apiserver-to-kubelet
    subjects:
      - apiGroup: rbac.authorization.k8s.io
        kind: User
        name: kubernetes
    EOF

    kubectl apply -f apiserver-to-kubelet-rbac.yaml

Part 7: Add a new worker node

7.1 Synchronize the configuration files

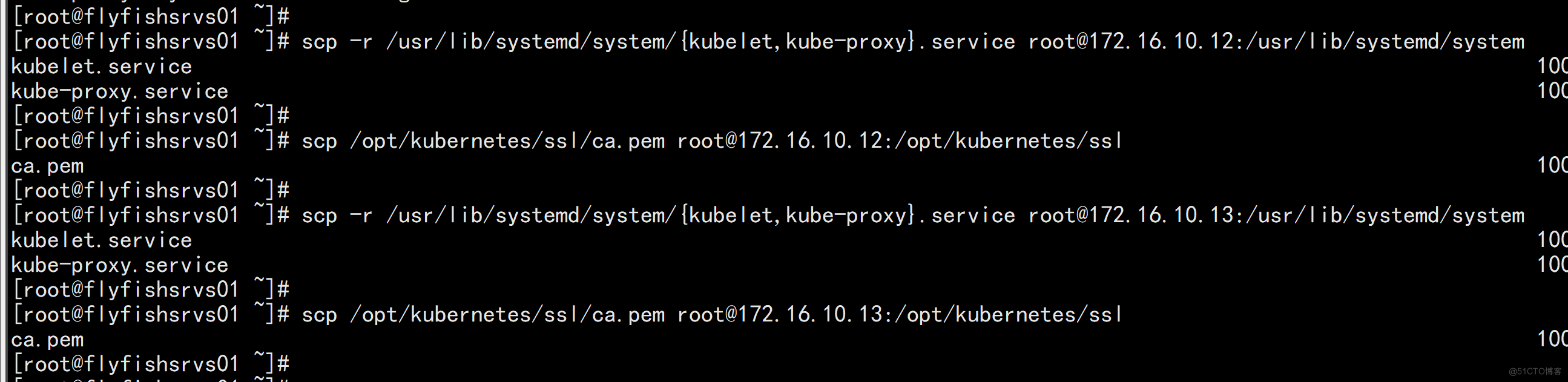

1. Copy the files of an already deployed node to the new nodes

On the master node, copy the worker node files to the new nodes 172.16.10.12 and 172.16.10.13:

    scp -r /opt/kubernetes root@172.16.10.12:/opt/
    scp /opt/kubernetes/ssl/ca.pem root@172.16.10.12:/opt/kubernetes/ssl
    scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@172.16.10.12:/usr/lib/systemd/system

    scp -r /opt/kubernetes root@172.16.10.13:/opt/
    scp /opt/kubernetes/ssl/ca.pem root@172.16.10.13:/opt/kubernetes/ssl
    scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@172.16.10.13:/usr/lib/systemd/system
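When more nodes are added later, repeating the same three `scp` commands per node becomes error-prone. One possible approach, sketched here, is to generate the commands from a node list and review them before running anything. The `NEW_NODES` variable and the `sync_commands` helper are assumptions for illustration.

```shell
#!/usr/bin/env bash
# New worker nodes to receive the files (from /etc/hosts in section 1.1).
NEW_NODES="172.16.10.12 172.16.10.13"

# sync_commands: print (not run) the copy commands for every new node.
sync_commands() {
  local ip
  for ip in $NEW_NODES; do
    echo "scp -r /opt/kubernetes root@${ip}:/opt/"
    echo "scp /opt/kubernetes/ssl/ca.pem root@${ip}:/opt/kubernetes/ssl"
    echo "scp /usr/lib/systemd/system/kubelet.service /usr/lib/systemd/system/kube-proxy.service root@${ip}:/usr/lib/systemd/system"
  done
}

# Dry run: review the generated commands.
sync_commands
# To execute them after review: sync_commands | bash
```

Printing first and piping to `bash` second keeps the destructive step explicit, which is useful because the recursive copy overwrites `/opt/kubernetes` on the target.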

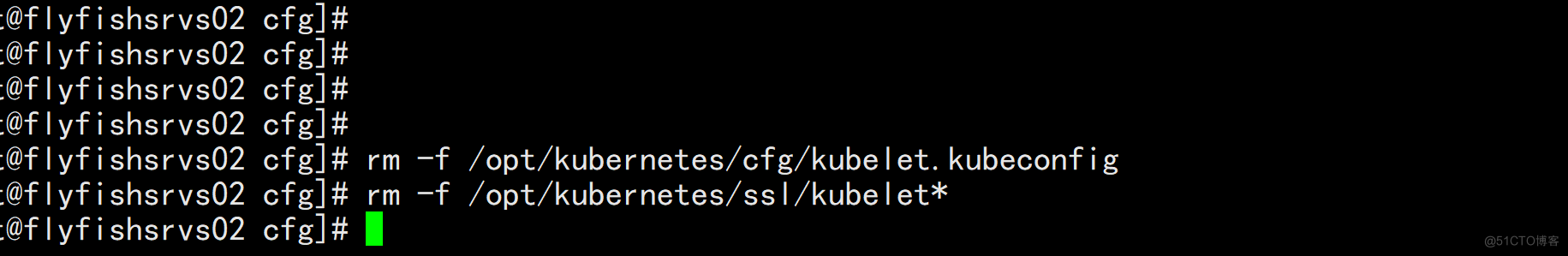

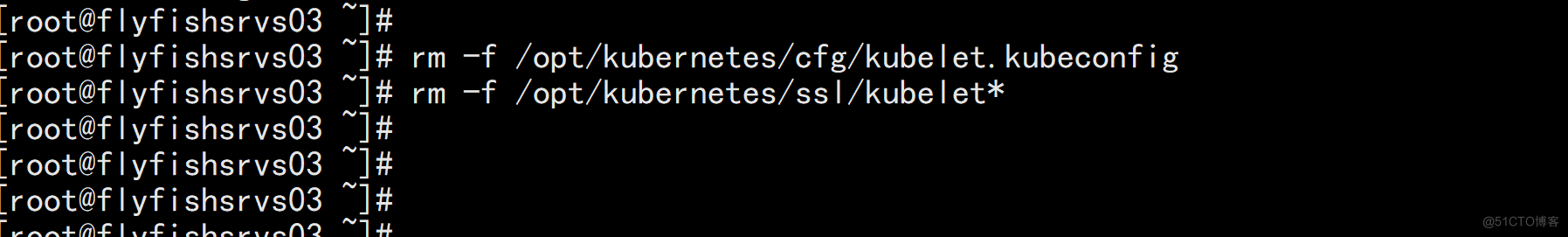

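7.2 Delete the kubelet certificates and kubeconfig file

    rm -f /opt/kubernetes/cfg/kubelet.kubeconfig
    rm -f /opt/kubernetes/ssl/kubelet*
    rm -rf /opt/kubernetes/logs/*

Note: these files are generated automatically once the certificate request is approved and are different on every node, so they must be deleted on the new node.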

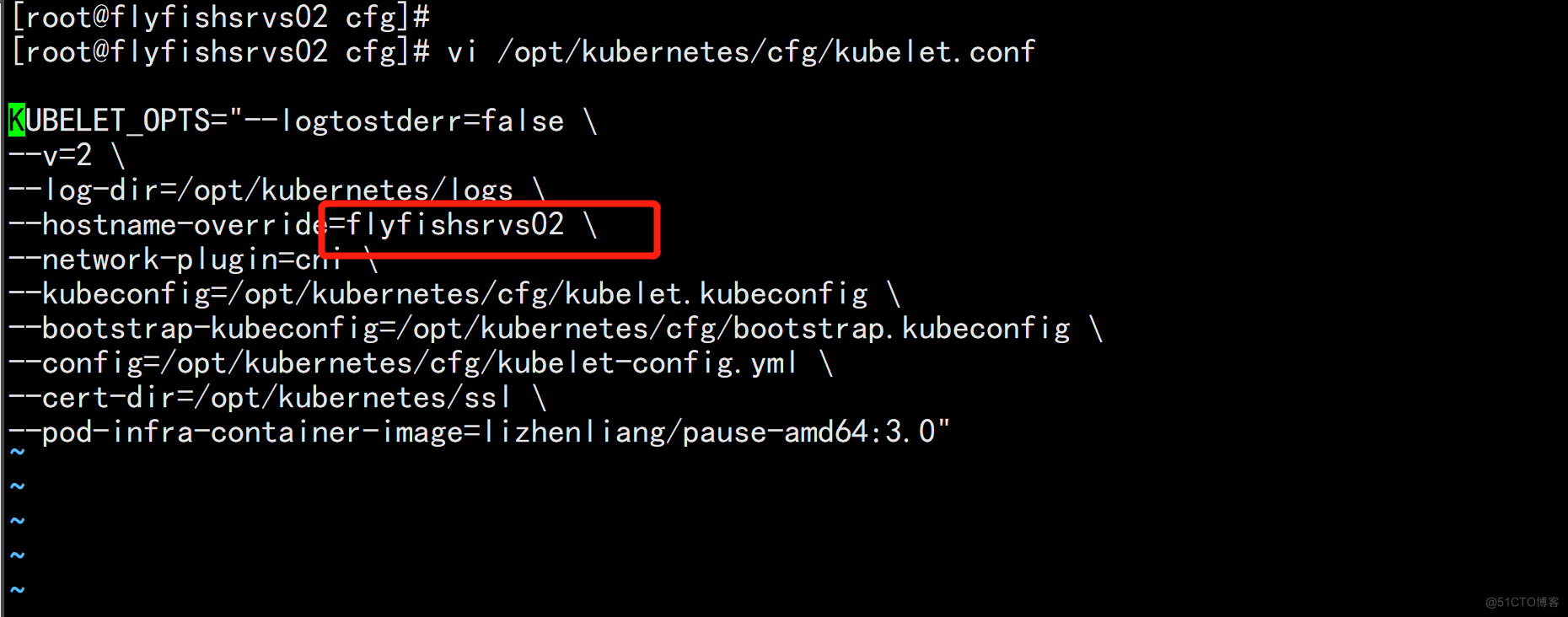

7.3 Change the hostname [use the new node's hostname]

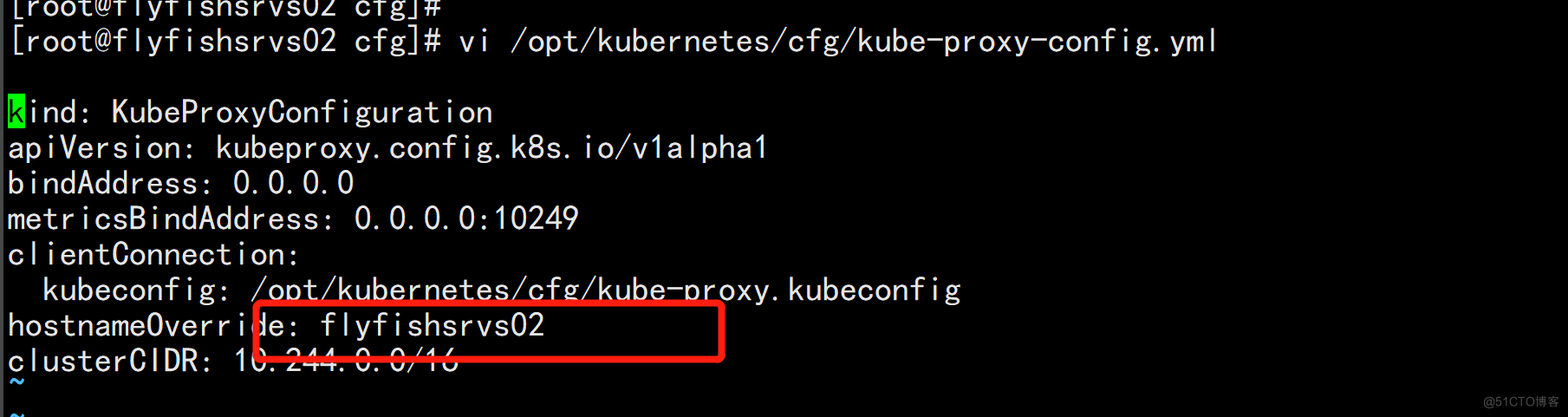

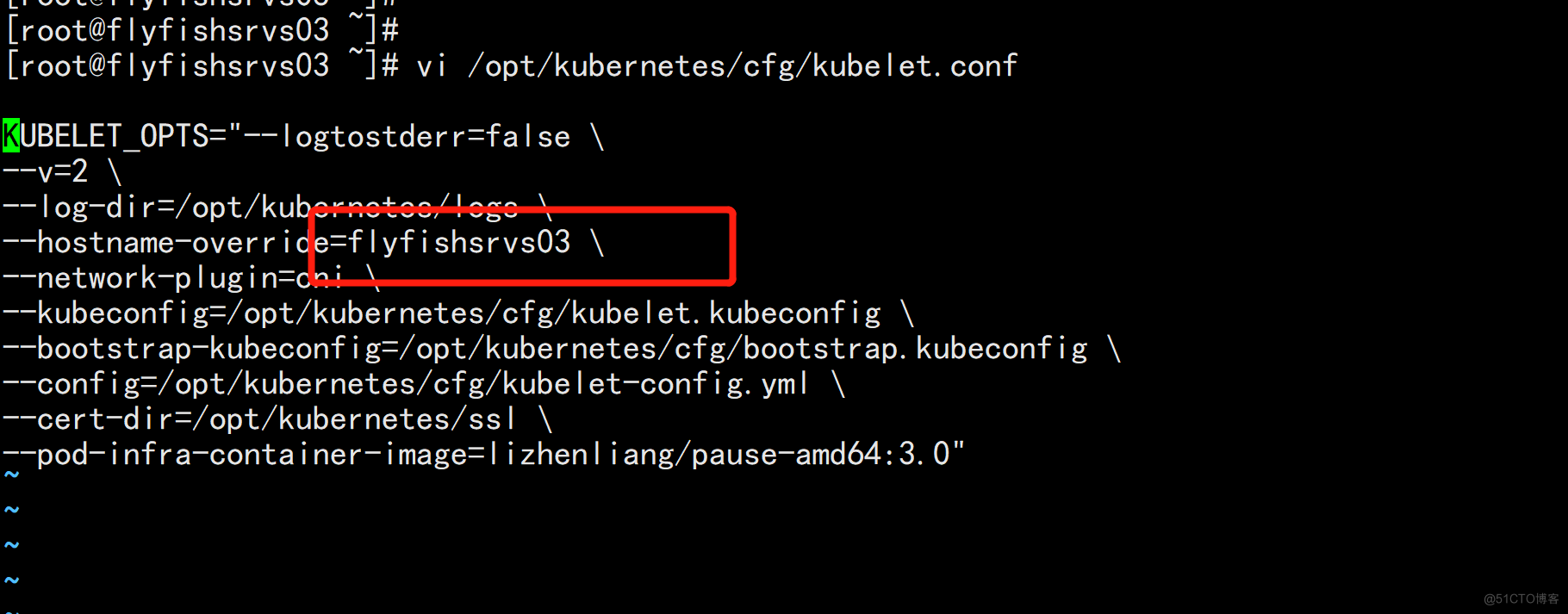

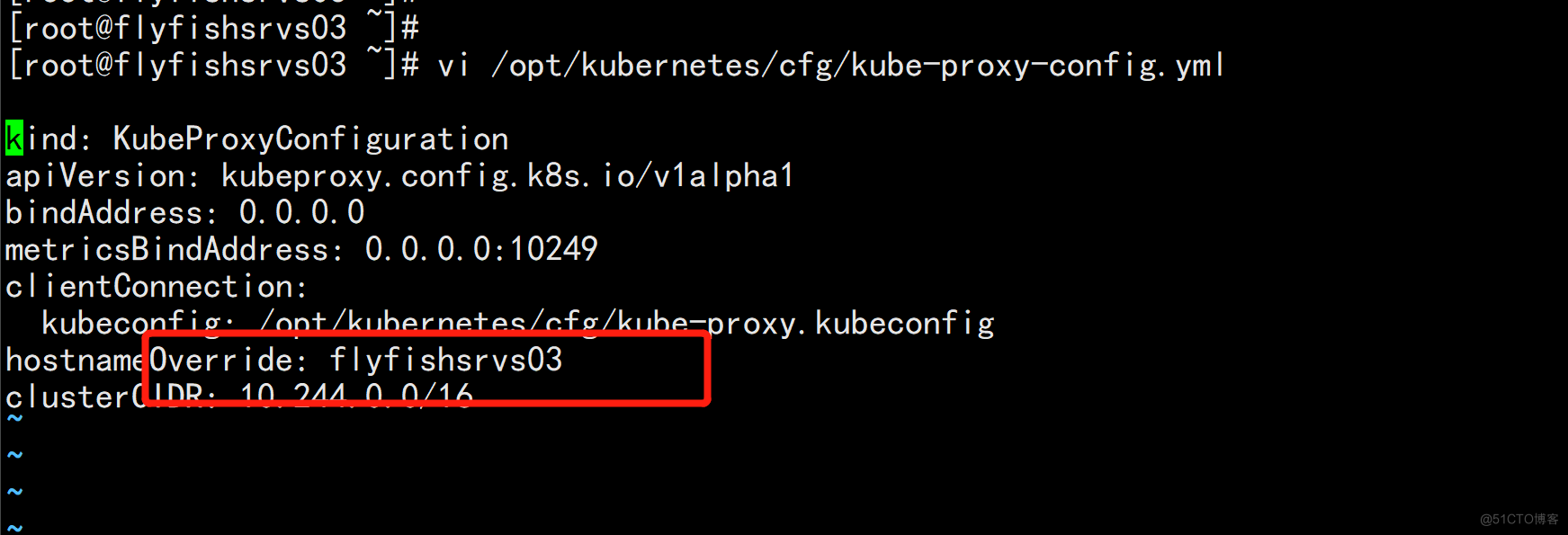

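Edit the kubelet configuration and set the new node's hostname:

    vi /opt/kubernetes/cfg/kubelet.conf
    --hostname-override=flyfishsrvs02

Edit the kube-proxy configuration and set the same hostname:

    vi /opt/kubernetes/cfg/kube-proxy-config.yml
    hostnameOverride: flyfishsrvs02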
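The two hostname edits can also be applied without opening an editor. The sketch below rewrites only the `--hostname-override=` and `hostnameOverride:` values in the files it is given. `set_node_name` is a hypothetical helper, and it assumes the hostname contains no whitespace.

```shell
#!/usr/bin/env bash
# set_node_name NEW FILE...: set the node name in kubelet.conf / kube-proxy-config.yml style files.
# Only the values after --hostname-override= and hostnameOverride: are replaced.
set_node_name() {
  local new="$1" file tmp
  shift
  for file in "$@"; do
    tmp="$(mktemp)"
    # Write to a temp file first, then copy back so the original permissions are kept.
    sed -E "s/(--hostname-override=|hostnameOverride: )[^[:space:]]+/\\1${new}/" "$file" > "$tmp" \
      && cat "$tmp" > "$file"
    rm -f "$tmp"
  done
}

# Example, run on the new node:
# set_node_name flyfishsrvs02 /opt/kubernetes/cfg/kubelet.conf /opt/kubernetes/cfg/kube-proxy-config.yml
```

Because the pattern keys on the setting name rather than on the old hostname, the same call works no matter which node the files were copied from.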

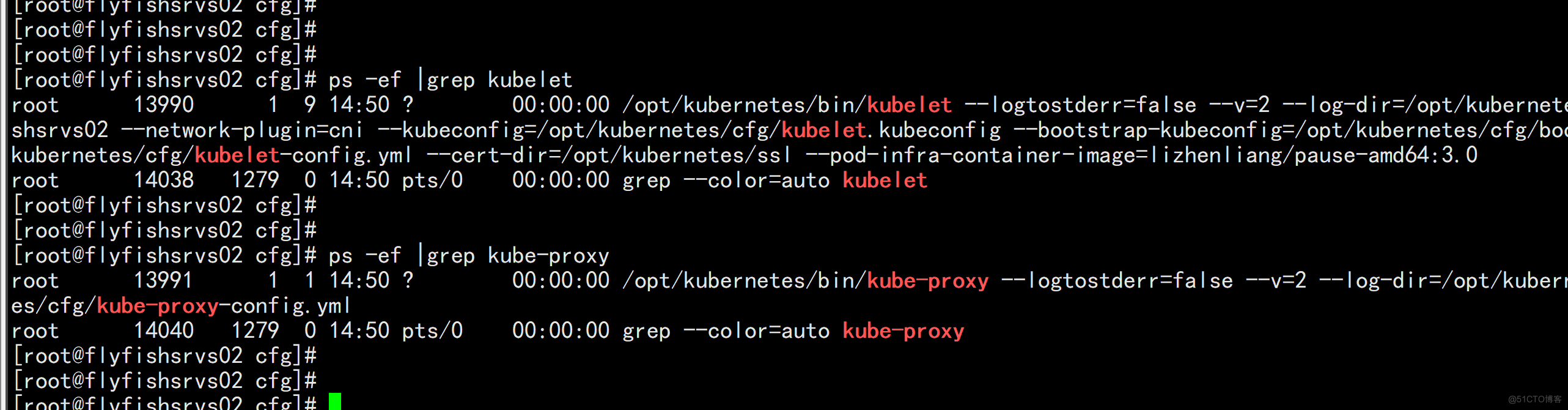

7.4 Start the services and enable them at boot

    systemctl daemon-reload
    systemctl start kubelet kube-proxy
    systemctl enable kubelet kube-proxy

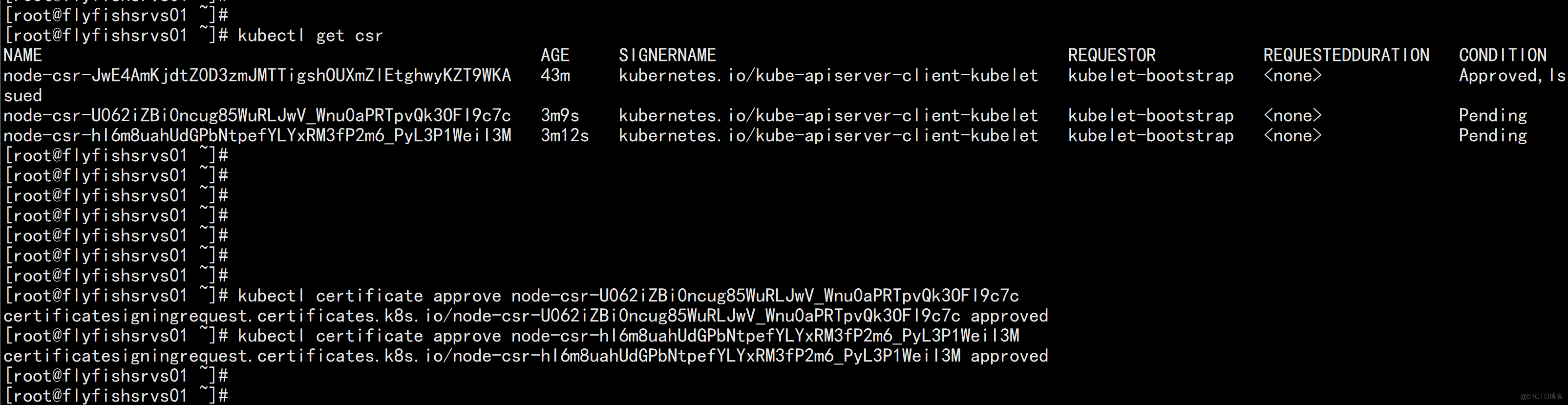

7.5 Approve the new nodes' kubelet certificate requests on the master

    # List the certificate requests
    kubectl get csr
    NAME                                                   AGE     SIGNERNAME                                    REQUESTOR           CONDITION
    node-csr-JwE4AmKjdtZ0D3zmJMTTigshOUXmZlEtghwyKZT9WKA   43m     kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Approved,Issued
    node-csr-U062iZBi0ncug85WuRLJwV_Wnu0aPRTpvQk3OFI9c7c   3m9s    kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending
    node-csr-hI6m8uahUdGPbNtpefYLYxRM3fP2m6_PyL3P1WeiI3M   3m12s   kubernetes.io/kube-apiserver-client-kubelet   kubelet-bootstrap   Pending

    # Approve the requests
    kubectl certificate approve node-csr-U062iZBi0ncug85WuRLJwV_Wnu0aPRTpvQk3OFI9c7c
    kubectl certificate approve node-csr-hI6m8uahUdGPbNtpefYLYxRM3fP2m6_PyL3P1WeiI3M
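With several nodes joining at once, copying each CSR name by hand gets tedious. A minimal sketch that filters the `kubectl get csr` output for requests whose last column is `Pending` and approves them follows. `pending_csrs` is a hypothetical helper, and approving everything that is pending is only appropriate when you have checked that all pending requests come from nodes you expect.

```shell
#!/usr/bin/env bash
# pending_csrs: read `kubectl get csr` output on stdin and print the names
# of requests whose CONDITION (last column) is exactly Pending.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Approve only when kubectl is available on this host.
if command -v kubectl > /dev/null 2>&1; then
  kubectl get csr 2>/dev/null | pending_csrs | while read -r name; do
    kubectl certificate approve "$name"
  done
fi
```

Requests that already show `Approved,Issued` are skipped because the filter matches the whole last field, not a substring.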

7.6 List the cluster nodes

kubectl get node

Part 8: Deploy Dashboard and CoreDNS

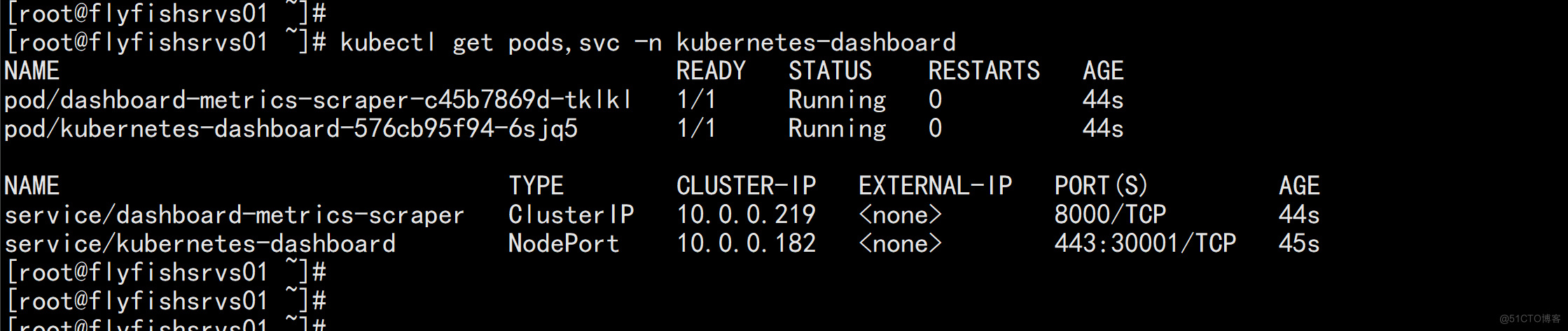

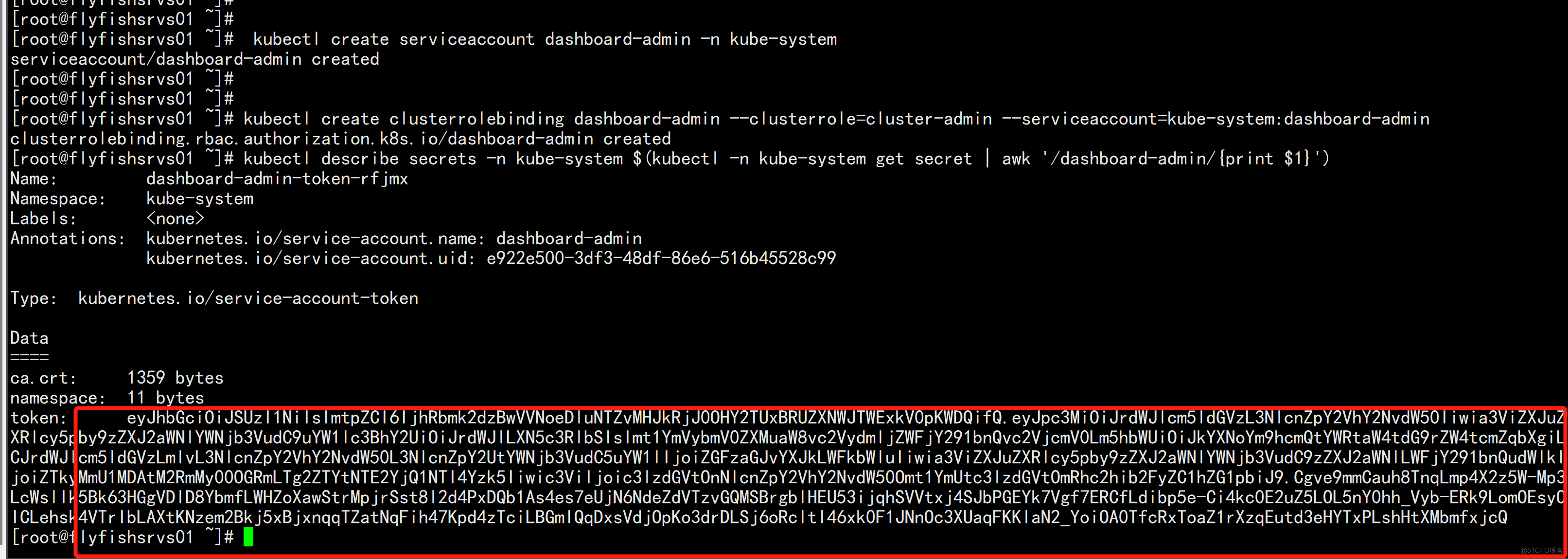

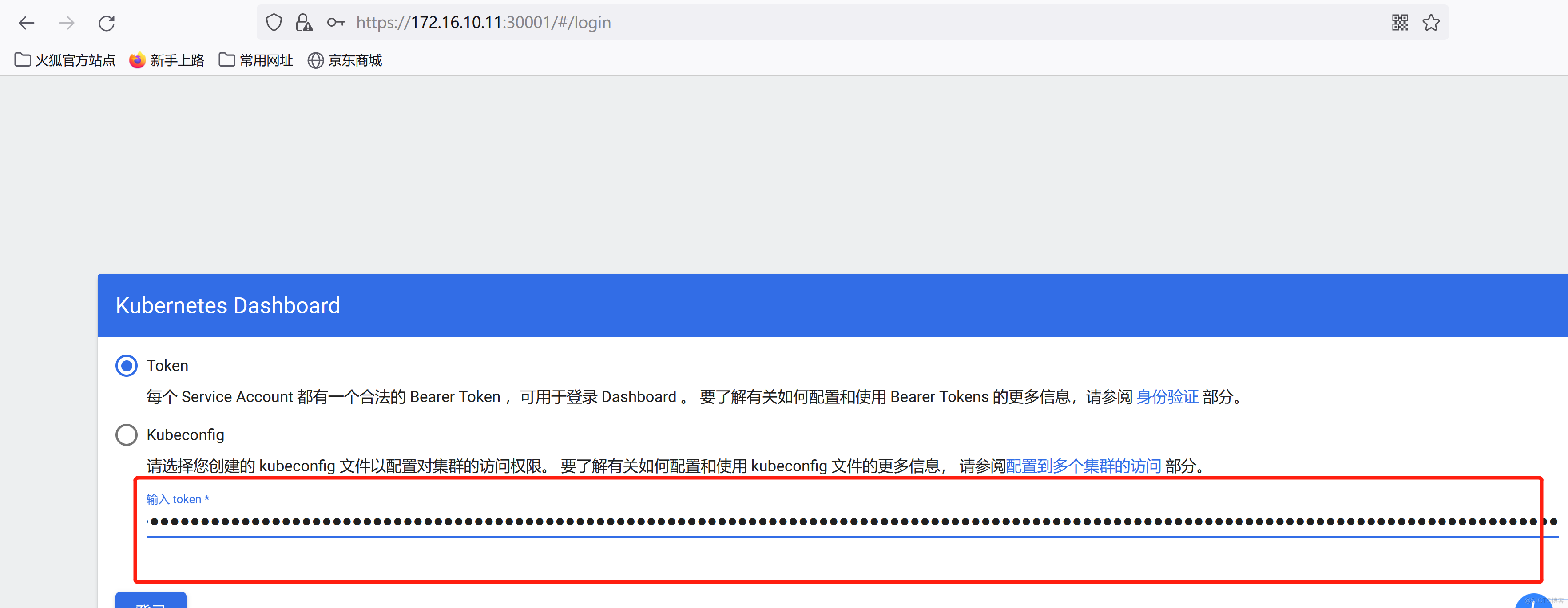

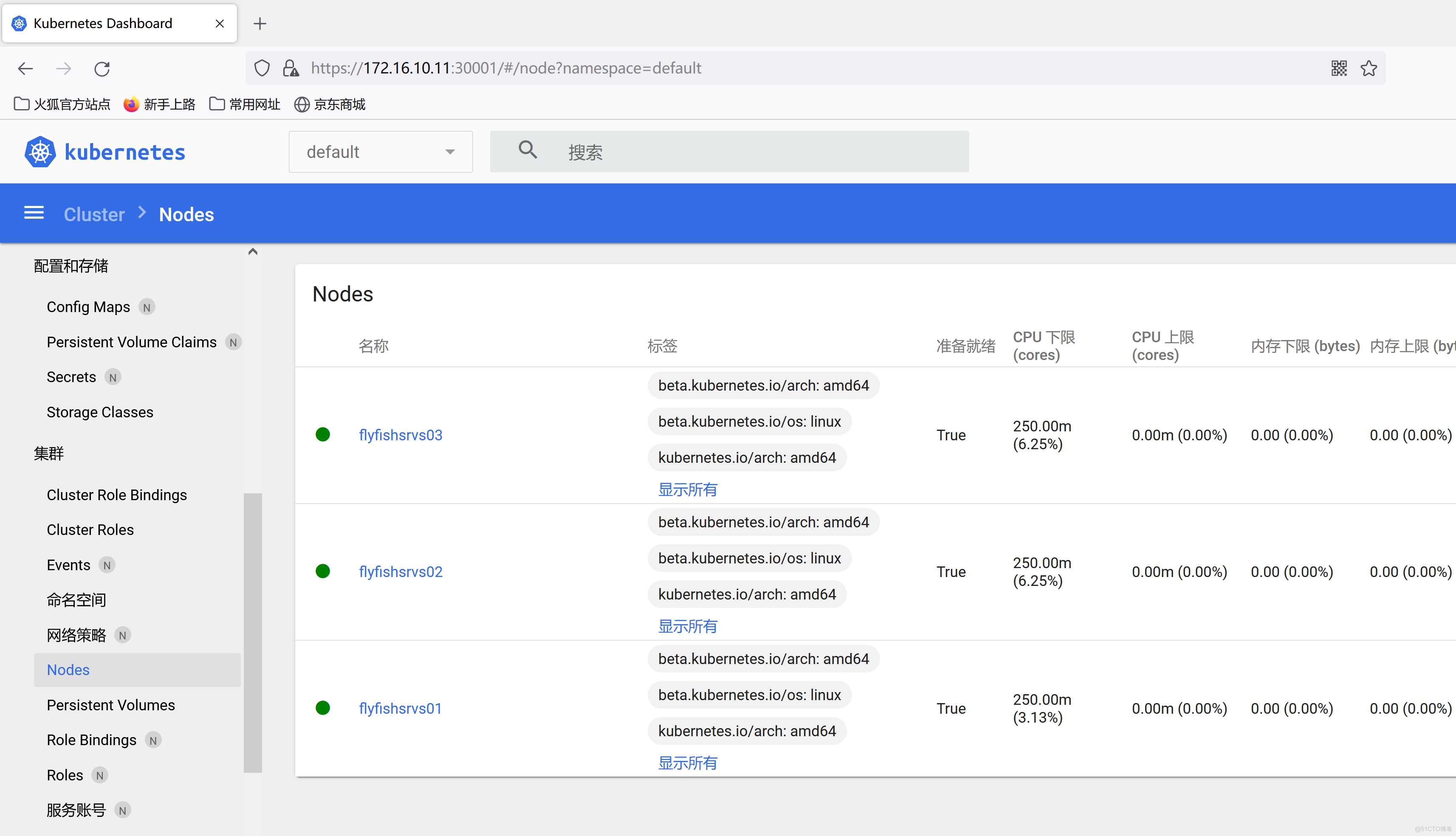

8.1 Deploy Dashboard

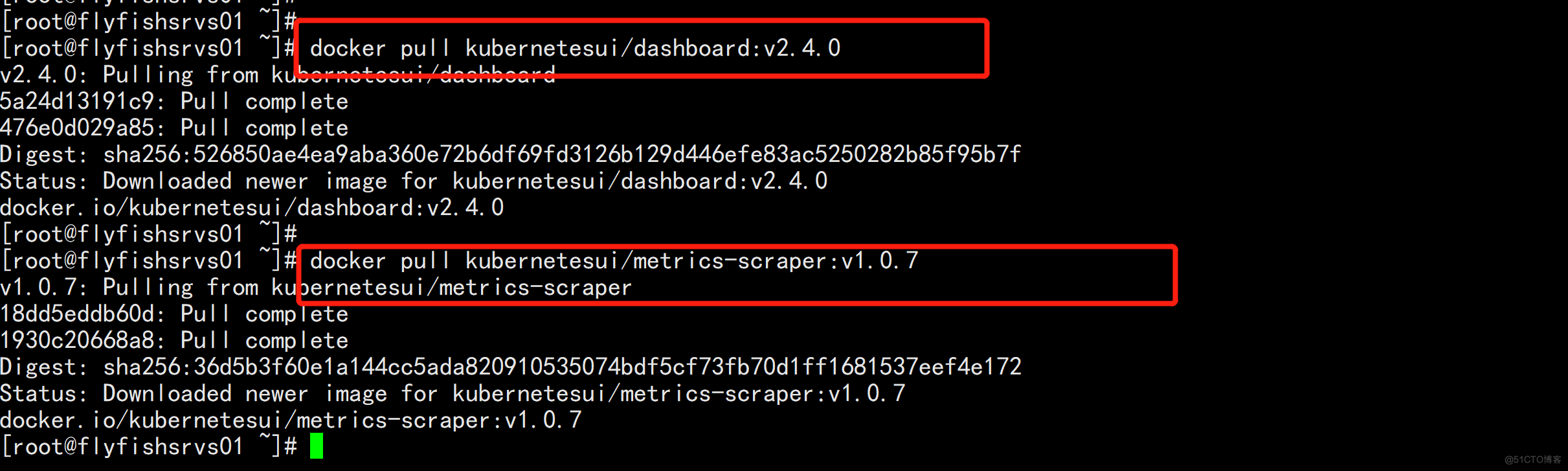

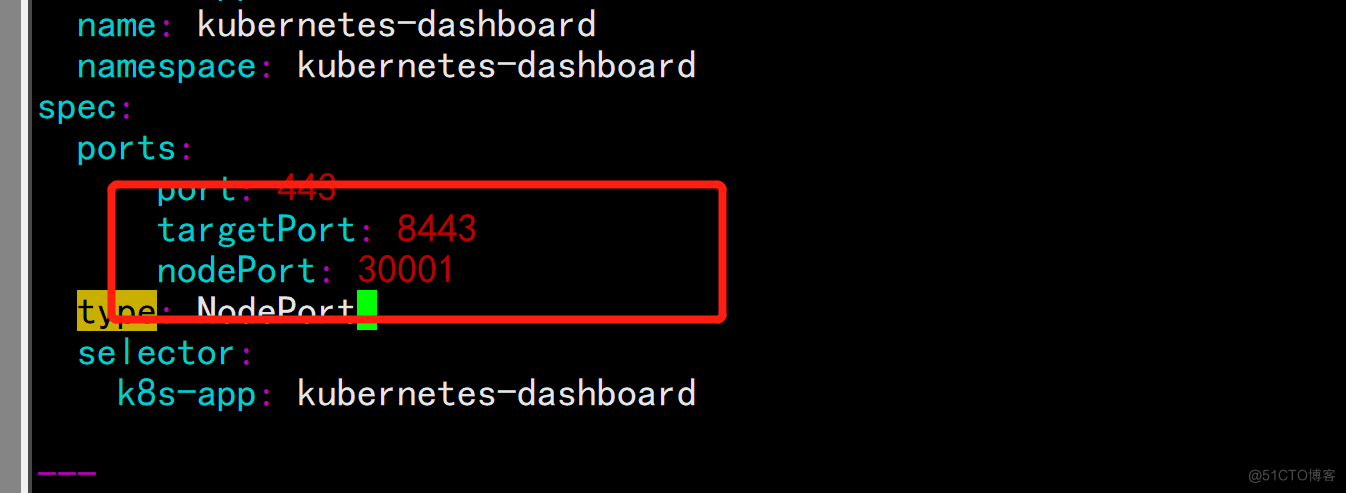

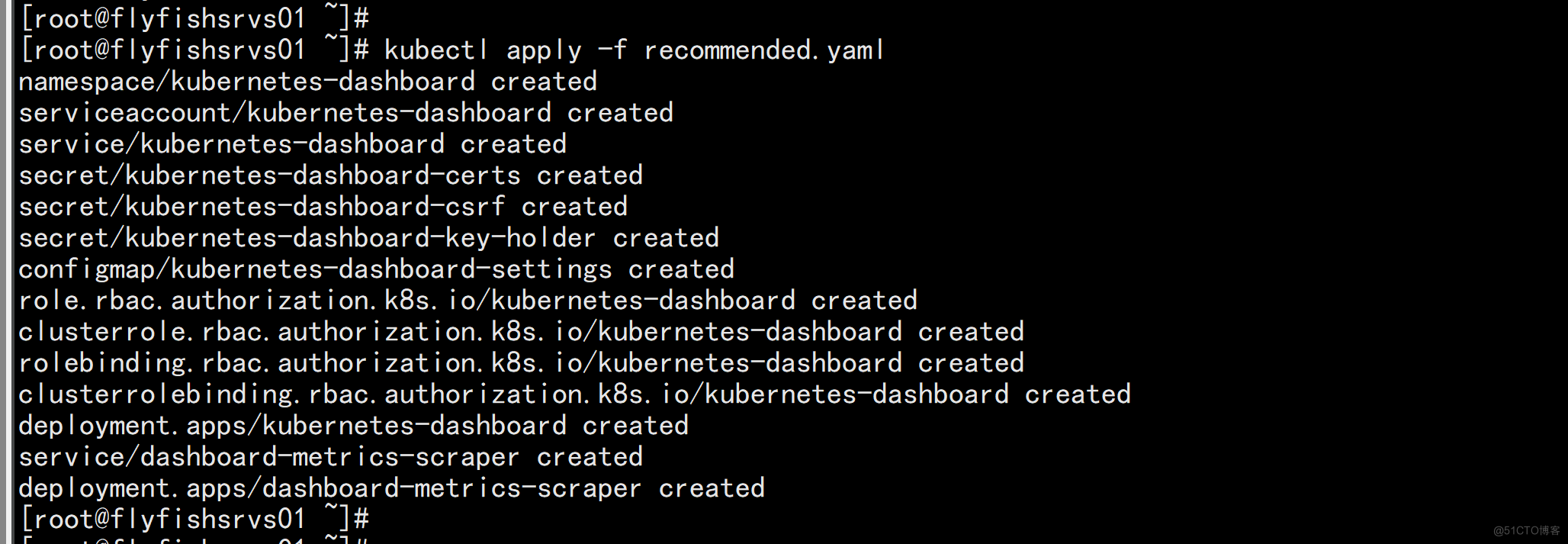

The latest version at the time of writing is v2.4. Reference: https://github.com/kubernetes/dashboard/releases/tag/v2.4.0

    Kubernetes Dashboard: kubernetesui/dashboard:v2.4.0
    Metrics Scraper: kubernetesui/metrics-scraper:v1.0.7

    Installation:
    kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.4.0/aio/deploy/recommended.yaml

Pull the images:

    docker pull kubernetesui/dashboard:v2.4.0
    docker pull kubernetesui/metrics-scraper:v1.0.7

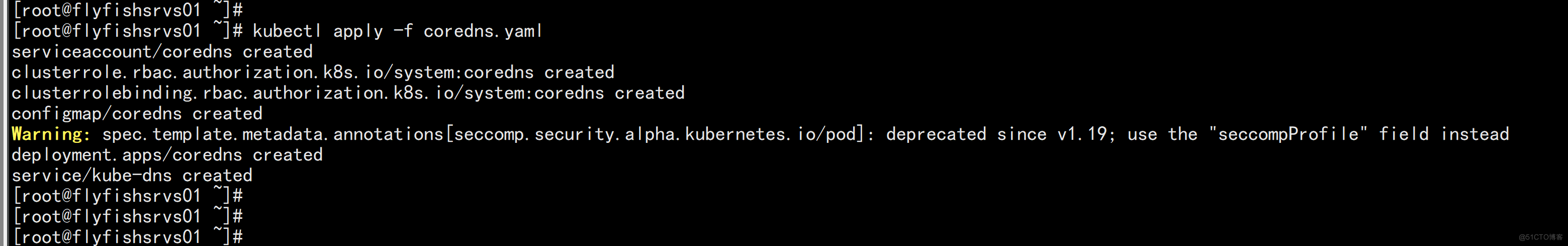

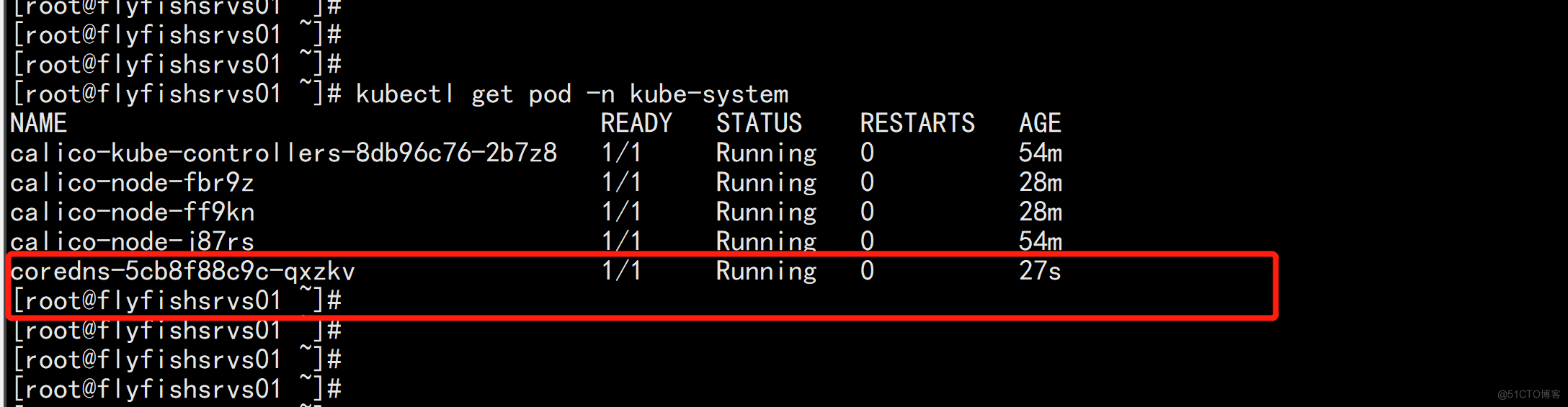

8.2 Deploy CoreDNS

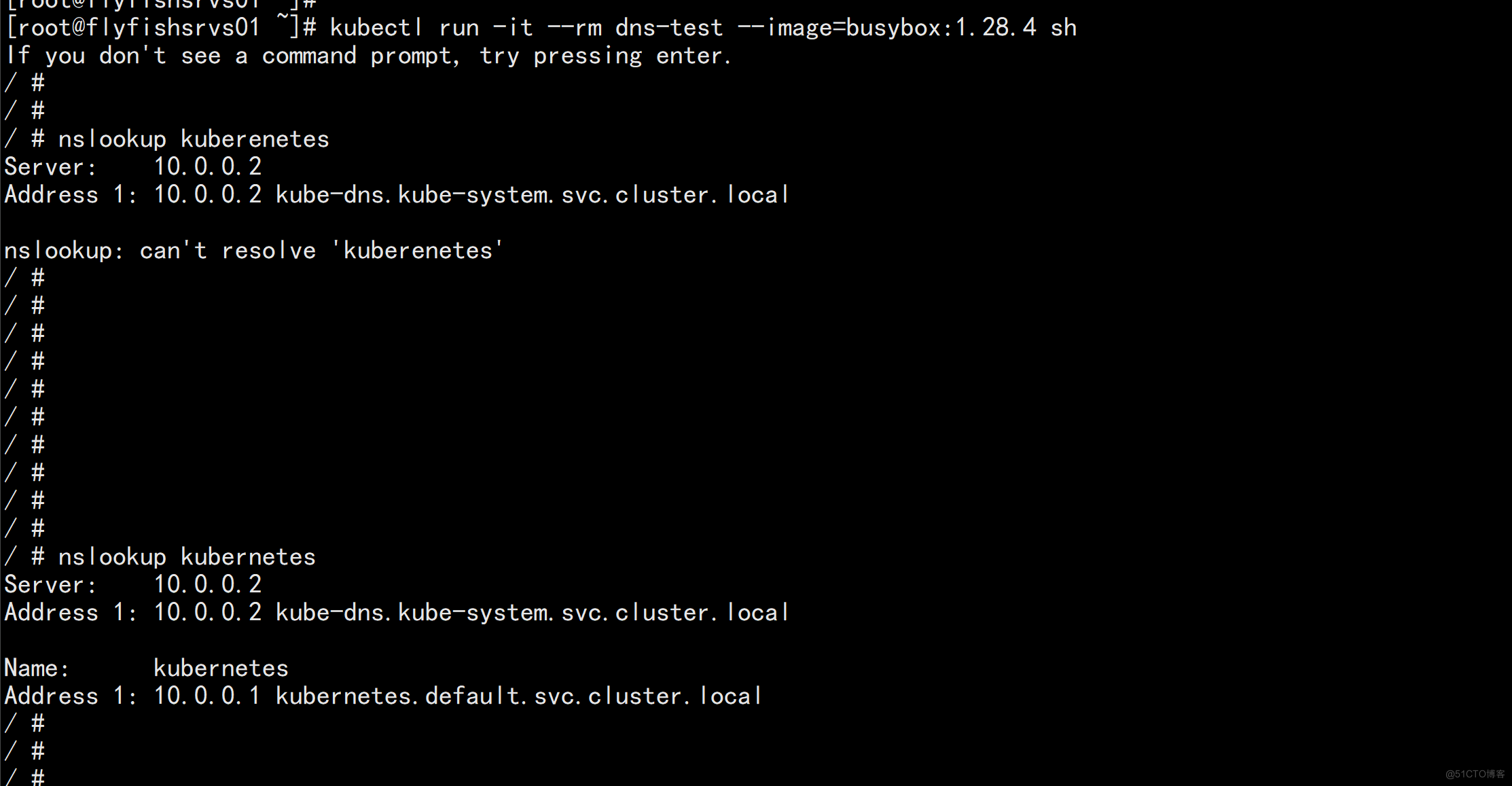

CoreDNS resolves Service names inside the cluster.

    kubectl apply -f coredns.yaml
    kubectl run -it --rm dns-test --image=busybox:1.28.4 sh

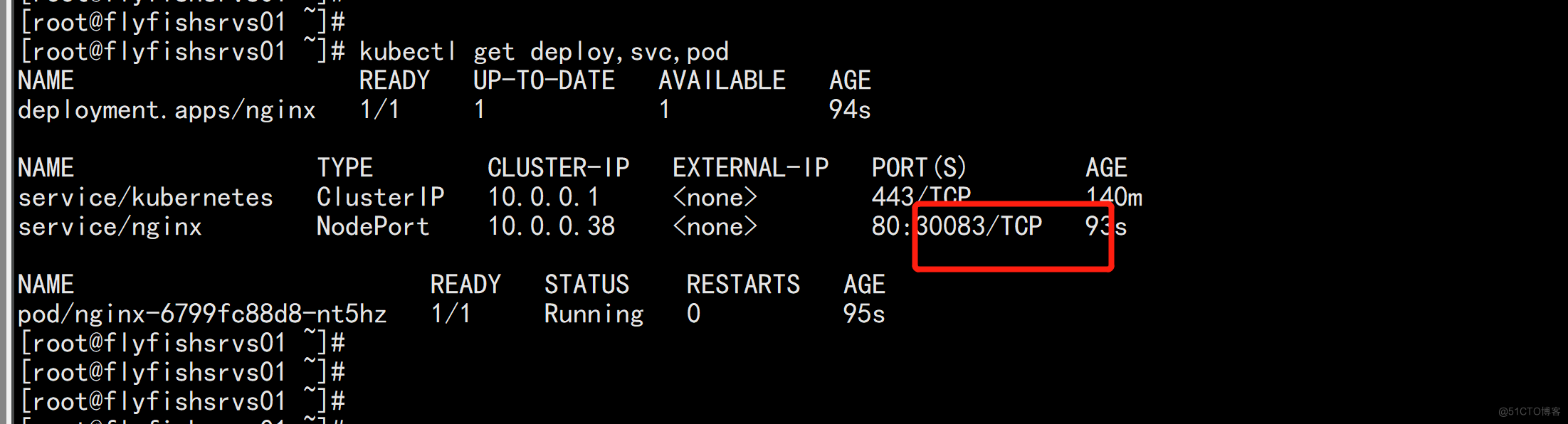

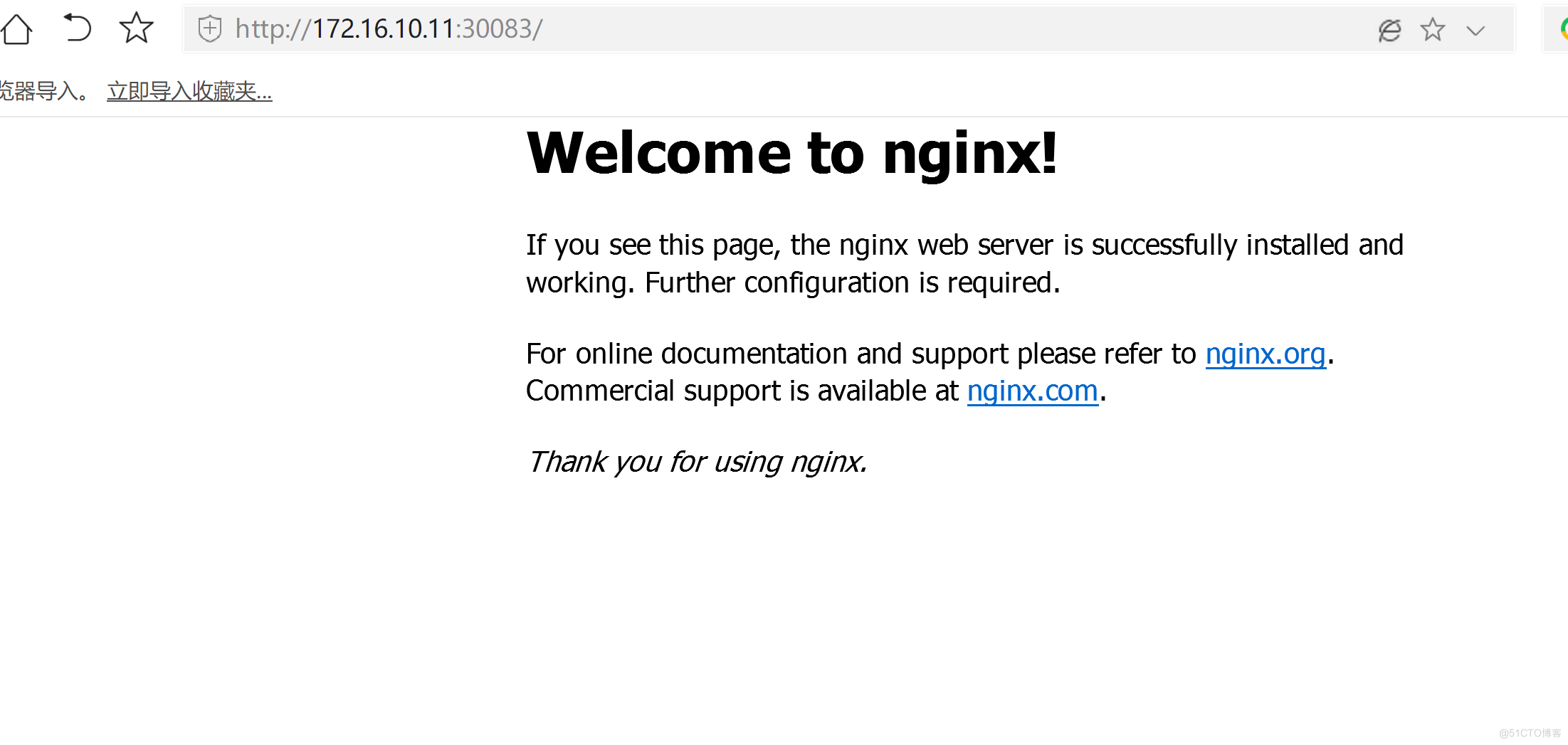

8.3 Create an nginx deployment as a test

    kubectl create deployment nginx --image=nginx
    kubectl expose deployment nginx --port=80 --type=NodePort
    kubectl get deploy,svc,pod
